Best practices for avoiding noisy neighbor issues using cpu manager behaves wrt hyper-threading

Introduction

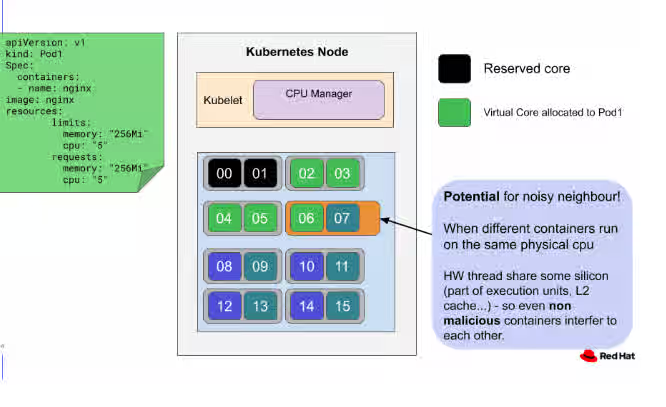

On x86_64 hardware, depending on the node hardware configuration, the entity which kubernetes identifies as “CPU” could be either a physical core, if simultaneous multi-threading is disabled or not available, or a virtual thread, if instead simultaneous multi-threading is enabled.

Simultaneous multithreading (SMT) is a feature of modern x86_64 processors which allows more than one, most commonly two, thread of execution (called “virtual threads” in this document) to run at the same time on the same physical core.

When two independent and possibly uncorrelated threads of execution share the compute resources of a core, like execution pipelines, or cache, they can interfere with each other, accidentally or not. This phenomenon is called “noisy neighbors”, and can lead to unpredictable slowdowns. Latency-sensitive workloads are especially vulnerable and want to prevent this behaviour from happening.

Behaviour up until openshift 4.9/kubernetes 1.22

The CPUManager is a module in the kubelet, a kubernetes component, which allows a container to gain exclusive access to a CPU.

Since its inception in kubernetes 1.8, the CPU manager allocates CPUs to containers requesting exclusive cpus using a Content from github.com is not included.topology-aware best-fit.

The allocation algorithm that cpumanager implements is designed to allocate cores in an efficient way considering the hardware topology of modern cpus.

The allocation algorithm is deterministic and reliable and there is a wide consensus about keeping its properties, but the algorithm proper is not part of API or guaranteed in any way.

Despite all the good and well-known properties, because of this lack of explicit guarantees, the actual algorithm has to be considered an “implementation detail”.

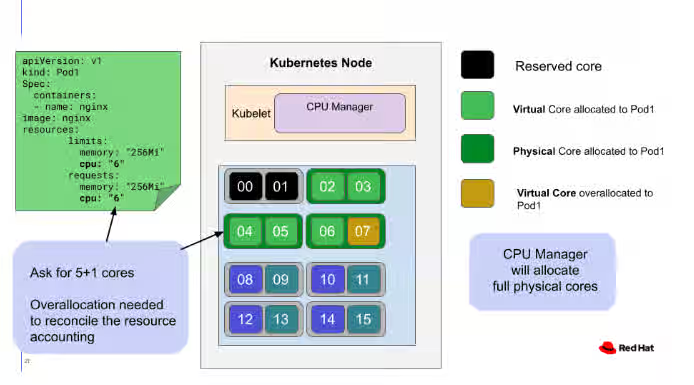

The algorithm implemented in the CPU manager:

- Acquires whole sockets, if available and the container requires at least a socket's-worth of CPUs. If the allocation request is not satisfied,

- Acquires whole cores, aka SMT sets, if available and the container requires at least a core's-worth of CPUs. If the allocation request is not satisfied,

- Acquires single threads, preferring to fill partially-allocated cores on the same sockets as the whole cores we have already taken in this allocation.

Because step 3 above, in order for a workload to prevent physical core sharing and the related noisy-neighbors issue, it is sufficient to request a number of CPUs which is a multiple of the simultaneous-multi-thread level of the machine. All x86_64 processors to date support two threads per core, so this means that the workload must request a number of cpus which is multiple of 2, rounding up if needed.

Due to a fundamental design decision of kubernetes, the kubelet cannot report a resource allocation different from the request. This means the kubelet cannot do the overallocation (rounding up) of cpus to guarantee absence of noisy neighbors. The workload definition must be provisioned explicitly.

Behaviour from openshift 4.10/kubernetes 1.23 and onwards

Starting with kubernetes 1.23, a feature called “cpu manager policy options” will be beta-level and thus visible by default. This feature allows to fine-tune the behaviour of the CPU manager.

One of the first tunable options added is the “full-pcpus-only” which requests CPU manager to allocate only full physical cores to a container requesting exclusive CPUs, or to not admit the workload on the pod. See Content from kubernetes.io is not included.here for more information.

This option formalizes and makes explicit the behaviour of CPU manager, which is now guaranteed by the API, and whose properties described above are now explicit and not anymore only an implementation detail. Furthermore, it is also now explicitly guaranteed that a container requesting exclusive cpus and wants to avoid noisy neighbors will either be admitted with those requirements, or will be rejected.

The workload has still to over allocate the cpus it needs, as the platform could not do that automatically.

Best practices summary

For latency-sensitive guaranteed workloads running on Openshift <= 4.9, and in general:

- performance-addon operator should be used to get a baseline known-good cluster and node configuration

- For guaranteed pods, CPUs should be requested in multiples of the SMT level of your hardware (usually 2), performing overallocation if needed. The CPU Manager will then deterministically allocate CPUs in such a way to avoid possible noisy neighbours.

- Note: another form of contention, like for LLC or memory access, may still cause noisy neighbours effect, but handling these resources is out of scope for the CPU manager and are not of the same order of magnitude as sharing the core units between the two virtual threads.

For latency-sensitive guaranteed workloads running on Openshift >= 4.10:

- All the recommendations for openshift <= 4.10 still hold

- The performance-addon operator will automatically configure the CPU manager to reject guaranteed containers whose allocation cannot be fulfilled using full physical cores.

- Review this configuration.

- Be prepared to handle pod rejections for SMTAlignmentError.