Procedure to transition from SiteConfig CRs to the ClusterInstance API

This engineering guide outlines the concrete steps to migrate single-node OpenShift (SNO) clusters that were installed using SiteConfig CRs to the new ClusterInstance API, which is now part of the GitOps ZTP workflow.

NOTE: For an article focused on incrementally transitioning OpenShift clusters while ZTPv1 and ZTPv2 configurations coexist, see the following Knowledgebase article: https://access.redhat.com/articles/7124143. That article details the sequential steps required to modify configurations in Git and ArgoCD, ensuring a smooth and controlled transition. It also covers special scenarios such as installing new OCP clusters, multi-node OpenShift (MNO) clusters, and redeploying transitioned clusters.

Why should I transition to ClusterInstance CRs?

The ClusterInstance definition, available in ACM since v2.12 and heavily inspired by the siteconfig-generator library, not only provides a unified and more generic way to define the installation of a cluster, but also overcomes important limitations of its predecessor.

From a deployment perspective, this new iteration of SiteConfig is a full-fledged controller shipped as an addon within the Red Hat ACM product lifecycle, whereas previously it was implemented as a Kustomize plugin integrated within ArgoCD.

Additionally, the previous SiteConfig CRs (i.e., the ran.openshift.io/v1 group) are deprecated from OCP v4.18 onwards (and removed in OCP v4.21), and the new ClusterInstance API provides several technical advantages, which are described in the SiteConfig addon chapter of the ACM v2.12 documentation.

Prerequisites

This guide makes the following assumptions:

- You have logged in to the hub cluster as a user with cluster-admin privileges.
- You have previously configured your GitOps ZTP environment successfully.
- You have installed and configured the Assisted Installer service successfully.

First, ensure that the target ManagedCluster(s) are no longer deploying. To that end, check the deployment status of the managed cluster(s):

-> export TARGET_CLUSTER=sno3
-> oc get ManagedCluster ${TARGET_CLUSTER}
NAME   HUB ACCEPTED   MANAGED CLUSTER URLS                     JOINED   AVAILABLE   AGE
sno3   true           https://api.sno3.inbound.bos2.lab:6443   True     True        14d

As shown, the target cluster(s) should have True in the JOINED and AVAILABLE columns. This indicates that the deployment succeeded and that the cluster has already joined the ACM hub.
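When several clusters are migrated, this check can be scripted. The sketch below reads the ManagedCluster conditions with an oc JSONPath query. It assumes that the JOINED and AVAILABLE columns map to the ManagedClusterJoined and ManagedClusterConditionAvailable condition types of the open-cluster-management API. The function name is hypothetical.

```shell
# Hypothetical helper: succeed only when the ManagedCluster reports
# JOINED=True and AVAILABLE=True. The condition type names are assumptions
# based on the open-cluster-management ManagedCluster API.
managed_cluster_ready() {
  local cluster="$1" joined available
  joined=$(oc get managedcluster "$cluster" \
    -o jsonpath='{.status.conditions[?(@.type=="ManagedClusterJoined")].status}')
  available=$(oc get managedcluster "$cluster" \
    -o jsonpath='{.status.conditions[?(@.type=="ManagedClusterConditionAvailable")].status}')
  echo "${cluster}: joined=${joined:-unknown} available=${available:-unknown}"
  [ "$joined" = "True" ] && [ "$available" = "True" ]
}
```

For example, `managed_cluster_ready "${TARGET_CLUSTER}" || echo "do not migrate ${TARGET_CLUSTER} yet"`.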

IMPORTANT NOTE: It is also recommended to delete the ArgoCD AppProject and Application objects that point to the deprecated SiteConfig CRs in the hub cluster. This avoids unexpected syncs or app triggers during the migration.

Delete ArgoCD AppProject

-> oc -n openshift-gitops get appprojects.argoproj.io
NAME                 AGE
default              426d
policy-app-project   426d
ztp-app-project      426d

-> oc -n openshift-gitops delete appprojects.argoproj.io ztp-app-project
appproject.argoproj.io "ztp-app-project" deleted

Delete ArgoCD App

IMPORTANT NOTE: Argo CD supports both "cascaded" and "non-cascaded" deletes (see the upstream Argo CD docs). A cascaded delete also removes the resources managed by the App, so make sure you perform a "non-cascaded" deletion. FIRST check that the App's finalizer is unset, and only then delete the ArgoCD App.

-> oc -n openshift-gitops get applications.argoproj.io
NAME       SYNC STATUS   HEALTH STATUS
clusters   Synced        Healthy
policies   Synced        Healthy

-> oc -n openshift-gitops get applications.argoproj.io clusters -ojson | jq .metadata.finalizers
null

-> oc -n openshift-gitops delete applications.argoproj.io clusters
application.argoproj.io "clusters" deleted
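If the finalizer is NOT null, it usually contains resources-finalizer.argocd.argoproj.io, which is the Argo CD finalizer that triggers a cascaded deletion. Removing the finalizer before the delete turns the operation into a non-cascaded one. The following sketch uses standard oc JSONPath and JSON-patch syntax. The function name is hypothetical, so try it on a non-critical App first.

```shell
# Hypothetical helper: delete an ArgoCD Application WITHOUT cascading to the
# resources it manages, by clearing any finalizers before the delete.
delete_app_non_cascaded() {
  local ns="$1" app="$2" finalizers
  finalizers=$(oc -n "$ns" get applications.argoproj.io "$app" \
    -o jsonpath='{.metadata.finalizers}')
  if [ -n "$finalizers" ]; then
    echo "Removing finalizers from ${app}: ${finalizers}"
    # A JSON patch "remove" fails when the path does not exist, so it only
    # runs when finalizers are actually present.
    oc -n "$ns" patch applications.argoproj.io "$app" --type json \
      -p '[{"op":"remove","path":"/metadata/finalizers"}]' || return 1
  fi
  oc -n "$ns" delete applications.argoproj.io "$app"
}
```

For example, `delete_app_non_cascaded openshift-gitops clusters`.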

Prepare Git Repository

Because the GitOps operator continuously deploys to the hub cluster, the next step is to prepare the new ClusterInstance CRs in Git (see the upstream dev docs).

The recommended Git structure for hosting the ClusterInstance CRs remains the same as before. In this guide, we created a separate clusters folder within the project structure to host the ClusterInstance CRs. However, multiple clusters can be grouped in the same directory.

Clusters folder with the resources needed for the sno3 cluster

-> tree clusters/sno3
clusters/sno3
├── clusterinstance.yaml
├── extra-manifests
│   └── master-core-password.yaml
├── kustomization.yaml
├── ns.yaml
└── secrets.yaml

1 directory, 5 files

NOTE: The Git structure requirements for ClusterInstance CRs are very similar to those of the previous SiteConfig CRs. For demonstration purposes, this guide uses a separate sno3 subfolder, but as mentioned above, multiple clusters can be grouped in the same directory.

Kustomization file

-> cat clusters/sno3/kustomization.yaml
---
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - ns.yaml
  - secrets.yaml
  - clusterinstance.yaml
configMapGenerator:
  - files:
      - extra-manifests/master-core-password.yaml
    name: extra-manifests-cm
    namespace: sno3
generatorOptions:
  disableNameSuffixHash: true
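Before committing, the Kustomize output can be rendered locally, for example with oc kustomize clusters/sno3. This confirms that, thanks to disableNameSuffixHash, the generated ConfigMap keeps the stable name extra-manifests-cm. The helper below is hypothetical and deliberately naive. It only reads top-level kind lines and the name directly under metadata, so it is not a YAML parser.

```shell
# Hypothetical helper: print "Kind/name" for every document of a
# multi-document YAML stream (e.g. "oc kustomize" output). Naive on purpose:
# only top-level "kind:" lines and "  name:" directly under "metadata:".
rendered_objects() {
  awk '
    function flush() {
      gsub(/"/, "", name)
      if (kind != "") print kind "/" name
      kind = ""; name = ""; in_meta = 0
    }
    /^---/                { flush(); next }                     # document separator
    /^[^ \t#-]/           { in_meta = ($0 ~ /^metadata:/) }     # top-level key
    /^kind:/              { kind = $2 }
    in_meta && /^  name:/ { name = $2 }
    END                   { flush() }
  '
}
```

For example, `oc kustomize clusters/sno3 | rendered_objects` should list the Namespace, both Secrets, the ClusterInstance, and ConfigMap/extra-manifests-cm.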

Namespace resource

-> cat clusters/sno3/ns.yaml
---
apiVersion: v1
kind: Namespace
metadata:
  name: sno3

Secret resources

-> cat clusters/sno3/secrets.yaml
---
apiVersion: v1
kind: Secret
metadata:
  name: sno3-worker
  namespace: sno3
data:
  username: "<STRING_BASE64_ENCODED>"
  password: "<STRING_BASE64_ENCODED>"
---
apiVersion: v1
kind: Secret
metadata:
  name: assisted-deployment-pull-secret
  namespace: sno3
data:
  .dockerconfigjson: "<STRING_BASE64_ENCODED>"
type: Opaque
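The values under data must be base64-encoded. A common mistake is to encode a trailing newline (for example with echo), which then ends up inside the decoded credential. Below is a minimal sketch using printf and the coreutils base64 tool, with line wrapping removed by tr for portability. The helper names and the pull-secret.json path in the usage note are hypothetical.

```shell
# Hypothetical helper: base64-encode a string with no trailing newline and
# no line wrapping, suitable for a Secret "data" field.
b64() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Same, for the exact bytes of a file (e.g. a pull secret JSON file).
b64_file() {
  base64 < "$1" | tr -d '\n'
}
```

For example, `b64 'admin'` prints YWRtaW4=, and `b64_file ./pull-secret.json` prints the value for .dockerconfigjson.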

ClusterInstance resource

IMPORTANT NOTE: When porting the cluster attributes from the deprecated SiteConfig CRs to the new ClusterInstance resource (see the upstream API definition), make sure that all matching cluster fields (e.g., MAC addresses, networking configuration, root disk, capabilities) have IDENTICAL settings. Otherwise, the SiteConfig controller will fail to validate the rendered deployment manifests.

-> cat clusters/sno3/clusterinstance.yaml
---
apiVersion: siteconfig.open-cluster-management.io/v1alpha1
kind: ClusterInstance
metadata:
  name: "sno3"
  namespace: "sno3"
spec:
  additionalNTPSources:
    - "2600:52:7:59::11"
  baseDomain: "inbound.bos2.lab"
  clusterImageSetNameRef: "openshift-4.18.0-ec.4"
  clusterName: "sno3"
  clusterNetwork:
    - cidr: "fd01::/48"
      hostPrefix: 64
  cpuPartitioningMode: AllNodes
  extraLabels:
    ManagedCluster:
      siteName: "vdu-mb"
      group-du-sno: ""
      logicalGroup: "active"
      common-418-disconnected: "false"
      common-418-resource-usage-test: ""
  holdInstallation: false
  installConfigOverrides: |
    {
      "capabilities": {
        "baselineCapabilitySet": "None",
        "additionalEnabledCapabilities": [
          "NodeTuning",
          "OperatorLifecycleManager",
          "Ingress"
        ]
      }
    }
  machineNetwork:
    - cidr: "2600:52:7:59::/64"
  networkType: "OVNKubernetes"
  proxy:
    httpProxy: http://jumphost.inbound.bos2.lab:3128
    httpsProxy: http://jumphost.inbound.bos2.lab:3128
    noProxy: .hub.inbound.bos2.lab,9999,5050,6385,8089,6180,6185
  pullSecretRef:
    name: "assisted-deployment-pull-secret"
  serviceNetwork:
    - cidr: "fd02::/112"
  sshPublicKey: "<STRING_BASE64_ENCODED>"
  templateRefs:
    - name: ai-cluster-templates-v1
      namespace: open-cluster-management
  nodes:
    - automatedCleaningMode: "disabled"
      bmcAddress: "redfish-virtualmedia://192.168.13.144/redfish/v1/Systems/Self"
      bmcCredentialsName:
        name: "sno3-worker"
      bootMACAddress: "b4:96:91:b6:58:28"
      bootMode: "UEFI"
      hostName: "sno3.inbound.bos2.lab"
      ignitionConfigOverride: "<STRING_REDACTED>"
      nodeNetwork:
        interfaces:
          - name: "ens2f0"
            macAddress: "b4:96:91:b6:58:28"
        config:
          interfaces:
            - name: ens2f0
              type: ethernet
              state: up
              ipv4:
                enabled: false
                dhcp: false
              ipv6:
                address:
                  - ip: 2600:52:7:59::300
                    prefix-length: 64
                dhcp: false
                autoconf: false
                enabled: true
            - name: ens2f1
              type: ethernet
              state: down
              ipv4:
                enabled: false
                dhcp: false
              ipv6:
                dhcp: false
                autoconf: false
                enabled: false
            - name: ens1f1
              type: ethernet
              state: down
              ipv4:
                enabled: false
                dhcp: false
              ipv6:
                dhcp: false
                autoconf: false
                enabled: false
          dns-resolver:
            config:
              server:
                - 2600:52:7:59::11
          routes:
            config:
              - destination: '::/0'
                next-hop-address: '2600:52:7:59::1'
                next-hop-interface: ens2f0
      role: "master"
      rootDeviceHints:
        deviceName: "/dev/disk/by-path/pci-0000:c3:00.0-nvme-1"
      templateRefs:
        - name: ai-node-templates-v1
          namespace: open-cluster-management

Even though the ClusterInstance specification is inspired by the previous SiteConfig CRs, the two objects have important differences. Therefore, it is critical that users carefully translate their existing configurations into the new ClusterInstance definition.

NOTE: As of this writing, Red Hat has no plans to support a tool that performs this translation automatically. It must be performed by the user.
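Because the translation is manual, a quick comparison against the objects that already exist on the hub can catch copy-paste mistakes before Git is updated. The sketch below compares the bootMACAddress values in a local ClusterInstance file with those of the BareMetalHost objects in the cluster namespace (sno3 here). The helpers are hypothetical. They only cover boot MAC addresses, not networking, root disk, or capabilities, and the grep-based extraction assumes one bootMACAddress key per line.

```shell
# Hypothetical sanity check: compare the bootMACAddress values declared in a
# ClusterInstance file with those of the BareMetalHosts already on the hub.

# Print bootMACAddress values from a YAML file, unquoted and lower-cased.
file_boot_macs() {
  grep -E '^[[:space:]]*(-[[:space:]]+)?bootMACAddress:' "$1" \
    | sed -E 's/^[[:space:]]*(-[[:space:]]+)?bootMACAddress:[[:space:]]*//; s/[[:space:]]+$//' \
    | tr -d "\"'" | tr 'A-F' 'a-f' | sort -u
}

# Print spec.bootMACAddress of every BareMetalHost in a namespace, lower-cased.
live_boot_macs() {
  oc -n "$1" get baremetalhosts.metal3.io \
    -o jsonpath='{range .items[*]}{.spec.bootMACAddress}{"\n"}{end}' \
    | sed '/^$/d' | tr 'A-F' 'a-f' | sort -u
}

# Succeed when both sets are identical; otherwise print the differences
# ("<" lines come from the hub, ">" lines from the file).
check_boot_macs() {
  local file="$1" ns="$2" want have rc
  want=$(mktemp)
  have=$(mktemp)
  file_boot_macs "$file" > "$want"
  live_boot_macs "$ns" > "$have"
  if diff "$have" "$want"; then rc=0; else rc=1; fi
  rm -f "$want" "$have"
  return "$rc"
}
```

For example, `check_boot_macs clusters/sno3/clusterinstance.yaml sno3`.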

Extra-manifest resources (optional)

-> cat clusters/sno3/extra-manifests/master-core-password.yaml
---
apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfig
metadata:
  name: master-core-password
  labels:
    machineconfiguration.openshift.io/role: master
spec:
  config:
    ignition:
      version: 3.2.0
    systemd:
      units:
        - name: core-password.service
          enabled: true
          contents: |
            [Unit]
            Description=Changes core password
            [Service]
            Type=oneshot
            ExecStart=/bin/bash -c "echo <USER>:<PASSWD> | chpasswd"
            [Install]
            WantedBy=multi-user.target

Extra-manifest resources can be defined in two main ways (see the upstream dev docs). For demonstration purposes, this guide generates an extra-manifests ConfigMap using Kustomize.

Once the Git repository is in place, let’s continue with the transition of the platform components in the Hub cluster.

Migrate Platform Components

Enable SiteConfig addon

Check that the ACM instance in the hub cluster meets the minimum version required by the SiteConfig addon (ACM v2.12), and upgrade it if required. After the ACM upgrade, MCE should be upgraded automatically to 2.7.z, according to the ACM support matrix.

Check SiteConfig addon availability in ACM

-> export RHACM_NAMESPACE=open-cluster-management
-> oc -n ${RHACM_NAMESPACE} get mch multiclusterhub -ojson | jq '.spec.overrides.components[] | select(.name == "siteconfig")'
{
  "configOverrides": {},
  "enabled": false,
  "name": "siteconfig"
}

NOTE: Recent versions of ACM deploy their resources in the rhacm namespace. If you have an older deployment (e.g., this guide started from ACM v2.10), the namespace can be different, for instance open-cluster-management.

Enable SiteConfig addon

-> oc -n ${RHACM_NAMESPACE} patch multiclusterhubs.operator.open-cluster-management.io multiclusterhub --type json --patch '[{"op": "add", "path":"/spec/overrides/components/-", "value": {"name":"siteconfig","enabled": true}}]'
multiclusterhub.operator.open-cluster-management.io/multiclusterhub patched

Check SiteConfig controller deployment

-> oc -n ${RHACM_NAMESPACE} get po | grep siteconfig
siteconfig-controller-manager-6c864fb6b9-kvbv9   2/2   Running   0     43s

Check SiteConfig templates

-> oc -n ${RHACM_NAMESPACE} get cm | grep "templates"
ai-cluster-templates-v1    5      80s
ai-node-templates-v1       2      80s
ibi-cluster-templates-v1   3      80s
ibi-node-templates-v1      3      80s
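Instead of polling with grep, the controller rollout can be awaited with oc wait before the template ConfigMaps are checked. In the sketch below, the Deployment name siteconfig-controller-manager is an assumption inferred from the pod name above. Only the ai-* templates referenced by the ClusterInstance in this guide are checked. The function name is hypothetical.

```shell
# Hypothetical helper: wait for the SiteConfig controller Deployment to become
# Available, then check that the Assisted Installer template ConfigMaps used
# by the ClusterInstance in this guide exist. The Deployment name is an
# assumption inferred from the pod name shown above.
wait_for_siteconfig() {
  local ns="$1" timeout="${2:-300s}" cm
  oc -n "$ns" wait --for=condition=Available \
    deployment/siteconfig-controller-manager --timeout="$timeout" || return 1
  for cm in ai-cluster-templates-v1 ai-node-templates-v1; do
    if ! oc -n "$ns" get configmap "$cm" >/dev/null 2>&1; then
      echo "missing template ConfigMap: ${ns}/${cm}" >&2
      return 1
    fi
  done
  echo "SiteConfig controller and templates are ready in ${ns}"
}
```

For example, `wait_for_siteconfig "${RHACM_NAMESPACE}"`.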

Configure ArgoCD

Because this guide assumes ArgoCD as the tool that continuously deploys from Git, the next step is to configure the GitOps operator in the hub cluster (see the upstream dev docs).

Create ArgoCD AppProject

cat << EOF | oc apply -f -
---
apiVersion: argoproj.io/v1alpha1
kind: AppProject
metadata:
  name: siteconfig-v2
  namespace: openshift-gitops
spec:
  clusterResourceWhitelist:
    - group: ""
      kind: Namespace
  namespaceResourceWhitelist:
    - group: ""
      kind: ConfigMap
    - group: ""
      kind: Secret
    - group: siteconfig.open-cluster-management.io
      kind: ClusterInstance
  sourceRepos:
    - '*'
  destinations:
    - namespace: '*'
      server: '*'
EOF

As noted in the upstream dev docs, at a minimum the ClusterInstance, Namespace, ConfigMap, and Secret kinds should be added to the respective resource whitelists. If your ArgoCD App needs to manage any additional or custom resources, this is the place to specify them.
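Before pushing new content to the clusters folder, it can help to confirm that every top-level kind rendered by Kustomize is covered by these whitelists, because ArgoCD refuses to sync resources that the AppProject does not permit. The hypothetical helper below compares kinds only, ignoring API groups and the cluster-scoped versus namespaced split. Treat it as a quick heuristic rather than an exact replica of ArgoCD's check.

```shell
# Hypothetical pre-commit check: print top-level kinds rendered by Kustomize
# that are NOT in the AppProject whitelists above. Only the kind is compared
# (not the API group), which is a simplification.
ALLOWED_KINDS="Namespace ConfigMap Secret ClusterInstance"

non_whitelisted_kinds() {
  grep -E '^kind:[[:space:]]' | awk '{print $2}' | sort -u |
    while read -r kind; do
      case " $ALLOWED_KINDS " in
        *" $kind "*) ;;          # whitelisted, nothing to report
        *) echo "$kind" ;;       # not permitted by the AppProject above
      esac
    done
}
```

For example, `oc kustomize clusters/sno3 | non_whitelisted_kinds`. Empty output means every rendered kind is allowed. Manifests embedded inside the extra-manifests ConfigMap are indented, so they are correctly ignored.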

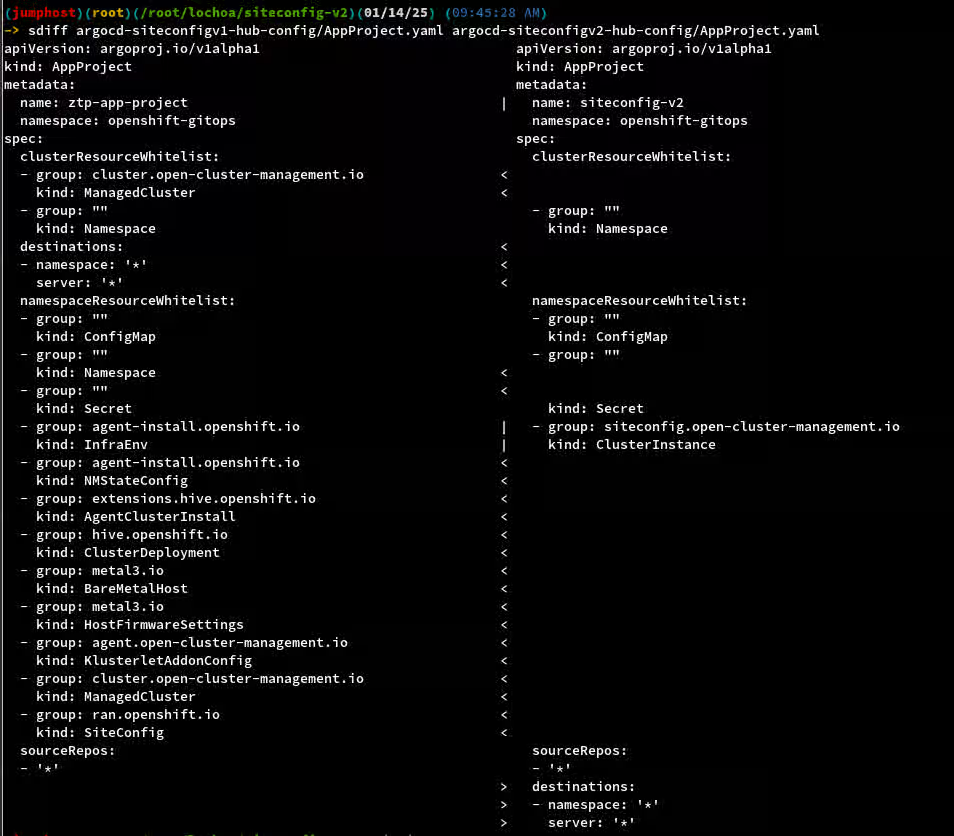

For those curious about the differences between the previous AppProject and the new one, a side-by-side diff (sdiff) of the two is also provided as a complement.

NOTE: Deployment objects (e.g., AgentClusterInstall, BareMetalHost) are now monitored directly by the SiteConfig controller and do not need to be specified in ArgoCD's AppProject.

Analyze existing ArgoCD Permissions

When the GitOps ZTP approach was initially configured, a pre-configured ServiceAccount and ClusterRoleBinding were set up by default to grant RBAC permissions to ArgoCD. Below, we quickly inspect the existing ones in the hub cluster.

-> oc get ClusterRoleBinding gitops-cluster -oyaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  creationTimestamp: "2023-11-14T19:09:34Z"
  name: gitops-cluster
  resourceVersion: "711074"
  uid: c01afe9b-e1c0-4616-a5c6-c910b0a9c890
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: openshift-gitops-argocd-application-controller
  namespace: openshift-gitops

-> oc -n openshift-gitops get ServiceAccount openshift-gitops-argocd-application-controller -oyaml
apiVersion: v1
kind: ServiceAccount
metadata:
  creationTimestamp: "2023-11-14T18:40:59Z"
  labels:
    app.kubernetes.io/managed-by: openshift-gitops
    app.kubernetes.io/name: argocd-application-controller
    app.kubernetes.io/part-of: argocd
  name: openshift-gitops-argocd-application-controller
  namespace: openshift-gitops
  ownerReferences:
  - apiVersion: argoproj.io/v1alpha1
    blockOwnerDeletion: true
    controller: true
    kind: ArgoCD
    name: openshift-gitops
    uid: 3c6d40c0-959b-497f-be6e-6901224e84d5
  resourceVersion: "236783345"
  uid: 160f9639-97f6-4db5-8c13-e3903a267867

As shown, the existing ClusterRoleBinding and ServiceAccount resources can be reused in the migrated scenario.

NOTE: Optionally, you can create a custom ClusterRole with fewer privileges (see the upstream dev docs), but for this migration use case the existing ones are good to go.
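To confirm that the reused binding still covers what the new App syncs, oc auth can-i can impersonate the application controller ServiceAccount. The sketch below checks create permissions for the whitelisted kinds. The helper name is hypothetical, and impersonation (--as) relies on the cluster-admin privileges listed in the prerequisites.

```shell
# Hypothetical helper: verify that the ArgoCD application controller
# ServiceAccount may create the kinds synced by the new App.
ARGOCD_SA="system:serviceaccount:openshift-gitops:openshift-gitops-argocd-application-controller"

check_argocd_rbac() {
  local ns="$1" res rc=0
  for res in namespaces configmaps secrets \
             clusterinstances.siteconfig.open-cluster-management.io; do
    # "oc auth can-i" exits 0 for "yes" and non-zero for "no".
    if oc auth can-i create "$res" -n "$ns" --as="$ARGOCD_SA" >/dev/null 2>&1; then
      echo "OK      create ${res}"
    else
      echo "DENIED  create ${res}"
      rc=1
    fi
  done
  return "$rc"
}
```

For example, `check_argocd_rbac sno3`.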

Configure Access to the Git Repository

Once the new ArgoCD AppProject is created, access to the Git repository must also be configured for that specific project. To that end, you can use the ArgoCD CLI or the UI. Both approaches are briefly described in the upstream dev docs.

Create an ArgoCD App pointing to the clusters folder in Git

cat << EOF | oc apply -f -
---
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: clusters
  namespace: openshift-gitops
spec:
  destination:
    namespace: clusters-sub
    server: https://kubernetes.default.svc
  project: siteconfig-v2
  source:
    path: clusters
    repoURL: http://jumphost.inbound.bos2.lab:3000/kni/faredge-ztp.git
    targetRevision: master
  syncPolicy:
    automated:
      selfHeal: true
EOF

IMPORTANT NOTE: When migrating, AVOID setting .syncPolicy.automated.prune to true in the ArgoCD App definition (as specified upstream). Otherwise, the GitOps operator will cause a full reinstall of the cluster when the ClusterInstance CRs are subsequently reconciled.
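A small guard can catch an App that was created, or later edited, with pruning enabled. The sketch below reads .spec.syncPolicy.automated.prune together with the standard .status.sync.status and .status.health.status fields of the Application. The helper name is hypothetical.

```shell
# Hypothetical guard: fail if automated pruning is enabled on an ArgoCD App,
# otherwise report its sync/health status.
check_app_safe() {
  local ns="$1" app="$2" prune status
  prune=$(oc -n "$ns" get applications.argoproj.io "$app" \
    -o jsonpath='{.spec.syncPolicy.automated.prune}')
  if [ "$prune" = "true" ]; then
    echo "${app}: .spec.syncPolicy.automated.prune is true, disable it before migrating" >&2
    return 1
  fi
  status=$(oc -n "$ns" get applications.argoproj.io "$app" \
    -o jsonpath='{.status.sync.status}/{.status.health.status}')
  echo "${app}: prune=${prune:-unset} sync/health=${status}"
}
```

For example, `check_app_safe openshift-gitops clusters`.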

Migrate Managed Clusters

At this point, the ClusterInstance CRs committed to Git should have been deployed to the hub cluster by the ArgoCD App. Next, check that the target ManagedCluster(s) were effectively migrated to the newly deployed SiteConfig controller.

As shown below, the ClusterInstance CR is new (39 seconds old in this example), but the cluster itself was not reinstalled or redeployed.

-> oc -n ${TARGET_CLUSTER} get clusterinstances ${TARGET_CLUSTER}
NAME   PROVISIONSTATUS   PROVISIONDETAILS         AGE
sno3   Completed         Provisioning completed   39s

NOTE: Thanks to the relaxation of the upstream createOrPatch function, we can now manage already-installed clusters through ClusterInstance CRs as if their resources had initially been rendered by the SiteConfig controller.

From now on, normal operations, such as adjusting labels on the ManagedCluster(s) to bind them to new policies, are expected and do not redeploy the cluster. A redeployment should only be possible if the deploymentGeneration field is changed.

As a complement, let’s also take a look at the .status.manifestsRendered field to validate that all deployment resources were properly rendered by the controller.

-> oc -n ${TARGET_CLUSTER} get clusterinstances ${TARGET_CLUSTER} -ojson | jq .status.manifestsRendered
[
  {
    "apiGroup": "extensions.hive.openshift.io/v1beta1",
    "kind": "AgentClusterInstall",
    "lastAppliedTime": "2025-01-13T11:10:52Z",
    "name": "sno3",
    "namespace": "sno3",
    "status": "rendered",
    "syncWave": 1
  },
  {
    "apiGroup": "metal3.io/v1alpha1",
    "kind": "BareMetalHost",
    "lastAppliedTime": "2025-01-13T11:10:53Z",
    "name": "sno3.inbound.bos2.lab",
    "namespace": "sno3",
    "status": "rendered",
    "syncWave": 1
  },
  {
    "apiGroup": "hive.openshift.io/v1",
    "kind": "ClusterDeployment",
    "lastAppliedTime": "2025-01-13T11:10:53Z",
    "name": "sno3",
    "namespace": "sno3",
    "status": "rendered",
    "syncWave": 1
  },
  {
    "apiGroup": "agent-install.openshift.io/v1beta1",
    "kind": "InfraEnv",
    "lastAppliedTime": "2025-01-13T11:10:53Z",
    "name": "sno3",
    "namespace": "sno3",
    "status": "rendered",
    "syncWave": 1
  },
  {
    "apiGroup": "agent-install.openshift.io/v1beta1",
    "kind": "NMStateConfig",
    "lastAppliedTime": "2025-01-13T11:10:53Z",
    "name": "sno3.inbound.bos2.lab",
    "namespace": "sno3",
    "status": "rendered",
    "syncWave": 1
  },
  {
    "apiGroup": "agent.open-cluster-management.io/v1",
    "kind": "KlusterletAddonConfig",
    "lastAppliedTime": "2025-01-13T11:10:53Z",
    "name": "sno3",
    "namespace": "sno3",
    "status": "rendered",
    "syncWave": 2
  },
  {
    "apiGroup": "cluster.open-cluster-management.io/v1",
    "kind": "ManagedCluster",
    "lastAppliedTime": "2025-01-13T11:10:53Z",
    "name": "sno3",
    "status": "rendered",
    "syncWave": 2
  }
]
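The same check can be scripted. Based on the outputs in this guide, a healthy migrated cluster reports "rendered" for every entry, while the failure described under Common Pitfalls shows "failed" and "validated" entries. The hypothetical helper below flags any entry whose status is not "rendered", and it also fails when the list is still empty.

```shell
# Hypothetical helper: print every .status.manifestsRendered entry whose
# status is not "rendered"; succeed only when all entries are rendered.
check_manifests_rendered() {
  local ns="$1" name="$2" entries bad
  entries=$(oc -n "$ns" get clusterinstances.siteconfig.open-cluster-management.io "$name" \
    -o jsonpath='{range .status.manifestsRendered[*]}{.kind}{" "}{.status}{"\n"}{end}')
  if [ -z "$entries" ]; then
    echo "no manifestsRendered entries yet for ${ns}/${name}"
    return 1
  fi
  bad=$(printf '%s\n' "$entries" | awk 'NF && $2 != "rendered"')
  if [ -n "$bad" ]; then
    printf '%s\n' "$bad"
    return 1
  fi
  echo "all manifests rendered for ${ns}/${name}"
}
```

For example, `check_manifests_rendered "${TARGET_CLUSTER}" "${TARGET_CLUSTER}"`.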

Finally, the SiteConfig controller logs can also be used to validate the proper rendering of the deployment resources. The following command inspects and follows them:

-> oc -n ${RHACM_NAMESPACE} logs -l app.kubernetes.io/name=siteconfig-controller -fc manager
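When oc logs is used with a label selector, it only prints the last 10 lines per container unless --tail is set (for example --tail=-1). To summarize failures instead of following the stream, the hypothetical filter below counts "Failed to" log lines per resource. The message format is assumed from the sample controller logs shown under Common Pitfalls and may differ between versions.

```shell
# Hypothetical filter: count SiteConfig controller log lines that report a
# failure, grouped by the "<Kind>:<namespace>/<name>" token after "resource".
siteconfig_failures() {
  grep 'Failed to ' | awk '{
    for (i = 1; i < NF; i++)
      if ($i == "resource") { print $(i + 1); break }
  }' | sort | uniq -c
}
```

For example, `oc -n ${RHACM_NAMESPACE} logs -l app.kubernetes.io/name=siteconfig-controller -c manager --tail=-1 | siteconfig_failures`.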

Migrate Policies

Note on Article Scope: This guide does NOT cover the handling of Policies. As of this writing, policies in GitOps ZTP environments are still generated by PolicyGenerator plugins (i.e., created from the PGT resources) and later managed by CGU objects and the TALM operator.

Cleanup old artifacts

The following artifacts should (recommended) or could (optional) be safely removed now that the new SiteConfig operator is in place.

Recommended

- Previous ArgoCD AppProject for the deprecated SiteConfig CRs (should already have been removed in the prerequisites step).
- Previous ArgoCD App for the deprecated SiteConfig CRs (should already have been removed in the prerequisites step).

Optional

- Previous Git folder storing the deprecated SiteConfig CRs.
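The following sketch confirms the recommended cleanup. It checks that the previous AppProject (ztp-app-project in this guide) no longer exists and that no remaining Application still references it. The helper name is hypothetical.

```shell
# Hypothetical check: confirm that the previous ArgoCD AppProject is gone and
# that no remaining Application still references it.
check_old_argocd_objects() {
  local ns="$1" old_project="$2" leftovers
  if oc -n "$ns" get appprojects.argoproj.io "$old_project" >/dev/null 2>&1; then
    echo "AppProject ${old_project} still exists"
    return 1
  fi
  leftovers=$(oc -n "$ns" get applications.argoproj.io \
    -o jsonpath='{range .items[*]}{.metadata.name}{" "}{.spec.project}{"\n"}{end}' \
    | awk -v p="$old_project" '$2 == p {print $1}')
  if [ -n "$leftovers" ]; then
    echo "Applications still using ${old_project}: ${leftovers}"
    return 1
  fi
  echo "no leftovers for ${old_project}"
}
```

For example, `check_old_argocd_objects openshift-gitops ztp-app-project`.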

FAQs

This is a list of frequently asked questions by users.

Is the new approach backward-compatible with previous SiteConfigs?

The new SiteConfig controller only reconciles changes to ClusterInstance objects (i.e., the ClusterInstance kind in the siteconfig.open-cluster-management.io/v1alpha1 group). Therefore, deprecated SiteConfig objects (i.e., the SiteConfig kind in the ran.openshift.io/v1 group) are neither monitored nor managed by the SiteConfig operator in any way.

Can both methods co-exist in the same Hub cluster?

It is strongly recommended to configure only one method in the hub cluster, namely the new ClusterInstance abstraction. Having both methods at the same time is not only discouraged but could also cause uncontrolled redeployments of ManagedCluster(s), because both approaches will try to update the cluster's deployment manifests during their reconciliation loops.

Is there a supported tool to translate from SiteConfig CRs to the new ClusterInstance CRs?

As of this writing, there is no plan to support a tool that automatically translates the deprecated SiteConfig CRs into the newly supported ClusterInstance CRs. Therefore, users must carefully port the information from the SiteConfig CRs into the new ClusterInstance API.

Can all of the Kustomize plugins be entirely removed from ArgoCD?

Short answer: NO, not yet. The SiteGen ArgoCD plugin is no longer needed once your environment has been fully transitioned to ClusterInstance (i.e., the SiteConfig operator).

However, as of this writing, policies in GitOps ZTP environments are still managed by a combination of the PolicyGen or PolicyGenerator plugins and the TALM operator. Specifically, the PolicyGen plugin is still needed if policies are managed with PolicyGenTemplate CRs in Git, whereas the PolicyGenerator plugin (from ACM) is needed if PolicyGenerator CRs are used in Git.

Common Pitfalls

Possible snags and pitfalls that users may encounter while migrating to the new approach.

Failed to validate rendered manifests using dry-run validation

Symptoms

# siteconfig controller logs
2024-12-12T13:43:33.585Z DEBUG ClusterInstanceController.validateRenderedManifests controller/clusterinstance_controller.go:520 Change detected in resource AgentClusterInstall:sno3/sno3, updating it {"name": "sno3", "namespace": "sno3"}
2024-12-12T13:43:33.614Z INFO ClusterInstanceController.validateRenderedManifests controller/clusterinstance_controller.go:524 Failed to update resource AgentClusterInstall:sno3/sno3 {"name": "sno3", "namespace": "sno3"}

-> oc -n sno3 get clusterinstances.siteconfig.open-cluster-management.io sno3 -ojson | jq .status.manifestsRendered
[
  {
    "apiGroup": "extensions.hive.openshift.io/v1beta1",
    "kind": "AgentClusterInstall",
    "lastAppliedTime": "2024-12-12T14:17:39Z",
    "message": "admission webhook \"agentclusterinstallvalidators.admission.agentinstall.openshift.io\" denied the request: Attempted to change AgentClusterInstall.Spec which is immutable after install started, except for ClusterMetadata,IgnitionEndpoint fields. Unsupported change: \n\tManifestsConfigMapRefs: ([] => [{Name:extra-manifests-cm}])",
    "name": "sno3",
    "namespace": "sno3",
    "status": "failed",
    "syncWave": 1
  },
  {
    "apiGroup": "metal3.io/v1alpha1",
    "kind": "BareMetalHost",
    "lastAppliedTime": "2024-12-12T14:17:39Z",
    "name": "sno3.inbound.bos2.lab",
    "namespace": "sno3",
    "status": "validated",
    "syncWave": 1
  },
  {
    "apiGroup": "hive.openshift.io/v1",
    "kind": "ClusterDeployment",
    "lastAppliedTime": "2024-12-12T14:17:39Z",
    "name": "sno3",
    "namespace": "sno3",
    "status": "validated",
    "syncWave": 1
  },
  {
    "apiGroup": "agent-install.openshift.io/v1beta1",
    "kind": "InfraEnv",
    "lastAppliedTime": "2024-12-12T14:17:39Z",
    "name": "sno3",
    "namespace": "sno3",
    "status": "validated",
    "syncWave": 1
  },
  {
    "apiGroup": "agent-install.openshift.io/v1beta1",
    "kind": "NMStateConfig",
    "lastAppliedTime": "2024-12-12T14:17:39Z",
    "name": "sno3.inbound.bos2.lab",
    "namespace": "sno3",
    "status": "validated",
    "syncWave": 1
  },
  {
    "apiGroup": "agent.open-cluster-management.io/v1",
    "kind": "KlusterletAddonConfig",
    "lastAppliedTime": "2024-12-12T14:17:39Z",
    "name": "sno3",
    "namespace": "sno3",
    "status": "validated",
    "syncWave": 2
  },
  {
    "apiGroup": "cluster.open-cluster-management.io/v1",
    "kind": "ManagedCluster",
    "lastAppliedTime": "2024-12-12T14:17:39Z",
    "name": "sno3",
    "status": "validated",
    "syncWave": 2
  }
]

Solution

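A new extra-manifests ConfigMap reference (extra-manifests-cm) that was not part of the original deployment had been accidentally added. Because AgentClusterInstall.Spec is immutable once the installation has started, the admission webhook rejected the change. Removing the reference unblocked the rendering workflow of the SiteConfig controller.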
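To avoid this pitfall, you can list the extra-manifests ConfigMaps already referenced by an installed AgentClusterInstall before referencing any in a migrated ClusterInstance. The sketch below reads .spec.manifestsConfigMapRefs, the field named in the webhook message above, and reports expected names that are not yet referenced. The helper names are hypothetical.

```shell
# Hypothetical helper: print the ConfigMap names already referenced by an
# installed AgentClusterInstall (its spec is immutable after install starts).
aci_manifest_refs() {
  oc -n "$1" get agentclusterinstalls.extensions.hive.openshift.io "$2" \
    -o jsonpath='{range .spec.manifestsConfigMapRefs[*]}{.name}{"\n"}{end}' \
    | sed '/^$/d'
}

# Succeed only if every expected ConfigMap name is already referenced.
check_extra_manifest_refs() {
  local ns="$1" aci="$2" current name rc=0
  shift 2
  current=$(aci_manifest_refs "$ns" "$aci")
  for name in "$@"; do
    if ! printf '%s\n' "$current" | grep -qxF "$name"; then
      echo "not referenced by the installed AgentClusterInstall: ${name}"
      rc=1
    fi
  done
  return "$rc"
}
```

For example, `check_extra_manifest_refs sno3 sno3 extra-manifests-cm`. A failure suggests that adding this reference to the ClusterInstance would hit the immutable-spec error shown above.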