How to use ARM platform hardware in OpenShift 4.19 and above for Telco RDS

ARM based Telco RDS is Generally Available for OpenShift Container Platform 4.20

ARM has been added to the RDS for 4.20. Official documentation is provided here.

ARM Tech Preview for 4.19

The remainder of this article covers ARM on 4.19, which was considered Technical Preview. For 4.20 and above, refer to the official documentation referenced above.

About Developer Preview features

Developer Preview features are not supported with Red Hat production service level agreements (SLAs) and are not functionally complete. Red Hat does not advise using them in a production setting. Developer Preview features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process. These releases may not have any documentation, and testing is limited. Red Hat may provide ways to submit feedback on Developer Preview releases without an associated SLA. All information is subject to change on release.

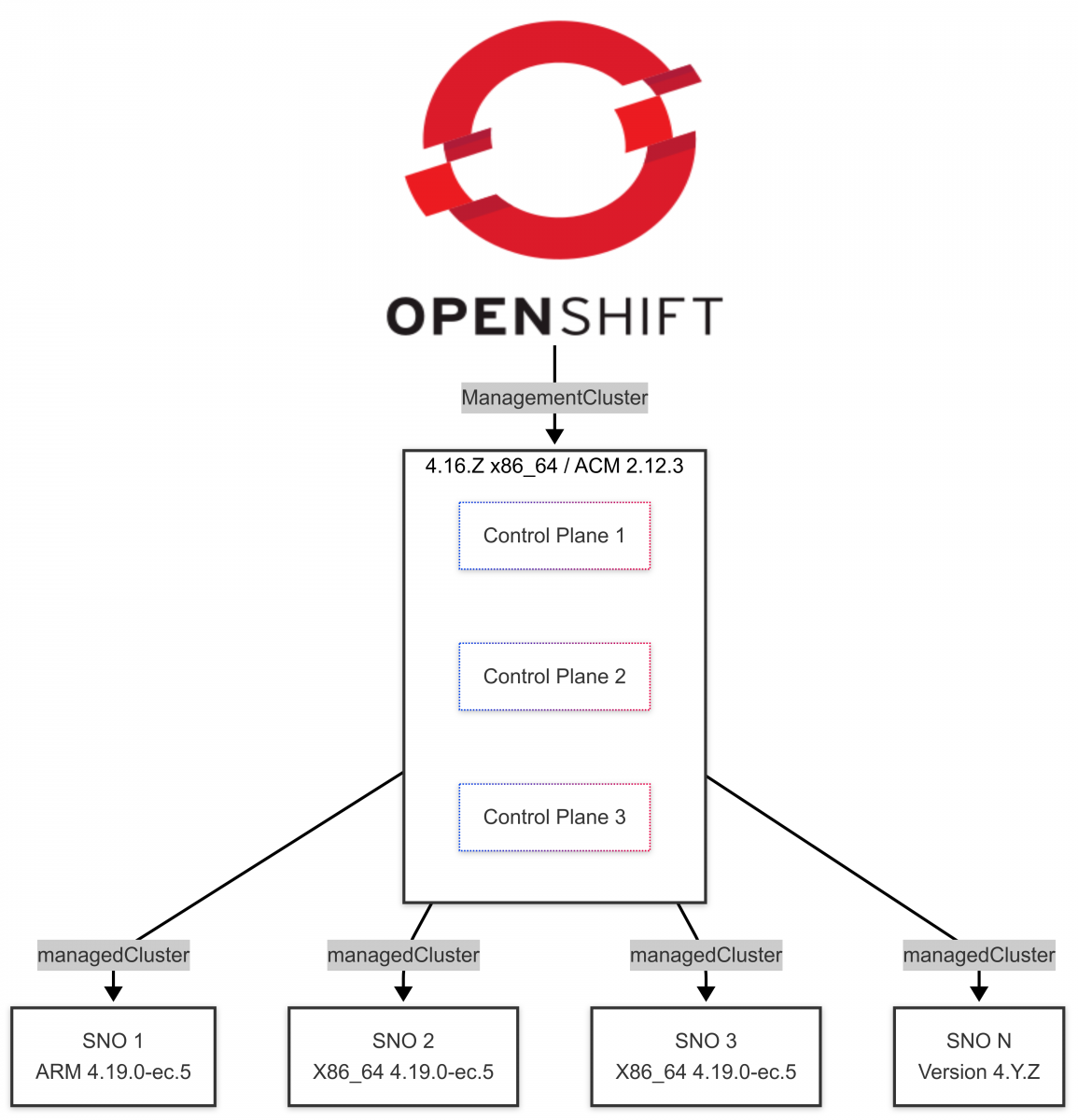

Hub Environment

The environment consists of a hub x86_64 cluster running OpenShift 4.16.Z and Advanced Cluster Management. It uses the Red Hat OpenShift GitOps and the Topology Aware Lifecycle Manager operators to deploy and manage clusters.

The following are the versions of the operators being used on the hub cluster:

| Operator | Version |
|---|---|
| advanced-cluster-management | v2.12.3 |
| multicluster-engine | v2.7.4 |
| openshift-gitops-operator | v1.15.0-0.1738074324.p |
| topology-aware-lifecycle-manager | v4.17.0 |

SNO Environment

The following are the versions of the operators being used in this ARM SNO deployment:

| Operator | Version | Supported | Validated | Target OCP |
|---|---|---|---|---|
| openshift-adp | v1.5.0 | Yes | Yes | |
| openshift-lifecycle-agent | v4.19.0 | No | No | 4.20 |
| openshift-local-storage | v4.19.0-202504282247 | Yes | Yes | |
| openshift-logging | v6.2.2 | Yes | Yes | |
| openshift-ptp | 4.19.0-202504282057 | Yes | Yes, OC only | |
| openshift-sriov-network-operator | 4.19.0-202504281747 | No | Yes | 4.20 |

ARM Deployment via ZTP workflow

From OpenShift Container Platform 4.19, ARM hardware can be used as the platform for Telco ZTP deployments. This article covers the deployment method for SiteConfigV2, but similar steps can be applied to SiteConfigV1.

Additionally, support for Image Based Install, Image Based Upgrade, and Image Based Break+Fix for ARM SNO deployments will be added once the Lifecycle Agent Operator becomes functional in OpenShift 4.20.

A ClusterImageSet (CIS) matching the ARM architecture must exist on the hub cluster from which the ARM server will be deployed and managed.

img4.19.0-ec.5-multi-appsub quay.io/openshift-release-dev/ocp-release:4.19.0-ec.5-multi

The CIS is specified in the ClusterInstance CR along with some template overrides. When the architectures of the hub and the spoke being deployed differ, the payload image above is used to locate the applicable ironic-agent image.

ARM Templates for SiteConfigV2

These two templates provide the required architecture definitions for the BareMetalHost and InfraEnv CRs and specify the ironic-agent image to use for the installation process. By default, the ManagedCluster uses the architecture of the hub cluster to determine which ironic-agent image to use.

NOTE: Starting with Advanced Cluster Management 2.14, there is no longer a need to manually create the ConfigMaps required in the next step. See the related issue for more details.

In addition to the architecture specifications, an assisted service ironic-agent image must be specified when the deployment hub and the target spoke have differing hardware architectures. In this example, the hub cluster is on x86_64 hardware and the spoke is ARM.

# oc adm release info --image-for=ironic-agent quay.io/openshift-release-dev/ocp-release:4.19.0-ec.5-multi

quay.io/openshift-release-dev/ocp-v4.0-art-dev@sha256:9f35628db5c01a9de3b892939a9a52d8e2ff18ab5efaf1a76f24092a917de1f2
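The lookup above can also be scripted so that the resulting pull spec feeds directly into the InfraEnv annotation used later in the cluster template. The following is a minimal sketch, not part of the official workflow: the function names are hypothetical, and it assumes `oc` is on the PATH and able to reach the release image.

```python
import subprocess

# Release payload and annotation key as they appear in this article.
RELEASE_IMAGE = "quay.io/openshift-release-dev/ocp-release:4.19.0-ec.5-multi"
ANNOTATION_KEY = "infraenv.agent-install.openshift.io/ironic-agent-image-override"


def release_info_cmd(release_image: str) -> list[str]:
    """Build the `oc adm release info` command that prints the ironic-agent pull spec."""
    return ["oc", "adm", "release", "info", "--image-for=ironic-agent", release_image]


def ironic_agent_annotation(pull_spec: str) -> dict[str, str]:
    """Return the InfraEnv annotation that overrides the ironic-agent image."""
    pull_spec = pull_spec.strip()
    if not pull_spec:
        raise ValueError("empty ironic-agent pull spec")
    return {ANNOTATION_KEY: pull_spec}


def lookup_ironic_agent_image(release_image: str) -> str:
    """Run `oc` and return the pull spec it prints (hypothetical helper)."""
    result = subprocess.run(
        release_info_cmd(release_image), check=True, capture_output=True, text=True
    )
    return result.stdout.strip()
```

Calling `ironic_agent_annotation(lookup_ironic_agent_image(RELEASE_IMAGE))` on a host with `oc` available would yield the annotation key and value to place in the InfraEnv template.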

ARM cluster template

# oc -n open-cluster-management get cm ai-cluster-templates-aarch64-v1 -o yaml
apiVersion: v1
data:
  AgentClusterInstall: |-
    apiVersion: extensions.hive.openshift.io/v1beta1
    kind: AgentClusterInstall
    metadata:
      name: "{{ .Spec.ClusterName }}"
      namespace: "{{ .Spec.ClusterName }}"
      annotations:
    {{ if .SpecialVars.InstallConfigOverrides }}
        agent-install.openshift.io/install-config-overrides: '{{ .SpecialVars.InstallConfigOverrides }}'
    {{ end }}
        siteconfig.open-cluster-management.io/sync-wave: "1"
    spec:
      clusterDeploymentRef:
        name: "{{ .Spec.ClusterName }}"
      holdInstallation: {{ .Spec.HoldInstallation }}
      imageSetRef:
        name: "{{ .Spec.ClusterImageSetNameRef }}"
    {{ if .Spec.ApiVIPs }}
      apiVIPs:
    {{ .Spec.ApiVIPs | toYaml | indent 4 }}
    {{ end }}
    {{ if .Spec.IngressVIPs }}
      ingressVIPs:
    {{ .Spec.IngressVIPs | toYaml | indent 4 }}
    {{ end }}
      networking:
    {{ if .Spec.ClusterNetwork }}
        clusterNetwork:
    {{ .Spec.ClusterNetwork | toYaml | indent 6 }}
    {{ end }}
    {{ if .Spec.MachineNetwork }}
        machineNetwork:
    {{ .Spec.MachineNetwork | toYaml | indent 6 }}
    {{ end }}
    {{ if .Spec.ServiceNetwork }}
        serviceNetwork:
    {{ $serviceNetworks := list }}
    {{ range .Spec.ServiceNetwork }}
    {{ $serviceNetworks = append $serviceNetworks .CIDR }}
    {{ end }}
    {{ $serviceNetworks | toYaml | indent 6 }}
    {{ end }}
      provisionRequirements:
        controlPlaneAgents: {{ .SpecialVars.ControlPlaneAgents }}
        workerAgents: {{ .SpecialVars.WorkerAgents }}
    {{ if .Spec.Proxy }}
      proxy:
    {{ .Spec.Proxy | toYaml | indent 4 }}
    {{ end }}
    {{ if .Spec.PlatformType }}
      platformType: "{{ .Spec.PlatformType }}"
    {{ end }}
      sshPublicKey: "{{ .Spec.SSHPublicKey }}"
    {{ if gt (len .Spec.ExtraManifestsRefs) 0 }}
      manifestsConfigMapRefs:
    {{ .Spec.ExtraManifestsRefs | toYaml | indent 4 }}
    {{ end }}
  ClusterDeployment: |-
    apiVersion: hive.openshift.io/v1
    kind: ClusterDeployment
    metadata:
      name: "{{ .Spec.ClusterName }}"
      namespace: "{{ .Spec.ClusterName }}"
      annotations:
        siteconfig.open-cluster-management.io/sync-wave: "1"
    spec:
      baseDomain: "{{ .Spec.BaseDomain }}"
      clusterInstallRef:
        group: extensions.hive.openshift.io
        kind: AgentClusterInstall
        name: "{{ .Spec.ClusterName }}"
        version: v1beta1
      clusterName: "{{ .Spec.ClusterName }}"
      platform:
        agentBareMetal:
          agentSelector:
            matchLabels:
              cluster-name: "{{ .Spec.ClusterName }}"
      pullSecretRef:
        name: "{{ .Spec.PullSecretRef.Name }}"
  InfraEnv: |-
    apiVersion: agent-install.openshift.io/v1beta1
    kind: InfraEnv
    metadata:
      annotations:
        # oc adm release info --image-for=ironic-agent quay.io/openshift-release-dev/ocp-release:4.19.0-ec.5-multi
        infraenv.agent-install.openshift.io/ironic-agent-image-override: quay.io/openshift-release-dev/ocp-v4.0-art-dev@sha256:9f35628db5c01a9de3b892939a9a52d8e2ff18ab5efaf1a76f24092a917de1f2 # Addition here
        siteconfig.open-cluster-management.io/sync-wave: "1"
      name: "{{ .Spec.ClusterName }}"
      namespace: "{{ .Spec.ClusterName }}"
    spec:
      clusterRef:
        name: "{{ .Spec.ClusterName }}"
        namespace: "{{ .Spec.ClusterName }}"
      cpuArchitecture: "aarch64" # Addition here
      sshAuthorizedKey: "{{ .Spec.SSHPublicKey }}"
    {{ if .Spec.Proxy }}
      proxy:
    {{ .Spec.Proxy | toYaml | indent 4 }}
    {{ end }}
      pullSecretRef:
        name: "{{ .Spec.PullSecretRef.Name }}"
      ignitionConfigOverride: '{{ .Spec.IgnitionConfigOverride }}'
      nmStateConfigLabelSelector:
        matchLabels:
          nmstate-label: "{{ .Spec.ClusterName }}"
      additionalNTPSources:
    {{ .Spec.AdditionalNTPSources | toYaml | indent 4 }}
  KlusterletAddonConfig: |-
    apiVersion: agent.open-cluster-management.io/v1
    kind: KlusterletAddonConfig
    metadata:
      annotations:
        siteconfig.open-cluster-management.io/sync-wave: "2"
      name: "{{ .Spec.ClusterName }}"
      namespace: "{{ .Spec.ClusterName }}"
    spec:
      clusterName: "{{ .Spec.ClusterName }}"
      clusterNamespace: "{{ .Spec.ClusterName }}"
      applicationManager:
        enabled: false
      certPolicyController:
        enabled: false
      iamPolicyController:
        enabled: false
      policyController:
        enabled: true
      searchCollector:
        enabled: false
  ManagedCluster: |-
    apiVersion: cluster.open-cluster-management.io/v1
    kind: ManagedCluster
    metadata:
      name: "{{ .Spec.ClusterName }}"
      annotations:
        siteconfig.open-cluster-management.io/sync-wave: "2"
      labels:
        cloud: auto-detect
        vendor: auto-detect
    spec:
      hubAcceptsClient: true
immutable: true
kind: ConfigMap
metadata:
  name: ai-cluster-templates-aarch64-v1
  namespace: open-cluster-management

ARM node template

# oc -n open-cluster-management get cm ai-node-templates-aarch64-v1 -o yaml
apiVersion: v1
data:
  BareMetalHost: |-
    apiVersion: metal3.io/v1alpha1
    kind: BareMetalHost
    metadata:
      name: "{{ .SpecialVars.CurrentNode.HostName }}"
      namespace: "{{ .Spec.ClusterName }}"
      annotations:
        siteconfig.open-cluster-management.io/sync-wave: "1"
        inspect.metal3.io: "{{ .SpecialVars.CurrentNode.IronicInspect }}"
    {{ if .SpecialVars.CurrentNode.NodeLabels }}
    {{ range $key, $value := .SpecialVars.CurrentNode.NodeLabels }}
        bmac.agent-install.openshift.io.node-label.{{ $key }}: {{ $value | quote}}
    {{ end }}
    {{ end }}
        bmac.agent-install.openshift.io/hostname: "{{ .SpecialVars.CurrentNode.HostName }}"
    {{ if .SpecialVars.CurrentNode.InstallerArgs }}
        bmac.agent-install.openshift.io/installer-args: '{{ .SpecialVars.CurrentNode.InstallerArgs }}'
    {{ end }}
    {{ if .SpecialVars.CurrentNode.IgnitionConfigOverride }}
        bmac.agent-install.openshift.io/ignition-config-overrides: '{{ .SpecialVars.CurrentNode.IgnitionConfigOverride }}'
    {{ end }}
        bmac.agent-install.openshift.io/role: "{{ .SpecialVars.CurrentNode.Role }}"
      labels:
        infraenvs.agent-install.openshift.io: "{{ .Spec.ClusterName }}"
    spec:
      bootMode: "{{ .SpecialVars.CurrentNode.BootMode }}"
      bmc:
        address: "{{ .SpecialVars.CurrentNode.BmcAddress }}"
        disableCertificateVerification: true
        credentialsName: "{{ .SpecialVars.CurrentNode.BmcCredentialsName.Name }}"
      bootMACAddress: "{{ .SpecialVars.CurrentNode.BootMACAddress }}"
      automatedCleaningMode: "{{ .SpecialVars.CurrentNode.AutomatedCleaningMode }}"
      online: true
    {{ if .SpecialVars.CurrentNode.RootDeviceHints }}
      rootDeviceHints:
    {{ .SpecialVars.CurrentNode.RootDeviceHints | toYaml | indent 4 }}
    {{ end }}
      architecture: "aarch64" # Addition here
  NMStateConfig: |-
    {{ if .SpecialVars.CurrentNode.NodeNetwork }}
    apiVersion: agent-install.openshift.io/v1beta1
    kind: NMStateConfig
    metadata:
      annotations:
        siteconfig.open-cluster-management.io/sync-wave: "1"
      name: "{{ .SpecialVars.CurrentNode.HostName }}"
      namespace: "{{ .Spec.ClusterName }}"
      labels:
        nmstate-label: "{{ .Spec.ClusterName }}"
    spec:
      config:
    {{ .SpecialVars.CurrentNode.NodeNetwork.NetConfig | toYaml | indent 4}}
      interfaces:
    {{ .SpecialVars.CurrentNode.NodeNetwork.Interfaces | toYaml | indent 4 }}
    {{ end }}
immutable: true
kind: ConfigMap
metadata:
  name: ai-node-templates-aarch64-v1
  namespace: open-cluster-management

Cluster Instance example

Once these two templates have been created, they can be used with a ClusterInstance CR. Note the CIS reference with the multi-architecture designation and the references to the two ARM templates created above.

# cat clusterinstance.yaml
# Ref: https://github.com/stolostron/siteconfig/blob/main/bundle/manifests/siteconfig.open-cluster-management.io_clusterinstances.yaml
---
apiVersion: siteconfig.open-cluster-management.io/v1alpha1
kind: ClusterInstance
metadata:
  name: "hp-arm1"
  namespace: "hp-arm1"
spec:
  additionalNTPSources:
    - "2600:52:7:x::y"
  baseDomain: "example.lab"
  clusterImageSetNameRef: "openshift-4.19.0-ec.5-multi" # ARM OCP image
  clusterName: "hp-arm1"
  clusterNetwork:
    - cidr: "fd01::/48"
      hostPrefix: 64
  cpuPartitioningMode: AllNodes
  extraLabels:
    ManagedCluster:
      common-disconnected: "true"
      common-418-arch: "arm"
  holdInstallation: false
  extraManifestsRefs:
    - name: ran-rds-manifests
  installConfigOverrides: |
    {
      "capabilities": {
        "baselineCapabilitySet": "None",
        "additionalEnabledCapabilities": [
          "NodeTuning",
          "OperatorLifecycleManager",
          "Ingress",
          "marketplace"
        ]
      }
    }
  machineNetwork:
    - cidr: "2600:52:7:97::/64"
  networkType: "OVNKubernetes"
  proxy:
    httpProxy: http://jumphost.example.lab:3128
    httpsProxy: http://jumphost.example.lab:3128
    noProxy: .hub.example.lab,9999,5050,6385,8089,6180,6185
  pullSecretRef:
    name: "assisted-deployment-pull-secret"
  serviceNetwork:
    - cidr: "fd02::/112"
  sshPublicKey: ""
  templateRefs:
    - name: ai-cluster-templates-aarch64-v1 # ARM cluster template
      namespace: open-cluster-management
  nodes:
    - automatedCleaningMode: "disabled"
      bmcAddress: "redfish-virtualmedia://192.168.x.y/redfish/v1/Systems/1" # -> HP-arm1 /Systems/1/
      bmcCredentialsName:
        name: "hp-arm1-worker"
      bootMACAddress: "88:e9:a4:d6:66:cc"
      bootMode: "UEFI"
      hostName: "hp-arm1.example.lab"
      nodeNetwork:
        interfaces:
          - name: "enP3p1s0f1np0"
            macAddress: "88:e9:a4:d6:66:cc"
        config:
          interfaces:
            - name: enP3p1s0f1np0
              type: ethernet
              state: up
              ipv4:
                address:
                  - ip: 192.168.x.y
                    prefix-length: 24
                enabled: true
                dhcp: false
              ipv6:
                address:
                  - ip: 2600:52:7:x::y
                    prefix-length: 64
                dhcp: false
                autoconf: false
                enabled: true
            - name: enP3p1s0f1np1
              type: ethernet
              state: down
              ipv4:
                enabled: false
                dhcp: false
              ipv6:
                dhcp: false
                autoconf: false
                enabled: false
          dns-resolver:
            config:
              server:
                - 2600:52:7:x::y
                - 192.168.x.y
          routes:
            config:
              - destination: '::/0'
                next-hop-address: '2600:52:7:x::1'
                next-hop-interface: enP3p1s0f1np0
              - destination: '0.0.0.0/0'
                next-hop-address: '192.168.x.1'
                next-hop-interface: enP3p1s0f1np0
      role: "master"
      rootDeviceHints:
        deviceName: "/dev/disk/by-path/pci-0007:02:00.0-nvme-1"
      templateRefs:
        - name: ai-node-templates-aarch64-v1 # ARM node template
          namespace: open-cluster-management

Applying this CR produces a cluster deployed by the ZTP assisted service and ready for the ARM policies to be applied.

# oc get clusterinstances.siteconfig.open-cluster-management.io -n hp-arm1 -o json | jq '.items[].status.deploymentConditions'
[
  {
    "lastProbeTime": "2025-04-29T13:06:00Z",
    "lastTransitionTime": "2025-04-09T14:34:32Z",
    "message": "The cluster installation stopped",
    "reason": "ClusterInstallationStopped",
    "status": "True",
    "type": "ClusterInstallRequirementsMet"
  },
  {
    "lastProbeTime": "2025-04-29T13:06:00Z",
    "lastTransitionTime": "2025-04-09T14:34:32Z",
    "message": "The installation has completed: Cluster is installed",
    "reason": "InstallationCompleted",
    "status": "True",
    "type": "ClusterInstallCompleted"
  },
  {
    "lastProbeTime": "2025-04-29T13:06:00Z",
    "lastTransitionTime": "2025-04-09T14:34:32Z",
    "message": "The installation has not failed",
    "reason": "InstallationNotFailed",
    "status": "False",
    "type": "ClusterInstallFailed"
  },
  {
    "lastProbeTime": "2025-04-29T13:06:00Z",
    "lastTransitionTime": "2025-04-09T14:34:32Z",
    "message": "The installation has stopped because it completed successfully",
    "reason": "InstallationCompleted",
    "status": "True",
    "type": "ClusterInstallStopped"
  }
]
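When many clusters are deployed, reading these conditions by eye becomes tedious. The sketch below shows one way the `deploymentConditions` array could be evaluated programmatically. It is an illustration only: the function names are hypothetical, the sample is abbreviated from the output above, and the rule "completed and not failed" is an assumption about what counts as success rather than a documented contract.

```python
import json

# Abbreviated copy of the deploymentConditions shown above (type and status only).
SAMPLE_CONDITIONS = """
[
  {"type": "ClusterInstallRequirementsMet", "status": "True"},
  {"type": "ClusterInstallCompleted", "status": "True"},
  {"type": "ClusterInstallFailed", "status": "False"},
  {"type": "ClusterInstallStopped", "status": "True"}
]
"""


def conditions_by_type(conditions: list[dict]) -> dict[str, dict]:
    """Index the condition list by its `type` field."""
    return {cond["type"]: cond for cond in conditions}


def install_succeeded(conditions: list[dict]) -> bool:
    """Assumed success rule: ClusterInstallCompleted is True and ClusterInstallFailed is not."""
    by_type = conditions_by_type(conditions)
    completed = by_type.get("ClusterInstallCompleted", {}).get("status") == "True"
    failed = by_type.get("ClusterInstallFailed", {}).get("status") == "True"
    return completed and not failed


if __name__ == "__main__":
    print(install_succeeded(json.loads(SAMPLE_CONDITIONS)))
```

In practice the JSON would come from the `oc get clusterinstances ... -o json` command above instead of the embedded sample.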

ARM Policies

ARM-based platforms require several changes to the normal x86_64 policies. ARM platforms prefer the 64k kernel in lieu of the real-time (RT) kernel. With the upcoming 4.19 Node Tuning Operator changes, the 64k kernel is specified in the performance profile.

Sample Performance Profile and Tuned Patch

- fileName: PerformanceProfile.yaml
  policyName: "config-policy"
  spec:
    additionalKernelArgs:
      - rcupdate.rcu_normal_after_boot=0
      - efi=runtime
      - iommu.passthrough=1
      # 7 - This option must NOT be set when using with the NVIDIA GPU operator
    cpu:
      isolated: 2-127
      reserved: 0-1
    hugepages:
      defaultHugepagesSize: 512M
      # 1 - ARM huge page
      pages:
        - size: 512M
          # 1 - ARM huge page
          count: 32
    machineConfigPoolSelector:
      pools.operator.machineconfiguration.openshift.io/master: ""
    nodeSelector:
      node-role.kubernetes.io/master: ""
    # To use the standard (non-realtime) kernel, set enabled to false
    realTimeKernel:
      enabled: false
      # 2 - Disabling RT in favor of 64K one
    kernelPageSize: 64k
    # 3 Enabled 64k kernel pagesize
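The pairing of a 64k kernel page size with 512M huge pages in this profile follows from the arm64 page table layout: a huge page mapped at the level above the base page covers one full table of base pages. The arithmetic can be sketched as below, assuming 8-byte page table entries; the function names are hypothetical and the code is only meant to illustrate the calculation.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB

# Assumption: each arm64 page table entry occupies 8 bytes.
PTE_SIZE_BYTES = 8


def pmd_huge_page_bytes(base_page_bytes: int) -> int:
    """Size of a huge page that spans one full page table of base pages."""
    entries_per_table = base_page_bytes // PTE_SIZE_BYTES
    return base_page_bytes * entries_per_table


def pool_bytes(count: int, huge_page_bytes: int) -> int:
    """Total memory reserved by `count` huge pages of the given size."""
    return count * huge_page_bytes


if __name__ == "__main__":
    for base in (4 * KIB, 64 * KIB):
        size = pmd_huge_page_bytes(base)
        print(f"{base // KIB}k base page: {size // MIB}M huge page")
    # The profile above requests 32 pages of 512M each.
    print(f"reserved: {pool_bytes(32, 512 * MIB) // GIB} GiB")
```

Under these assumptions a 4k base page yields a 2M huge page and a 64k base page yields 512M, so the 32 pages requested in the profile reserve 16 GiB on the node.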

Completed tuned policy changes are:

- fileName: TunedPerformancePatch.yaml
  policyName: "config-policy"
  spec:
    profile:
      - name: performance-patch
        data: |
          [main]
          summary=Configuration changes profile inherited from performance created tuned
          include=openshift-node-performance-openshift-node-performance-profile
          [sysctl]
          kernel.timer_migration=0
          # 4 - Kernel `timer_migration=0` to disable timer migration.
          [service]
          service.stalld=stop,disable
          # 5 - Changed to stop/disable stalld service
          service.chronyd=stop,disable

The deltas from the standard policies, numbered to match the comments in the samples above, are:

1. ARM huge page size.
2. Disabling RT in favor of the 64k kernel.
3. Enabled 64k kernel page size.
4. Kernel `timer_migration=0` to disable timer migration. This is required to get reasonable cyclic test results on the non-RT kernel and needs to be removed if testing on the RT kernel.
5. Changed to stop/disable the stalld service since it doesn't work with the 64k page size kernel.
6. References to the default CPU frequency driver are automatically updated from intel_pstate to the ARM-based cppc_cpufreq.
7. The option `iommu.passthrough=1` should not be set when using the NVIDIA GPU operator.

NOTE: To enable the 512M huge page size, the kernel page size must be set to 64k as illustrated above.

Currently, irqbalance crashes on 4.19 with the following error. A separate issue tracks the problem and its fix.

Apr 29 15:15:08 node.example.com irqbalance[27920]: IRQBALANCE_BANNED_CPUS is discarded, Use IRQBALANCE_BANNED_CPULIST instead

Apr 29 15:15:08 node.example.com irqbalance[27920]: IRQ 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 >

Apr 29 15:15:08 node.example.com systemd-coredump[27958]: Process 27920 (irqbalance) of user 0 dumped core.

Stack trace of thread 27920:

#0 0x0000ffff8c952568 __pthread_kill_implementation (libc.so.6 + 0x82568)

#1 0x0000ffff8c90aa7c raise (libc.so.6 + 0x3aa7c)

#2 0x0000ffff8c8f6fb4 abort (libc.so.6 + 0x26fb4)

#3 0x0000ffff8c9467d8 __libc_message (libc.so.6 + 0x767d8)

#4 0x0000ffff8c9ca7b8 __fortify_fail (libc.so.6 + 0xfa7b8)

#5 0x0000ffff8c9c8f04 __chk_fail (libc.so.6 + 0xf8f04)

#6 0x0000ffff8c9c873c __strcat_chk (libc.so.6 + 0xf873c)

#7 0x0000aaaacaa16d18 check_platform_device (irqbalance + 0x6d18)

#8 0x0000aaaacaa17238 init_irq_class_and_type (irqbalance + 0x7238)

#9 0x0000aaaacaa19ec8 build_object_tree (irqbalance + 0x9ec8)

#10 0x0000aaaacaa12a18 main (irqbalance + 0x2a18)

#11 0x0000ffff8c8f7280 __libc_start_call_main (libc.so.6 + 0x27280)

#12 0x0000ffff8c8f7358 __libc_start_main@@GLIBC_2.34 (libc.so.6 + 0x27358)

#13 0x0000aaaacaa13430 _start (irqbalance + 0x3430)

ELF object binary architecture: AARCH64

ARM RDS Cluster Compare

The cluster compare tool can be used to investigate the ARM cluster deployment and its associated policies. By default, it compares the cluster as if it were an x86_64 architecture.

The deployment is expected to conform to the RDS in order to receive support. Any deviations should be shared with and approved by Red Hat. This enables a fast track for ARM support as it becomes generally available with OpenShift 4.20.

The output reflects the policy changes from above.

Sample output:

**********************************

Cluster CR: performance.openshift.io/v2_PerformanceProfile_openshift-node-performance-profile

Reference File: required/node-tuning-operator/PerformanceProfile.yaml

Description:

https://docs.openshift.com/container-platform/4.18/scalability_and_performance/telco_ref_design_specs/ran/telco-ran-ref-du-components.html#telco-ran-node-tuning-operator_ran-ref-design-components

Diff Output: diff -u -N /tmp/MERGED-1429464385/performance-openshift-io-v2_performanceprofile_openshift-node-performance-profile /tmp/LIVE-2448102810/performance-openshift-io-v2_performanceprofile_openshift-node-performance-profile

--- /tmp/MERGED-1429464385/performance-openshift-io-v2_performanceprofile_openshift-node-performance-profile 2025-05-15 20:22:33.083328083 +0000

+++ /tmp/LIVE-2448102810/performance-openshift-io-v2_performanceprofile_openshift-node-performance-profile 2025-05-15 20:22:33.083328083 +0000

@@ -8,7 +8,7 @@

additionalKernelArgs:

- rcupdate.rcu_normal_after_boot=0

- efi=runtime

- - module_blacklist=irdma

+ - iommu.passthrough=1

cpu:

isolated: 2-127

reserved: 0-1

**********************************

Cluster CR: tuned.openshift.io/v1_Tuned_openshift-cluster-node-tuning-operator_performance-patch

Reference File: required/node-tuning-operator/TunedPerformancePatch.yaml

Description:

https://docs.openshift.com/container-platform/4.18/scalability_and_performance/telco_ref_design_specs/ran/telco-ran-ref-du-components.html#telco-ran-node-tuning-operator_ran-ref-design-components

Diff Output: diff -u -N /tmp/MERGED-1507483814/tuned-openshift-io-v1_tuned_openshift-cluster-node-tuning-operator_performance-patch /tmp/LIVE-300332996/tuned-openshift-io-v1_tuned_openshift-cluster-node-tuning-operator_performance-patch

--- /tmp/MERGED-1507483814/tuned-openshift-io-v1_tuned_openshift-cluster-node-tuning-operator_performance-patch 2025-05-15 20:22:33.119328352 +0000

+++ /tmp/LIVE-300332996/tuned-openshift-io-v1_tuned_openshift-cluster-node-tuning-operator_performance-patch 2025-05-15 20:22:33.119328352 +0000

@@ -5,17 +5,10 @@

namespace: openshift-cluster-node-tuning-operator

spec:

profile:

- - data: |

- [main]

- summary=Configuration changes profile inherited from performance created tuned

- include=openshift-node-performance-openshift-node-performance-profile

- [scheduler]

- group.ice-ptp=0:f:10:*:ice-ptp.*

- group.ice-gnss=0:f:10:*:ice-gnss.*

- group.ice-dplls=0:f:10:*:ice-dplls.*

- [service]

- service.stalld=start,enable

- service.chronyd=stop,disable

+ - data: "[main]\nsummary=Configuration changes profile inherited from performance

+ created tuned\ninclude=openshift-node-performance-openshift-node-performance-profile\n[sysctl]\nkernel.timer_migration=0\n[service]\nservice.stalld=stop,disable

+ # https://issues.redhat.com/browse/RHEL-40146\nservice.chronyd=stop,disable

+ \ \n"

name: performance-patch

recommend:

- machineConfigLabels:

**********************************

Cluster CR: v1_Namespace_openshift-ptp

Reference File: required/ptp-operator/PtpSubscriptionNS.yaml

Description:

https://docs.openshift.com/container-platform/4.18/scalability_and_performance/telco_ref_design_specs/ran/telco-ran-ref-du-components.html#telco-ran-ptp-operator_ran-ref-design-components

Diff Output: diff -u -N /tmp/MERGED-69102153/v1_namespace_openshift-ptp /tmp/LIVE-1744907916/v1_namespace_openshift-ptp

--- /tmp/MERGED-69102153/v1_namespace_openshift-ptp 2025-05-15 20:22:32.950327090 +0000

+++ /tmp/LIVE-1744907916/v1_namespace_openshift-ptp 2025-05-15 20:22:32.950327090 +0000

@@ -2,6 +2,7 @@

kind: Namespace

metadata:

annotations:

+ security.openshift.io/MinimallySufficientPodSecurityStandard: privileged

workload.openshift.io/allowed: management

labels:

openshift.io/cluster-monitoring: "true"

**********************************

Cluster CR: v1_Namespace_openshift-sriov-network-operator

Reference File: required/sriov-operator/SriovSubscriptionNS.yaml

Description:

https://docs.openshift.com/container-platform/4.18/scalability_and_performance/telco_ref_design_specs/ran/telco-ran-ref-du-components.html#telco-ran-sr-iov-operator_ran-ref-design-components

Diff Output: diff -u -N /tmp/MERGED-586270793/v1_namespace_openshift-sriov-network-operator /tmp/LIVE-3802692172/v1_namespace_openshift-sriov-network-operator

--- /tmp/MERGED-586270793/v1_namespace_openshift-sriov-network-operator 2025-05-15 20:22:32.952327105 +0000

+++ /tmp/LIVE-3802692172/v1_namespace_openshift-sriov-network-operator 2025-05-15 20:22:32.952327105 +0000

@@ -2,5 +2,6 @@

kind: Namespace

metadata:

annotations:

+ security.openshift.io/MinimallySufficientPodSecurityStandard: privileged

workload.openshift.io/allowed: management

name: openshift-sriov-network-operator

**********************************

Summary

CRs with diffs: 9/54

CRs in reference missing from the cluster: 2

machine-config:

Missing CRs:

- required/machine-config/sriov-related-kernel-arguments/07-sriov-related-kernel-args-master.yaml

Description:

https://docs.openshift.com/container-platform/4.18/scalability_and_performance/telco_ref_design_specs/ran/telco-ran-ref-du-components.html#telco-ran-machine-configuration_ran-ref-design-components

- required/machine-config/sriov-related-kernel-arguments/07-sriov-related-kernel-args-worker.yaml

Description:

https://docs.openshift.com/container-platform/4.18/scalability_and_performance/telco_ref_design_specs/ran/telco-ran-ref-du-components.html#telco-ran-machine-configuration_ran-ref-design-components

No CRs are unmatched to reference CRs

Metadata Hash: 84133948793621ede73a8992b7662cc5bff019e47cf68b6d236f6d2d8c6ba39d

No patched CRs

NOTE: The missing CRs above are expected because the Intel-based kernel arguments are not valid for an ARM-based platform.
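For repeated runs, the summary section of the text output can be checked automatically so that only unexpected gaps are flagged. The following is a rough sketch that parses the summary lines exactly as they appear in the sample output above. The function names and the allow-list fragment are hypothetical, and the text format of the tool may differ between versions.

```python
import re

# Summary lines copied from the sample output above.
SAMPLE_SUMMARY = """\
Summary
CRs with diffs: 9/54
CRs in reference missing from the cluster: 2
machine-config:
Missing CRs:
- required/machine-config/sriov-related-kernel-arguments/07-sriov-related-kernel-args-master.yaml
- required/machine-config/sriov-related-kernel-arguments/07-sriov-related-kernel-args-worker.yaml
No CRs are unmatched to reference CRs
"""


def parse_compare_summary(text: str) -> dict:
    """Extract the diff count, total CR count, and missing CR paths from the summary."""
    diffs = re.search(r"CRs with diffs:\s*(\d+)/(\d+)", text)
    missing = re.search(r"CRs in reference missing from the cluster:\s*(\d+)", text)
    paths = re.findall(r"^[ \t]*-[ \t]+(\S+\.yaml)[ \t]*$", text, flags=re.MULTILINE)
    return {
        "with_diffs": int(diffs.group(1)) if diffs else 0,
        "total": int(diffs.group(2)) if diffs else 0,
        "missing_count": int(missing.group(1)) if missing else 0,
        "missing_paths": paths,
    }


def unexpected_missing(paths: list[str], allowed_fragment: str) -> list[str]:
    """Return missing CR paths that do not contain the allow-listed fragment."""
    return [path for path in paths if allowed_fragment not in path]


if __name__ == "__main__":
    summary = parse_compare_summary(SAMPLE_SUMMARY)
    # Assumption: only the Intel-specific SR-IOV kernel argument CRs may be absent on ARM.
    print(unexpected_missing(summary["missing_paths"], "sriov-related-kernel-arguments"))
```

An empty result from `unexpected_missing` would indicate that the only missing CRs are the Intel-specific ones called out in the note above.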