Enabling VolumeCondition reporting for CephFS PersistentVolumeClaims - Developer preview

Introduction

To help users identify application problems caused by storage, the Ceph-CSI driver can report volume health for CephFS-backed PersistentVolumes. Applications and monitoring tools can then be informed when a volume is in an unhealthy condition. This feature is available as a Developer Preview with ODF 4.20.

Important: A Developer Preview feature is subject to Developer Preview support limitations. Developer Preview features are not intended to be run in production environments. Clusters deployed with Developer Preview features are considered development clusters and are not supported through the Red Hat Customer Portal case management system. Developer Preview features are meant for customers who are willing to evaluate new products or releases of products at an early stage of product development. If you need assistance with Developer Preview features, reach out to the ocs-devpreview@redhat.com mailing list and a member of the Red Hat Development Team will assist you as quickly as possible based on availability and work schedules. To learn more about the support scope, refer to the KCS article.

Requirements

- OpenShift Data Foundation 4.20

- CephFS PersistentVolumeClaims

Enabling VolumeCondition reporting

$ oc -n openshift-storage \
    annotate driver.csi.ceph.io/openshift-storage.cephfs.csi.ceph.com \
    addons.csi.ceph.io/volume-condition=true

driver.csi.ceph.io/openshift-storage.cephfs.csi.ceph.com annotated

Once the annotation is set, the following Pods are started:

$ oc -n openshift-storage get pods -l app=openshift-storage.cephfs.csi.ceph.com-nodeplugin-csi-addons

NAME READY STATUS RESTARTS AGE

openshift-storage.cephfs.csi.ceph.com-nodeplugin-csi-addon2g6ck 2/2 Running 0 11s

openshift-storage.cephfs.csi.ceph.com-nodeplugin-csi-addon7lm4f 2/2 Running 0 11s

openshift-storage.cephfs.csi.ceph.com-nodeplugin-csi-addon7vz67 2/2 Running 0 11s
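Rather than re-running the listing until every Pod shows Running, the check can be scripted. The following is a minimal sketch, not part of the documented procedure: `wait_for_addons_pods` is a hypothetical helper name and the 120s default timeout is an assumption. The namespace and label selector are copied from the command above.

```shell
# Sketch: block until every CSI-Addons node-plugin Pod reports Ready.
# wait_for_addons_pods is a hypothetical helper; the default timeout is an
# assumption and may need to be raised on slower clusters.
wait_for_addons_pods() {
    timeout="${1:-120s}"
    oc -n openshift-storage wait pod \
        -l app=openshift-storage.cephfs.csi.ceph.com-nodeplugin-csi-addons \
        --for=condition=Ready \
        --timeout="$timeout"
}
```

Call it as `wait_for_addons_pods` or `wait_for_addons_pods 5m`. Note that `oc wait` returns an error if no Pod matches the selector yet, so it may need to be retried shortly after annotating the driver.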

Workload example

The Volume Condition can only be checked while a Pod uses the PersistentVolumeClaim. This example creates a PersistentVolumeClaim with the standard ocs-storagecluster-cephfs StorageClass, and starts a Pod that acts like a web server.

Create the PersistentVolumeClaim:

$ oc create -f - << EOY
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: artifacts
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Gi
  storageClassName: ocs-storagecluster-cephfs
EOY

persistentvolumeclaim/artifacts created

Start a Pod that uses the PersistentVolumeClaim:

$ oc create -f - << EOY
---
apiVersion: v1
kind: Pod
metadata:
  name: builder
spec:
  containers:
    - name: sleep
      image: registry.redhat.io/ubi10/ubi
      command: ["sleep", "4h"]
      securityContext:
        allowPrivilegeEscalation: false
        capabilities:
          drop: ["ALL"]
        seccompProfile:
          type: RuntimeDefault
      volumeMounts:
        - name: data
          mountPath: /srv
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: artifacts
        readOnly: false
EOY

pod/builder created

Verify that the PersistentVolumeClaim has been created (status Bound) and that the builder Pod is running:

$ oc get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

artifacts Bound pvc-e2ec7646-eb28-4a88-8eb5-dfc37cf2111e 1Gi RWX ocs-storagecluster-cephfs <unset> 4m32s

$ oc get pod

NAME READY STATUS RESTARTS AGE

builder 1/1 Running 0 7s
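The same verification can be done without reading the tables by hand. This is a sketch under assumptions: `wait_for_workload` is a hypothetical helper name, the 120s timeouts are arbitrary, and the current project is assumed to be the one that holds the PersistentVolumeClaim and the Pod.

```shell
# Sketch: wait until the PVC is Bound and the Pod is Ready.
# wait_for_workload is a hypothetical helper; both timeouts are assumptions.
# Usage: wait_for_workload <pvc-name> <pod-name>
wait_for_workload() {
    pvc="$1"
    pod="$2"
    # A PVC exposes its state in .status.phase rather than as a condition,
    # so a JSONPath expression is used for the first wait.
    oc wait "pvc/$pvc" --for=jsonpath='{.status.phase}'=Bound --timeout=120s &&
        oc wait "pod/$pod" --for=condition=Ready --timeout=120s
}
```

For the example above this would be invoked as `wait_for_workload artifacts builder`.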

Create a small artifact and have a process generate some data every 30 seconds:

$ oc rsh builder

sh-5.2# while sleep 30 ; do date > /srv/latest ; sync /srv/latest ; cat /srv/latest ; done

Tue Nov 18 14:23:32 UTC 2025

Tue Nov 18 14:24:03 UTC 2025

Volume Condition reporting

The Volume Condition is reported as an Event for the PersistentVolumeClaim. When the condition changes, a new Event is generated.

Describing the PersistentVolumeClaim displays the Volume Condition as an Event at the bottom of the output:

$ oc describe pvc/artifacts

Name: artifacts

Namespace: default

StorageClass: ocs-storagecluster-cephfs

Status: Bound

Volume: pvc-e2ec7646-eb28-4a88-8eb5-dfc37cf2111e

Labels: <none>

Annotations: pv.kubernetes.io/bind-completed: yes

pv.kubernetes.io/bound-by-controller: yes

volume.beta.kubernetes.io/storage-provisioner: openshift-storage.cephfs.csi.ceph.com

volume.kubernetes.io/storage-provisioner: openshift-storage.cephfs.csi.ceph.com

Finalizers: [kubernetes.io/pvc-protection]

Capacity: 1Gi

Access Modes: RWX

VolumeMode: Filesystem

Used By: builder

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Provisioning 8m4s openshift-storage.cephfs.csi.ceph.com_openshift-storage.cephfs.csi.ceph.com-ctrlplugin-78849d946b78tn_ce6899eb-78ce-4a6a-bd21-abd35e499c1e External provisioner is provisioning volume for claim "default/artifacts"

Normal ExternalProvisioning 8m4s persistentvolume-controller Waiting for a volume to be created either by the external provisioner 'openshift-storage.cephfs.csi.ceph.com' or manually by the system administrator. If volume creation is delayed, please verify that the provisioner is running and correctly registered.

Normal ProvisioningSucceeded 8m3s openshift-storage.cephfs.csi.ceph.com_openshift-storage.cephfs.csi.ceph.com-ctrlplugin-78849d946b78tn_ce6899eb-78ce-4a6a-bd21-abd35e499c1e Successfully provisioned volume pvc-e2ec7646-eb28-4a88-8eb5-dfc37cf2111e

Normal VolumeConditionHealthy 29s (x2 over 3m29s) CSI-Addons

It is also possible to list all Events for the PersistentVolumeClaim:

$ oc get event --field-selector involvedObject.name=artifacts

LAST SEEN TYPE REASON OBJECT MESSAGE

9m19s Normal Provisioning persistentvolumeclaim/artifacts External provisioner is provisioning volume for claim "default/artifacts"

9m19s Normal ExternalProvisioning persistentvolumeclaim/artifacts Waiting for a volume to be created either by the external provisioner 'openshift-storage.cephfs.csi.ceph.com' or manually by the system administrator. If volume creation is delayed, please verify that the provisioner is running and correctly registered.

9m18s Normal ProvisioningSucceeded persistentvolumeclaim/artifacts Successfully provisioned volume pvc-e2ec7646-eb28-4a88-8eb5-dfc37cf2111e

104s Normal VolumeConditionHealthy persistentvolumeclaim/artifacts volume is in a healthy condition
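For scripting, it can be convenient to reduce the Event list to the most recent Volume Condition. The sketch below is illustrative only: `pvc_event_reasons` and `latest_volume_condition` are hypothetical helper names, and it assumes the Events carry a `lastTimestamp` field so that they can be sorted oldest first.

```shell
# Sketch: print the Event reasons for one PVC, oldest first, one per line.
# pvc_event_reasons is a hypothetical helper; sorting assumes that the
# Events have .lastTimestamp set.
pvc_event_reasons() {
    oc get events \
        --field-selector "involvedObject.kind=PersistentVolumeClaim,involvedObject.name=$1" \
        --sort-by=.lastTimestamp \
        -o custom-columns=REASON:.reason --no-headers
}

# Sketch: read reasons on stdin and print the last VolumeCondition* reason,
# or "Unknown" when no Volume Condition has been reported at all.
latest_volume_condition() {
    awk '/^VolumeCondition/ { last = $1 }
         END { print (last == "" ? "Unknown" : last) }'
}
```

Combined, `pvc_event_reasons artifacts | latest_volume_condition` prints a single reason such as VolumeConditionHealthy. Keep in mind that Events expire after a while, so "Unknown" does not necessarily mean the volume was never checked.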

Forcing an unhealthy Volume Condition

Warning

This section describes how to break the CephFS functionality of Red Hat OpenShift Data Foundation. Following these steps will cause failures of all CephFS volumes used by all applications on the OpenShift cluster. Only execute these steps on an OpenShift cluster that can be used for testing.

Certain events can trigger an unhealthy Volume Condition. Often these problems are caused by network issues or by a misconfiguration. This example simulates fatal failures in the CephFS MDS, which is a crucial component for CephFS PersistentVolumes to function correctly.

Check the available CephFS MDS daemons, which are configured through Deployments:

$ oc -n openshift-storage get deployments -l app.kubernetes.io/name=ceph-mds

NAME READY UP-TO-DATE AVAILABLE AGE

rook-ceph-mds-ocs-storagecluster-cephfilesystem-a 1/1 1 1 148m

rook-ceph-mds-ocs-storagecluster-cephfilesystem-b 1/1 1 1 148m

The CephFS MDS daemons can run in high-availability mode, so both Deployments need to be scaled down to 0 replicas:

$ oc -n openshift-storage scale deployments -l app.kubernetes.io/name=ceph-mds --replicas=0

deployment.apps/rook-ceph-mds-ocs-storagecluster-cephfilesystem-a scaled

deployment.apps/rook-ceph-mds-ocs-storagecluster-cephfilesystem-b scaled

Confirm that the CephFS MDS daemons are no longer running:

$ oc -n openshift-storage get deployments -l app.kubernetes.io/name=ceph-mds

NAME READY UP-TO-DATE AVAILABLE AGE

rook-ceph-mds-ocs-storagecluster-cephfilesystem-a 0/0 0 0 153m

rook-ceph-mds-ocs-storagecluster-cephfilesystem-b 0/0 0 0 152m

$ oc -n openshift-storage get pods -l app.kubernetes.io/name=ceph-mds

No resources found in openshift-storage namespace.

For the Volume Condition to be updated, reads or writes must be happening on the PersistentVolumeClaim. In the case of the web server, it is possible that all content is cached by the service and no I/O is required on the volume. This is not a real issue: the web service is still functioning correctly, so the Volume Condition of the PersistentVolumeClaim is not relevant to the availability of the web server.

Assuming the web server actively reads from or writes to the volume, the volume is detected as unhealthy after a short delay:

$ oc get events -w

...

0s Warning VolumeConditionAbnormal persistentvolumeclaim/public-html health-check has not responded for 82.679238 seconds
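The Warning message includes how long the health-check has been unresponsive, which a monitoring script could compare against its own threshold. The helpers below are a sketch that assumes the message keeps the wording shown in the output above; `stall_seconds` and `stalled_longer_than` are hypothetical names, and the message format is not a documented interface.

```shell
# Sketch: extract the number of seconds from a VolumeConditionAbnormal
# message. Prints nothing when the message has a different wording.
stall_seconds() {
    printf '%s\n' "$1" |
        sed -n 's/.*has not responded for \([0-9][0-9.]*\) seconds.*/\1/p'
}

# Sketch: succeed (exit 0) when the message reports a stall longer than
# the given threshold in seconds; fail otherwise.
# Usage: stalled_longer_than "<message>" <threshold-seconds>
stalled_longer_than() {
    secs="$(stall_seconds "$1")"
    [ -n "$secs" ] &&
        awk -v s="$secs" -v t="$2" 'BEGIN { exit !(s + 0 > t + 0) }'
}
```

For example, `stalled_longer_than "health-check has not responded for 82.679238 seconds" 60` succeeds, while the same message with a threshold of 120 does not.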

The details of the PersistentVolumeClaim contain the Warning Event too:

$ oc describe pvc/artifacts

Name: artifacts

Namespace: default

StorageClass: ocs-storagecluster-cephfs

Status: Bound

Volume: pvc-e2ec7646-eb28-4a88-8eb5-dfc37cf2111e

Labels: <none>

Annotations: pv.kubernetes.io/bind-completed: yes

pv.kubernetes.io/bound-by-controller: yes

volume.beta.kubernetes.io/storage-provisioner: openshift-storage.cephfs.csi.ceph.com

volume.kubernetes.io/storage-provisioner: openshift-storage.cephfs.csi.ceph.com

Finalizers: [kubernetes.io/pvc-protection]

Capacity: 1Gi

Access Modes: RWX

VolumeMode: Filesystem

Used By: builder

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal VolumeConditionHealthy 20m CSI-Addons volume is in a healthy condition

Normal VolumeConditionHealthy 20m CSI-Addons volume is in a healthy condition

Warning VolumeConditionAbnormal 4m49s CSI-Addons health-check has not responded for 89.445320 seconds

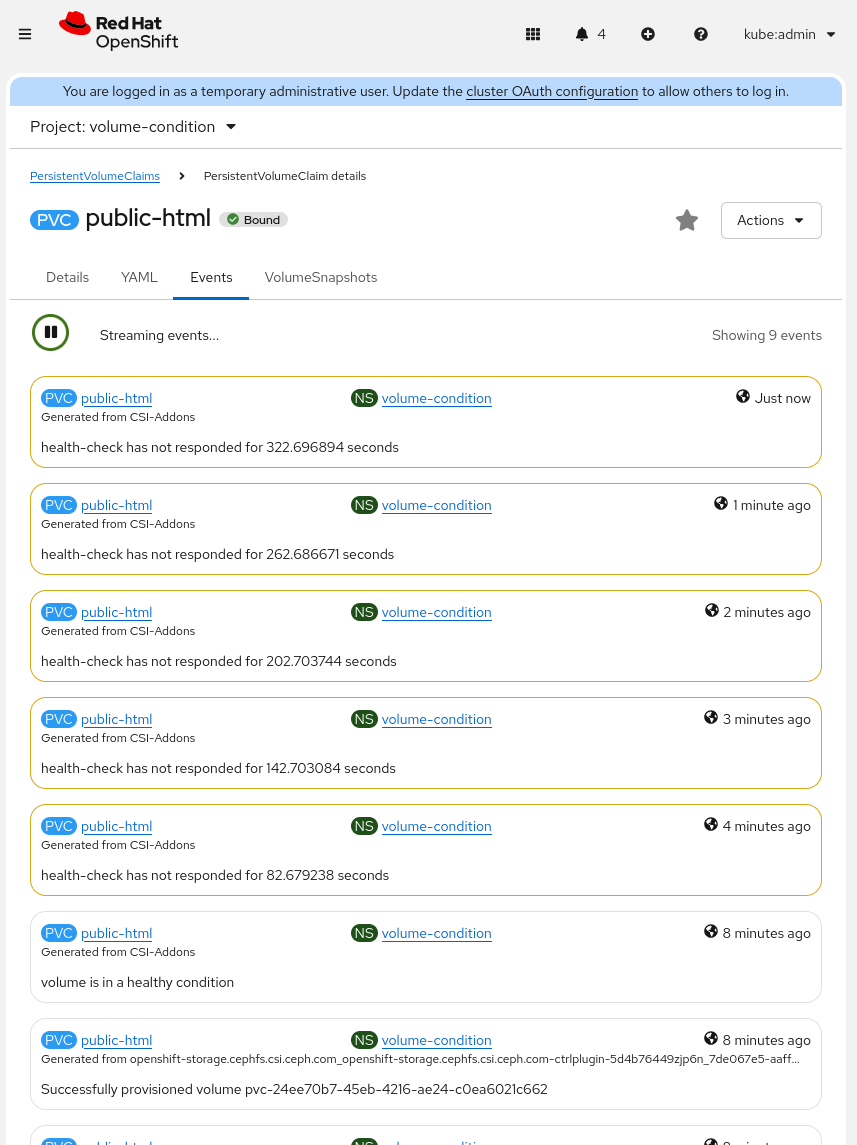

In the Red Hat OpenShift Console the Events tab for the PersistentVolumeClaim shows the Warning too:

Recovery from the unhealthy Volume Condition

In the example above, the CephFS MDS daemons were scaled down to provoke a fatal failure. To get all CephFS volumes working again, scale the CephFS MDS Deployments back up:

$ oc -n openshift-storage scale deployments -l app.kubernetes.io/name=ceph-mds --replicas=1

deployment.apps/rook-ceph-mds-ocs-storagecluster-cephfilesystem-a scaled

deployment.apps/rook-ceph-mds-ocs-storagecluster-cephfilesystem-b scaled

After scaling up, the CephFS MDS Pods should be running again:

$ oc -n openshift-storage get pods -l app.kubernetes.io/name=ceph-mds

NAME READY STATUS RESTARTS AGE

rook-ceph-mds-ocs-storagecluster-cephfilesystem-a-7fb849c5txxk6 2/2 Running 0 9s

rook-ceph-mds-ocs-storagecluster-cephfilesystem-b-5b8cc885lz8l2 2/2 Running 0 9s
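When the recovery is scripted, the MDS Deployments can be waited on instead of polled. This is a minimal sketch: `wait_for_mds` is a hypothetical helper name and the 300s default timeout is an assumption. The namespace and label selector are the ones used in the commands above.

```shell
# Sketch: block until all CephFS MDS Deployments report Available.
# wait_for_mds is a hypothetical helper; the default timeout is an assumption.
wait_for_mds() {
    oc -n openshift-storage wait deployment \
        -l app.kubernetes.io/name=ceph-mds \
        --for=condition=Available \
        --timeout="${1:-300s}"
}
```

A Deployment being Available only says that its Pods are ready. The Volume Condition of a PersistentVolumeClaim is updated separately, on its next health-check.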

The Volume Condition of the PersistentVolumeClaim then returns to VolumeConditionHealthy:

$ oc describe pvc/artifacts

...

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning VolumeConditionAbnormal 11m CSI-Addons health-check has not responded for 634.111610 seconds

Warning VolumeConditionAbnormal 10m CSI-Addons health-check has not responded for 650.078727 seconds

Normal VolumeConditionHealthy 9m51s (x3 over 32m) CSI-Addons volume is in a healthy condition