OpenShift nodes are being overloaded and going into NotReady state

Environment

- Red Hat OpenShift Container Platform (RHOCP) 4

Issue

- The nodes are being overloaded by their pods' resource usage.

- The pods aren't being scheduled evenly across the nodes.

Resolution

The two most direct ways to improve node performance and scheduling are to:

A) Set appropriate pod resource requests and limits.

B) Allocate additional resources for the nodes' systems.
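As a minimal sketch of option A, requests and limits can be set on an existing workload with `oc set resources`. The namespace `my-project`, the workload `deployment/my-app`, and all values below are hypothetical placeholders; realistic values should come from the workload's observed usage. The helper `set_resources` is also an illustrative name, and it only prints the command unless it is called with `oc` directly.

```shell
# Hypothetical placeholders: replace with a real workload and measured values.
namespace="my-project"
workload="deployment/my-app"
requests="cpu=100m,memory=128Mi"
limits="cpu=500m,memory=256Mi"

# Wrap the command in a function so it can be previewed before it is run.
# The function's arguments are used as the command prefix.
set_resources() {
  "$@" set resources "$workload" \
    --namespace "$namespace" \
    --requests="$requests" \
    --limits="$limits"
}

# Preview: the prefix `echo oc` prints the command instead of executing it.
set_resources echo oc

# To apply the change for real, call it with `oc` as the prefix:
# set_resources oc
```

Previewing first keeps the change reviewable, since updating a deployment's resources triggers a new rollout of its pods.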

Refer to Pods are not getting allocated evenly on worker nodes in OpenShift for additional information about pod scheduling.

Root Cause

Disclaimer: Links contained herein to external website(s) are provided for convenience only. Red Hat has not reviewed the links and is not responsible for the content or its availability. The inclusion of any link to an external website does not imply endorsement by Red Hat of the website or their entities, products or services. You agree that Red Hat is not responsible or liable for any loss or expenses that may result due to your use of (or reliance on) the external site or content.

In Kubernetes, the Scheduler is responsible for assigning new pods to nodes in the cluster. It does so by scoring each node on several factors. One of the primary factors is how many resources the node has available compared with how many resources the pod wants. The latter value is represented by the pod's resource requests.

When a pod has no requests and/or limits set, or its requests and limits do not match its actual usage, it can be scheduled poorly. When several pods are scheduled poorly, the result is lopsided scheduling: some nodes are overloaded by their pods' resource usage while others have far fewer resources in use, which is undesirable for cluster health and function.
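To make the effect concrete, the following is a toy calculation with hypothetical numbers: a node with 4000m of allocatable CPU and three made-up pods, two of which set no CPU request. It is a simplification that only contrasts the capacity left according to requests with the capacity left according to actual usage; it does not model the scheduler's real scoring.

```shell
# Hypothetical node with 4000m allocatable CPU.
# Input columns: pod name, CPU request (millicores), CPU usage (millicores).
allocatable=4000

summary=$(awk -v alloc="$allocatable" '
  { requested += $2; used += $3 }
  END {
    printf "free-by-requests=%dm free-by-usage=%dm\n", alloc - requested, alloc - used
  }
' <<'EOF'
pod-a 500 400
pod-b 0 1500
pod-c 0 1500
EOF
)

echo "$summary"
```

With these numbers the node appears to have 3500m free when judged by requests, while only 600m is free in practice, so further pods could be placed on a node that is already close to saturation.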


Diagnostic Steps

Below is a bash script which will poll the API for a description of each node, then search for the non-terminated pods scheduled to each node that do not have a CPU or Memory request in place, excluding those in openshift-* namespaces. It will then cross-reference each pod with its current usage, for a general idea of the resources that pod is unexpectedly taking up in the cluster.

Create a bash script with name req-finder.sh and the following content:

#!/bin/bash
# Breaks down node descriptions to check on pod scheduling

# Start the `pods` array with descriptive column headers
declare -a pods=( "Namespace Pod-Name CPU-Request MEM-Request CPU-Current MEM-Current" )

# Read our pod info into array `pods`, starting at index 1
readarray -t -O 1 pods < <(
  oc describe nodes \
    | awk '
      /^Non-terminated Pods:/,/^Allocated resources:/ {  # Search through all non-terminated pods on the hosts
        # Select only pods outside openshift-* namespaces without a cpu or mem request;
        # NF >= 7 skips the section header and footer lines, which have fewer fields
        if (NF >= 7 && $1 !~ /openshift-/ && ($3 == 0 || $7 == 0)) {
          print $1, $2, $3, $7  # Print namespace, pod name, cpu, and mem requests
        }
      }'
)

# Iterate over the `pods` array, appending current usage data
length=$(( ${#pods[@]} - 1 ))
for i in $(seq "$length"); do
  # Grab just namespace and pod name from array (unquoted on purpose, to split on whitespace)
  param=( ${pods[$i]} )

  # Collect usage metrics into variable `addons`
  addons="$(
    oc adm top pod \
      --no-headers "${param[1]}" \
      --namespace "${param[0]}" \
      | awk '{print $2, $3}'
  )"

  # Append metrics to array
  pods[$i]="${pods[$i]} $addons"
done

# Print the table with aligned columns
for pod in "${pods[@]}"; do
  echo "$pod"
done | column -t
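The selection logic in the awk step can be tried without a cluster. The sketch below feeds a made-up excerpt, laid out like `oc describe nodes` output, through the same filter. The function names `sample_describe` and `filter_pods` and the pod rows are hypothetical, and the `NF >= 7` guard is an added assumption that skips lines with too few fields to be pod rows.

```shell
# Made-up excerpt in the layout of `oc describe nodes`; not real cluster data.
sample_describe() {
  cat <<'EOF'
Non-terminated Pods:          (3 in total)
  Namespace           Name             CPU Requests  CPU Limits  Memory Requests  Memory Limits  Age
  ---------           ----             ------------  ----------  ---------------  -------------  ---
  openshift-dns       dns-default-abc  0 (0%)        0 (0%)      0 (0%)           0 (0%)         5d
  demo                web-1            0 (0%)        0 (0%)      128Mi (0%)       0 (0%)         2d
  demo                api-1            100m (2%)     0 (0%)      256Mi (1%)       0 (0%)         2d
Allocated resources:
  (Total limits may be over 100 percent, i.e., overcommitted.)
EOF
}

# Keep pods outside openshift-* namespaces that lack a CPU or memory request.
# Field 3 is the CPU request and field 7 is the memory request.
filter_pods() {
  awk '
    /^Non-terminated Pods:/,/^Allocated resources:/ {
      if (NF >= 7 && $1 !~ /openshift-/ && ($3 == 0 || $7 == 0)) {
        print $1, $2, $3, $7
      }
    }'
}

sample_describe | filter_pods
```

Only `demo web-1` is selected here: `openshift-dns` is excluded by namespace, and `api-1` has both requests set.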

Running the script will produce output that appears like so:

$ chmod u+x req-finder.sh
$ ./req-finder.sh
Namespace                            Pod-Name                                      CPU-Request  MEM-Request  CPU-Current  MEM-Current
open-cluster-management-agent-addon  application-manager-7c5cc788df-prmcm          0            128Mi        1m           58Mi
open-cluster-management-agent-addon  config-policy-controller-7599f64b67-q4wqs     0            128Mi        0m           88Mi
open-cluster-management-agent-addon  iam-policy-controller-77c4877dd9-4blx8        0            150Mi        0m           32Mi
test                                 argocd-test-app-7d4f7bff77-77glm              0            0            0m           45Mi
test1                                httpd-59bfcd8bcb-kdjfm                        0            0            0m           49Mi
open-cluster-management-agent-addon  cluster-proxy-proxy-agent-f47f7888d-fg4j5     0            0            0m           35Mi
open-cluster-management-agent-addon  cluster-proxy-service-proxy-694b955bff-xzc4s  0            128Mi        0m           22Mi
open-cluster-management-agent-addon  klusterlet-addon-search-5495fc797d-mwbhc      0            128Mi        22m          147Mi
test                                 argocd-test-app-7d4f7bff77-4vk25              0            0            0m           49Mi
test                                 argocd-test-app-7d4f7bff77-gc47b              0            0            0m           46Mi
test1                                httpd-59bfcd8bcb-nhsmh                        0            0            1m           49Mi
open-cluster-management-agent-addon  cert-policy-controller-56466f6cdf-4vrx6       0            150Mi        0m           19Mi
open-cluster-management-agent-addon  governance-policy-framework-59f85fc577-gvkvn  0            128Mi        1m           71Mi
open-cluster-management-agent-addon  klusterlet-addon-workmgr-75567869cc-9kkkp     0            128Mi        0m           36Mi
open-cluster-management-agent        klusterlet-648c5b8c7b-rr7gv                   0            0            1m           82Mi
psa                                  nginx-754c485cc7-vtslc                        0            0            0m           6Mi
test                                 argocd-test-app-7d4f7bff77-tj88k              0            0            0m           49Mi
test                                 argocd-test-app-7d4f7bff77-vswtc              0            0            0m           47Mi
test1                                httpd-59bfcd8bcb-wd9hj                        0            0            0m           42Mi
testjson                             code-with-quarkus-3-947d4                     0            0            1m           165Mi
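On a busy cluster the list can be long, so it may help to see which namespaces contribute the most pods without requests. The following is a small sketch of one way to summarize the script's output. The function name `summarize` is hypothetical, and the input rows are a few lines copied from the sample output above.

```shell
# Count listed pods per namespace, skipping the header row,
# then sort by count (descending) and namespace name.
summarize() {
  awk 'NR > 1 { count[$1]++ }
       END { for (ns in count) print count[ns], ns }' \
    | sort -k1,1nr -k2,2
}

# Against a live cluster this would be: ./req-finder.sh | summarize
summarize <<'EOF'
Namespace Pod-Name CPU-Request MEM-Request CPU-Current MEM-Current
test argocd-test-app-7d4f7bff77-77glm 0 0 0m 45Mi
test1 httpd-59bfcd8bcb-kdjfm 0 0 0m 49Mi
test argocd-test-app-7d4f7bff77-4vk25 0 0 0m 49Mi
psa nginx-754c485cc7-vtslc 0 0 0m 6Mi
EOF
```

For these rows, `test` is listed first with a count of 2, followed by the namespaces that have a single pod each.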

NOTE: This can also be used in conjunction with the ocadmtop_node.sh script to discover the current top consumers overall in the cluster.

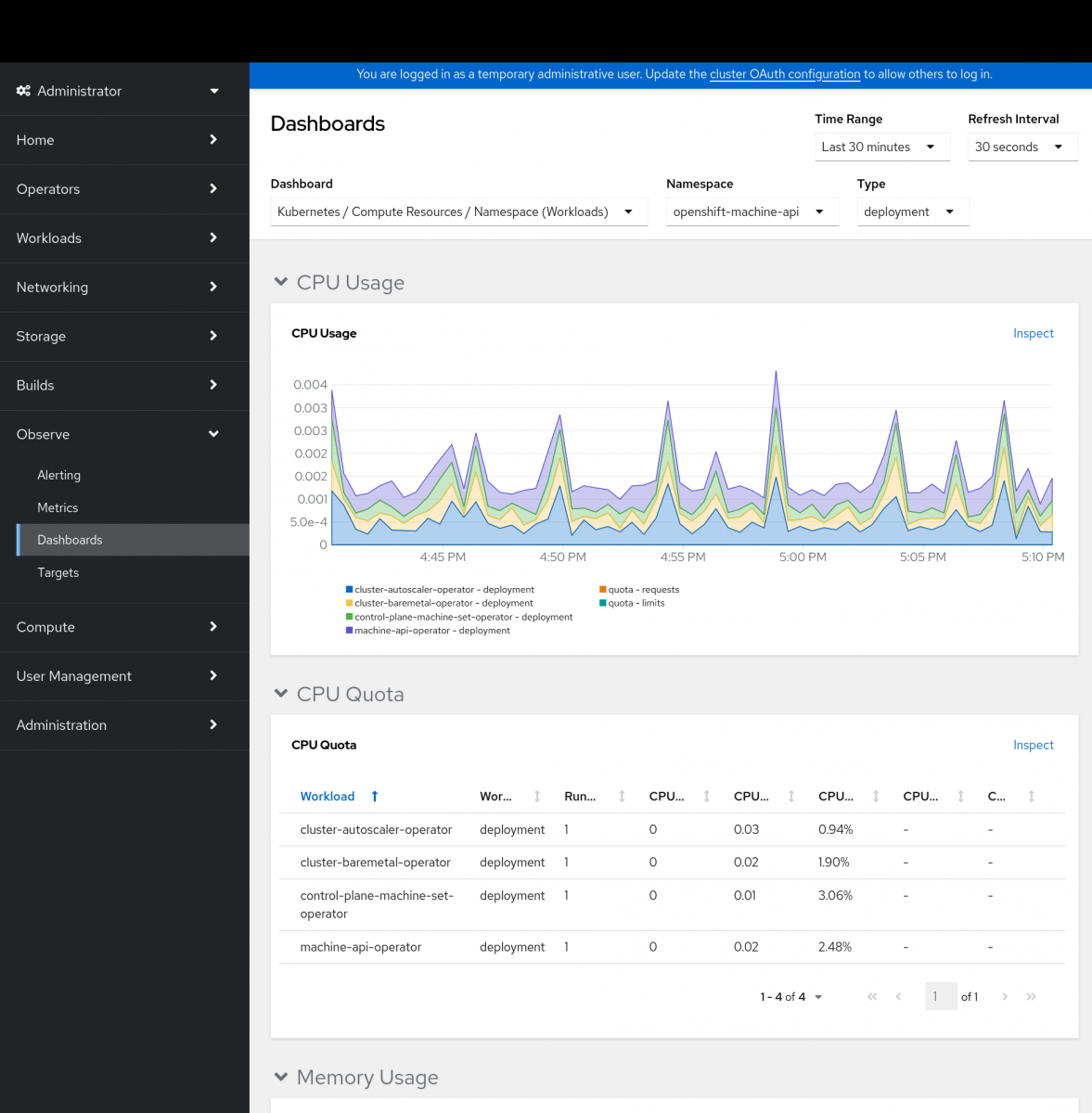

Once used, it is possible to track the pod's usage in the Metrics Dashboard for the cluster and identify its actual usage over time from the OpenShift Web Console by going to Observe > Dashboards. Set the Dashboard to an appropriate value to measure out usage, such as "Kubernetes / Compute Resources / Namespace (Workloads)" for use by type, and allow to identify resource usage over time:

If further guidance or advice on the cluster's scheduling is required, please open a case with Red Hat Support.

This solution is part of Red Hat’s fast-track publication program, providing a huge library of solutions that Red Hat engineers have created while supporting our customers. To give you the knowledge you need the instant it becomes available, these articles may be presented in a raw and unedited form.