OpenShift Virtualization - Fencing and VM High Availability Guide

Introduction

In virtualized environments, high availability (HA) is essential to ensure that workloads continue to operate in the event of node failure. In this context, high availability refers to the platform’s ability to restart workloads, such as virtual machines, which were hosted on a node that is no longer responding. In OpenShift Virtualization, HA capabilities are implemented using Kubernetes-native constructs, enhanced by OpenShift’s self-healing infrastructure. One key component of HA is fencing, the process of isolating a failed or suspect node to prevent data corruption or split-brain scenarios. Split-brain can occur when the nodes within the cluster stop communicating with each other and yet continue to operate independently. This can lead to data inconsistencies and node conflicts, for example when the cluster attempts to restart a VM that is still running on an isolated node.

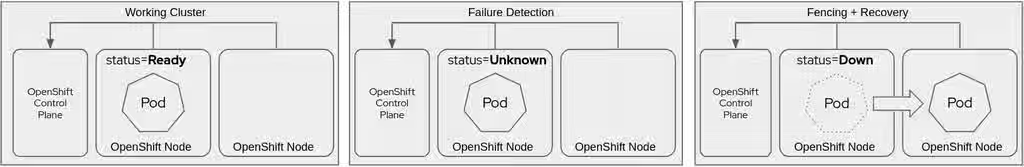

Restarting virtual machines (VMs) when a node fails occurs in three distinct phases: failure detection, fencing, and remediation. Each phase involves different components of the OpenShift and Kubernetes stack and has specific configuration requirements within the cluster. Failure detection identifies when a node is no longer responding, fencing ensures that the failed node is safely isolated and no workload is running, and remediation involves restarting the affected VMs on a healthy node.

Importantly, administrators are responsible for enabling and configuring the fencing mechanism after cluster deployment. Without the fencing configuration, node remediation and VM restarts will not happen as expected.

This guide provides a technical overview of fencing and remediation in OpenShift Virtualization, focusing on node failure detection and automated remediation strategies including Self Node Remediation (SNR), Fence Agents Remediation, and Machine Deletion Remediation. The Workload Availability for OpenShift documentation contains the most thorough and up-to-date information about the functionality, configuration, and options for detecting and recovering from node failure.

| Feature | Self Node Remediation | Fence Agents Remediation | Machine Deletion Remediation |
|---|---|---|---|
| VM recovery time | Slowest | Fastest | Varies |
| Requires BMC (e.g. IPMI) | No | Yes | On-prem, yes; Cloud, no |
| Requires watchdog device | Preferred | No | No |
| Works without external infra | Yes | No | No |
| Automatic node replacement | No | No | Yes |

Node Failure Detection

OpenShift uses node or machine health checks to monitor node health and take action if the node is determined to be unhealthy.

Before choosing a failure detection method, it’s important to understand the difference between a node and a machine in OpenShift.

- Nodes are Kubernetes objects which are a logical representation of a physical or virtual host that has joined the cluster and is capable of running workloads. Nodes are registered with the Kubernetes control plane by the kubelet (the node agent) during the cluster join process. Nodes are runtime objects; OpenShift does not inherently manage how nodes are created, destroyed, or replaced.

- Machines are an abstraction used in OpenShift by the Machine API and Bare Metal Operators to represent the desire for a cluster node to exist, including how it should be provisioned, configured, and managed by the underlying infrastructure. Put differently, when a Machine is created, the Machine API provider provisions and configures a physical or virtual host from the underlying infrastructure. The Machine contains provider-specific configuration (e.g. AWS, vSphere, Bare Metal) and is typically created automatically by a MachineSet. The Machine API Operator interfaces with the underlying infrastructure and is responsible for the deployment and configuration of the physical or virtual host.

When a Machine is created, it triggers the provisioning of a new virtual or physical host. Depending on the underlying platform, this can include installing the operating system, along with configuring kubelet, and joining the cluster, at which point a corresponding Node object is created. The Machine resource is responsible for lifecycle management of the node, e.g. scaling, updating, and replacement.

Not all clusters use Machines to manage cluster members. Only clusters which have Machine API configured, such as with the baremetal cloud provider or using the Bare Metal Operator, will have Machine objects present.

Node Health Check

The Node Health Check (NHC) operator functions at the Kubernetes node level, where it directly observes node conditions and triggers remediation when a node is determined to be unhealthy. This approach is useful in deployments where machines are not managed by a MachineSet or where node replacement is unavailable or undesirable. Because NHC interacts directly with the node, it allows more flexible and programmable remediation workflows, such as triggering a node-local reboot. It also works well in environments where nodes are manually managed or provisioned outside of OpenShift’s typical automation stack.

Machine Health Check

The Machine Health Check (MHC) is the default and integrated mechanism provided by OpenShift’s Machine API. It monitors the health of cluster nodes both by monitoring node conditions, similar to NHC, and also watching the associated Machine object status. When a node becomes unhealthy, typically indicated by conditions such as Ready=False or Ready=Unknown for a specified period, MHC will, by default, delete the Machine object, triggering OpenShift’s Machine API to recreate it. However, just like with NHC, node reboots may also be used if desired.
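The "unhealthy for a specified period" rule described above can be sketched in a few lines of Python. This is an illustrative model only, not the operator's code: the function name `is_unhealthy`, the dictionary layout, and the 300-second threshold are assumptions made for the example.

```python
from datetime import datetime, timedelta, timezone

# Statuses of the Ready condition that count as unhealthy in this sketch.
UNHEALTHY_STATUSES = {"False", "Unknown"}


def is_unhealthy(conditions, now, unhealthy_after):
    """Return True if Ready has been False/Unknown for at least unhealthy_after."""
    for cond in conditions:
        if cond["type"] != "Ready":
            continue
        if cond["status"] not in UNHEALTHY_STATUSES:
            return False
        # The condition must have persisted for the whole configured period.
        return now - cond["lastTransitionTime"] >= unhealthy_after
    # No Ready condition reported: this sketch treats the node as healthy.
    return False


now = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
conditions = [
    {"type": "Ready", "status": "Unknown",
     "lastTransitionTime": now - timedelta(seconds=400)},
]
print(is_unhealthy(conditions, now, timedelta(seconds=300)))
```

A shorter period triggers remediation sooner, at the cost of reacting to brief, self-correcting interruptions.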

MHC is tightly coupled with environments that have automated infrastructure provisioning, such as cloud platforms and on-premises bare metal deployments with the baremetal cloud provider configured, such as when deploying bare metal IPI. The health check options available to verify and report node status depend on other configuration in the cluster. All deployments are able to use node health checks, but only clusters with Machines configured and managed by a provider are able to use machine health checks.

A cluster can use either node health checks or machine health checks, but not both at the same time. It is important to choose the option that is best suited to the infrastructure type your cluster is deployed to, the integration with the infrastructure, and the desired remediation actions.

Fencing and Remediation Strategies

When a node is determined to be not ready, the default behavior varies based on the specific workload, but generally includes:

- Eviction of Pods from failed nodes after a grace period.

- Rescheduling of workloads if replica sets or controllers (e.g., Deployments) are managing the pods.

However, this is insufficient for VM workloads, as they are stateful and sensitive to split-brain conditions, where restarting a VM on a different node while it’s still running on the original node can lead to data corruption. This is where fencing and remediation come in.

Self Node Remediation (SNR)

Self Node Remediation is a lightweight, node-local remediation strategy that allows a node to detect its own inability to communicate with the OpenShift API server and voluntarily reboot itself to restore cluster integrity. It is implemented using the medik8s project and is designed for autonomous recovery without requiring external fencing hardware or cloud APIs.

SNR functionality, in combination with the Node Health Check Operator, is used when the appropriate remediation template is applied in the node health check configuration. An agent on the node continuously checks the node’s ability to contact the Kubernetes API server and compares its view of the cluster’s health with that of its peers. If a node determines that it is isolated (e.g. a network partition or control plane unreachability), or it can reach the control plane and the control plane has marked it as unhealthy, it will trigger a reboot using the system’s watchdog device. By default, the Operator will use a hardware watchdog device if available or softdog if one is not configured. If neither is available, then a software reboot is used. Software reboots are the least reliable mechanism.
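The decision flow in the paragraph above can be modelled as two small functions. This is a simplified sketch under stated assumptions: the function names and boolean inputs are hypothetical, and the real agent derives "isolated" from peer communication that is not modelled here.

```python
def should_self_reboot(api_reachable: bool, marked_unhealthy: bool, isolated: bool) -> bool:
    """Decide whether the node should reboot itself.

    Reboot when the control plane is reachable and has marked the node
    unhealthy, or when the node has concluded that it is isolated.
    """
    if api_reachable:
        return marked_unhealthy
    return isolated


def pick_reboot_mechanism(hardware_watchdog: bool, softdog: bool) -> str:
    """Choose the reboot mechanism in the order of preference described above."""
    if hardware_watchdog:
        return "hardware watchdog"
    if softdog:
        return "softdog"
    # Least reliable option, used only when no watchdog device exists.
    return "software reboot"


# An isolated node that only has the software watchdog available.
if should_self_reboot(api_reachable=False, marked_unhealthy=False, isolated=True):
    print(pick_reboot_mechanism(hardware_watchdog=False, softdog=True))
```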

The reboot ensures that nothing is running on the failed node, allowing the control plane to safely reschedule VMs and other stateful workloads onto healthy nodes, even if the rebooted node never comes back online.

The SNR configuration includes many tunable options to optimize the timeout for determining when a node is isolated, when to trigger the reboot action, and how long to wait to reschedule workload after the reboot action is triggered. Each of these should be carefully chosen, and thoroughly tested, to work with your environment and meet your recovery time objectives.

SNR is recommended for nodes that do not have a management interface or if the BMC interface is unreachable from within the cluster, for example due to security constraints, since SNR does not require one to work.

Requirements

- Self Node Remediation Operator deployed and configured

- Node Health Check (NHC) configured using the SelfNodeRemediationTemplate
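As a rough illustration of the second requirement, the following Python sketch builds a NodeHealthCheck manifest that points at a SelfNodeRemediationTemplate and prints it as JSON, which `oc apply -f -` accepts. The API group/version strings and field names reflect the medik8s custom resources as best understood here; the object name, template name, namespace, `minHealthy` value, and 300-second durations are placeholders to verify against the product documentation for your version.

```python
import json


def node_health_check(name, template_name, template_namespace, duration="300s"):
    """Build a NodeHealthCheck manifest that remediates workers with SNR."""
    return {
        "apiVersion": "remediation.medik8s.io/v1alpha1",
        "kind": "NodeHealthCheck",
        "metadata": {"name": name},
        "spec": {
            # Pause remediation if too few selected nodes are healthy (placeholder value).
            "minHealthy": "51%",
            "selector": {
                "matchExpressions": [
                    {"key": "node-role.kubernetes.io/worker", "operator": "Exists"}
                ]
            },
            "remediationTemplate": {
                "apiVersion": "self-node-remediation.medik8s.io/v1alpha1",
                "kind": "SelfNodeRemediationTemplate",
                "name": template_name,            # placeholder name
                "namespace": template_namespace,  # placeholder namespace
            },
            # The unhealthy timeout: how long a condition must persist.
            "unhealthyConditions": [
                {"type": "Ready", "status": "False", "duration": duration},
                {"type": "Ready", "status": "Unknown", "duration": duration},
            ],
        },
    }


manifest = node_health_check(
    "nhc-worker-snr", "example-snr-template", "example-namespace"
)
print(json.dumps(manifest, indent=2))
```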

Advantages

- Node-local process without external controllers or APIs

- Easiest configuration

- Does not depend on external fencing devices

- Does not require a communication path between the workload cluster and BMC network

See the documentation for full configuration options and recommendations.

Fence Agents Remediation (FAR)

Fence Agents Remediation (FAR) is a traditional, infrastructure-based approach to fencing that uses out-of-band management interfaces—such as IPMI, iDRAC, iLO, or Redfish—to forcibly power cycle a failed node. This method ensures that a non-responsive node is not running any workload before any remediation action, such as VM restart or node replacement, takes place. This protects against split-brain scenarios for stateful workloads like virtual machines.

FAR is suitable for environments where nodes are physical (bare metal) and equipped with a supported Baseboard Management Controller (BMC). It is often the most deterministic and reliable fencing method in traditional enterprise environments. The fence agent acts as the bridge between OpenShift and the BMC. It is invoked with the necessary parameters (e.g., IP, login credentials, fencing type), which are provided in the remediation template definition.

FAR accelerates workload recovery by setting a taint on the unhealthy node and deleting workloads in order to reschedule them to healthy nodes after the fencing action has been determined to be successful. When configuring FAR, ensure that you use a fence agent that is supported for bare metal and compatible with your hardware, e.g. fence_ipmilan, fence_redfish, or a hardware vendor specific option such as fence_ilo for HPE. Fence agents for virtual platforms, such as VMware or Red Hat Virtualization (RHV), are not compatible with bare metal OpenShift deployments.

For most on-premises deployments, FAR provides the fastest workload recovery time. As a result, it is recommended when a management interface is available.

Requirements

- Fence Agents Remediation Operator deployed and configured

- Fencing device accessible from the Pod network and credentials provided

- Compatible fence agent configured in the FenceAgentsRemediationTemplate
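To make the third requirement more concrete, here is a hedged Python sketch that assembles a FenceAgentsRemediationTemplate for fence_ipmilan and prints it as JSON. The API group/version and the `agent`, `sharedparameters`, and `nodeparameters` fields reflect the medik8s custom resource as best understood here; the node names, BMC addresses (documentation-range IPs), credentials, and object names are placeholders. Inline credentials are used only to keep the example short; check the product documentation for the supported way to supply them.

```python
import json


def fence_agents_template(name, namespace, bmc_ip_by_node, username, password):
    """Build a FenceAgentsRemediationTemplate that uses fence_ipmilan."""
    return {
        "apiVersion": "fence-agents-remediation.medik8s.io/v1alpha1",
        "kind": "FenceAgentsRemediationTemplate",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "template": {
                "spec": {
                    "agent": "fence_ipmilan",
                    # Options passed to the agent for every node.
                    "sharedparameters": {
                        "--username": username,
                        "--password": password,
                        "--lanplus": "",
                        "--action": "reboot",
                    },
                    # Options whose value differs per node: one BMC address each.
                    "nodeparameters": {"--ip": dict(bmc_ip_by_node)},
                }
            }
        },
    }


far_template = fence_agents_template(
    "example-far-template",
    "example-namespace",
    {"worker-0": "192.0.2.10", "worker-1": "192.0.2.11"},
    username="admin",
    password="changeme",
)
print(json.dumps(far_template, indent=2))
```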

Advantages

- Uses out-of-band management to enforce power state, even if node OS is unresponsive

- Mature solution, used by other Red Hat KVM-based virtualization solutions

- Can forcibly reboot or shutdown non-cooperative nodes

See the documentation for full configuration options and recommendations.

Machine Deletion Remediation (MDR)

Machine Deletion Remediation (MDR) is a cloud-native and infrastructure-integrated fencing and recovery strategy that removes a failed node from the cluster and recreates it using the Machine API. Rather than attempting to reboot or recover a failed node, this approach uses automated provisioning systems to replace the node. It is usable with bare metal clusters managed by the Bare Metal Operator.

When node health checks determine that a node is unhealthy, MDR will reprovision the node. This process relies on Machine API integration to declaratively manage the nodes and perform the process of redeploying Red Hat Enterprise Linux CoreOS (RHCOS) and rejoining the node to the cluster.

If your cluster has been deployed with a Machine API provider, for example the baremetal cloud provider type used by the bare metal IPI method, or AWS (self-managed or ROSA), consider using this fencing option. If additional unprovisioned nodes are available to the cluster, this method will use those nodes to replace the failed node’s capacity while the Bare Metal Operator attempts to reset the failed node.

Critically, MDR should not operate on control plane nodes, even if they are managed using a control plane machine set. For clusters with a schedulable control plane, MDR destroying control plane nodes could destabilize the cluster, so MDR should not be used to fence and remediate these nodes. Use a different fencing option for them.

Requirements

- Machine Deletion Remediation Operator deployed and configured

- Machine API provider integration (e.g., baremetalOperator, AWS, etc.)

- Machine Health Check (MHC) policies configured

Advantages

- Integrates with cloud infrastructure and existing Machine API mechanisms

- No dependency on BMC or watchdog with hyperscalers

See the documentation for full configuration options and recommendations.

Escalating remediation strategy

Escalating node health check remediations provide a structured and progressive approach to recovering from node failures by executing multiple remediation strategies in a defined escalation order. This allows cluster administrators to apply the least disruptive action first and escalate only if the issue persists, optimizing for both speed and reliability.

An example escalation could be:

- Fence Agents Remediation: The fastest method, power-cycling the node via Out-of-Band Management (OOBM). This is ideal when OOBM connectivity still exists.

- Self Node Remediation: If OOBM fails (e.g., due to complete network isolation), this method uses local watchdog timers and timeouts to autonomously reboot the disconnected node.

- Machine Deletion Remediation: As a final measure, for example when there are persistent disk issues (like a corrupted partition table), this strategy wipes and reprovisions the entire node.
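The example escalation above could be expressed as the `escalatingRemediations` list of a node health check, where each entry has an order and a timeout after which the next entry is tried. The Python sketch below generates such a list; the API group/version strings and field names follow the medik8s custom resources as best understood here, while the template names, namespace, and timeouts are placeholders.

```python
import json

# (apiVersion, kind, template name, per-step timeout), listed in escalation order.
STEPS = [
    ("fence-agents-remediation.medik8s.io/v1alpha1",
     "FenceAgentsRemediationTemplate", "example-far-template", "300s"),
    ("self-node-remediation.medik8s.io/v1alpha1",
     "SelfNodeRemediationTemplate", "example-snr-template", "600s"),
    ("machine-deletion-remediation.medik8s.io/v1alpha1",
     "MachineDeletionRemediationTemplate", "example-mdr-template", "1800s"),
]


def escalating_remediations(steps, namespace):
    """Turn an ordered list of steps into escalatingRemediations entries."""
    return [
        {
            "remediationTemplate": {
                "apiVersion": api_version,
                "kind": kind,
                "name": name,
                "namespace": namespace,
            },
            "order": order,      # lower numbers are tried first
            "timeout": timeout,  # how long to wait before escalating
        }
        for order, (api_version, kind, name, timeout) in enumerate(steps, start=1)
    ]


escalation = escalating_remediations(STEPS, "example-namespace")
print(json.dumps({"escalatingRemediations": escalation}, indent=2))
```

In a node health check, this list takes the place of the single `remediationTemplate` field.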

By following this defined escalation order, cluster operators can apply increasingly drastic recovery actions only when necessary, optimizing for both speed and reliability in addressing node failures. The goal is to balance swift recovery with minimal disruption.

See the OpenShift documentation for configuration details and the medik8s documentation for additional details and examples using escalating remediations.

Summary

Ensuring virtual machine high availability in OpenShift Virtualization requires deliberate configuration and integration of failure detection, fencing, and remediation mechanisms. Unlike stateless container workloads, VMs introduce more complex availability challenges due to their stateful nature and longer recovery times. This makes the ability to safely detect node failures, isolate problematic nodes, and automatically restart workloads on healthy infrastructure critical for production deployments.

OpenShift offers several remediation strategies that cater to different operational environments. Self Node Remediation is ideal for edge or bare metal clusters with watchdog support, offering a lightweight and fully node-local fencing method. Fence Agents Remediation provides precise and deterministic node fencing for environments with BMC-equipped hardware, giving administrators fine-grained control over node isolation through traditional IPMI, Redfish, or other hardware interfaces. Machine Deletion Remediation is well-suited for IPI-managed clusters, where nodes can be safely treated as disposable and automatically recreated on failure.

Each approach has unique trade-offs in terms of complexity, speed, hardware dependencies, and integration with automation. Administrators must weigh these factors when choosing the right remediation strategy—or combination of strategies—for their cluster.

Ultimately, high availability in OpenShift Virtualization is not achieved by enabling a single feature, but by implementing a coordinated set of tools and policies. When properly configured, OpenShift can deliver rapid recovery from node failures, minimize VM downtime, and meet the high availability expectations of enterprise workloads.

Tested virtual machine recovery times

This section presents results from internal validation.

Environment

The software components used in the tests are shown in the following table:

| Component | OpenShift 4.14 Version | OpenShift 4.15 Version | OpenShift 4.16 Version |
|---|---|---|---|
| OpenShift Virtualization | 4.14.0 | 4.15.1 | 4.16.0.rhel9-2468 |
| Node Health Check | 0.6.1 | 0.7.0 | 0.7.0 |
| Self Node Remediation | 0.7.1 | 0.8.0 | 0.8.0 |
| Fence Agents Remediation | 0.2.1 | 0.3.0 | 0.3.0 |

Test Results

The results for each configuration were obtained by running 31 test iterations, without escalating remediation and with single node failures, i.e., only one node is unhealthy at any given time; results may vary in other scenarios. The arithmetic mean of the resulting times was then computed for SNR and FAR, starting from the lowest possible value of spec.unhealthyConditions[].duration=1 second (unhealthy timeout) and increasing in steps of 60 seconds. The main goal is to find out whether the progression follows a pattern, e.g., linear progression, logarithmic progression, etc. Results are shown in the following table:

| SNR | |||||||

| FAR | |||||||

As shown in the table, decreasing the unhealthy timeout for a given remediation operator reduces the VM recovery time. The reduction is directly proportional to the change in the configured unhealthy timeout. For instance, on OpenShift 4.15 configured with FAR, the recovery time with an unhealthy timeout of 1 second is 63 seconds shorter than with an unhealthy timeout of 60 seconds.
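The FAR figures quoted above give a quick check of that proportionality. The short Python calculation below uses only the two timeouts and the 63-second difference stated in the text; the variable names are illustrative.

```python
# Values quoted in the text for FAR on OpenShift 4.15.
timeout_low_s = 1
timeout_high_s = 60
recovery_difference_s = 63

# Change in mean recovery time per second of unhealthy timeout.
slope = recovery_difference_s / (timeout_high_s - timeout_low_s)
print(f"{slope:.2f} s of recovery time per 1 s of unhealthy timeout")
```

A slope close to 1 means that almost every second removed from the unhealthy timeout is a second removed from the VM recovery time.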

Comparing different remediation operator configurations, FAR is faster than SNR when the same unhealthy timeout is used.

Please note that reducing the unhealthy timeout may trigger unnecessary remediation actions in the presence of transient issues such as high network latency.