Administration guide

Administering Red Hat OpenShift Dev Spaces 3.27

Abstract

Preface

Install, configure, and manage Red Hat OpenShift Dev Spaces on OpenShift clusters.

Chapter 1. Security best practices

Apply these security best practices for Red Hat OpenShift Dev Spaces to protect user credentials, isolate workspaces, and reduce the cluster attack surface.

Red Hat OpenShift Dev Spaces runs on top of OpenShift, which provides the platform and the foundation for the products that run on it. Use the OpenShift documentation as the entry point for security hardening.

1.1. Project isolation in OpenShift

In OpenShift, project isolation is similar to namespace isolation in Kubernetes but is achieved through the concept of projects. A project in OpenShift is a top-level organizational unit that provides isolation and collaboration between different applications, teams, or workloads within a cluster.

By default, OpenShift Dev Spaces provisions a unique <username>-devspaces project for each user. Alternatively, the cluster administrator can disable project self-provisioning on the OpenShift level, and turn off automatic namespace provisioning in the CheCluster custom resource:

    devEnvironments:
      defaultNamespace:
        autoProvision: false

With this setup, you achieve curated access to OpenShift Dev Spaces. Cluster administrators control provisioning for each user and can explicitly configure various settings including resource limits and quotas.
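
When automatic provisioning is turned off, an administrator creates each user's project ahead of time. The following is a minimal sketch of such a project. The project name and user name are placeholders, and the labels and annotation are assumptions that should be checked against the "Provision projects in advance" section before use.

```yaml
# Hypothetical pre-provisioned project for a single user.
# The labels and the annotation are assumptions to verify against Section 6.3.
apiVersion: v1
kind: Namespace
metadata:
  name: user1-devspaces                # placeholder project name
  labels:
    app.kubernetes.io/part-of: che.eclipse.org
    app.kubernetes.io/component: workspaces-namespace
  annotations:
    che.eclipse.org/username: user1    # placeholder OpenShift user name
```

Creating the project explicitly gives the administrator a place to attach quotas and limits before the user starts a first workspace.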

1.2. Role-based access control (RBAC)

By default, the OpenShift Dev Spaces operator creates the following ClusterRoles:

- <namespace>-cheworkspaces-clusterrole

- <namespace>-cheworkspaces-devworkspace-clusterrole

The <namespace> prefix corresponds to the project name where the Red Hat OpenShift Dev Spaces CheCluster CR is located. The first time a user accesses Red Hat OpenShift Dev Spaces, the corresponding RoleBinding is created in the <username>-devspaces project.

The following table lists the resources and actions that you can grant users permission to use in their namespace.

Table 1.1. Overview of resources and actions available in a user’s namespace

| Resources | Actions |
|---|---|
| pods | "get", "list", "watch", "create", "delete", "update", "patch" |
| pods/exec | "get", "create" |
| pods/log | "get", "list", "watch" |
| pods/portforward | "get", "list", "create" |
| configmaps | "get", "list", "create", "update", "patch", "delete" |
| events | "list", "watch" |
| secrets | "get", "list", "create", "update", "patch", "delete" |
| services | "get", "list", "create", "delete", "update", "patch" |
| routes | "get", "list", "create", "delete" |
| persistentvolumeclaims | "get", "list", "watch", "create", "delete", "update", "patch" |
| apps/deployments | "get", "list", "watch", "create", "patch", "delete" |
| apps/replicasets | "get", "list", "patch", "delete" |
| namespaces | "get", "list" |
| projects | "get" |
| devworkspace | "get", "create", "delete", "list", "update", "patch", "watch" |
| devworkspacetemplates | "get", "create", "delete", "list", "update", "patch", "watch" |

Each user is granted permissions only to their namespace and cannot access other users' resources. Cluster administrators can add extra permissions to users. They should not remove permissions granted by default.
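
To make the table more concrete, the following sketch shows how two of its rows could be written as Kubernetes RBAC rules. The ClusterRole name is hypothetical and the fragment is illustrative only. The operator-managed ClusterRoles listed earlier remain the actual source of these permissions.

```yaml
# Illustrative only: two rows of Table 1.1 written as RBAC rules.
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: example-workspace-permissions   # hypothetical name
rules:
  # Row "pods/exec": a subresource in the core API group,
  # so apiGroups contains the empty string.
  - apiGroups: [""]
    resources: ["pods/exec"]
    verbs: ["get", "create"]
  # Row "apps/deployments": the deployments resource in the apps API group.
  - apiGroups: ["apps"]
    resources: ["deployments"]
    verbs: ["get", "list", "watch", "create", "patch", "delete"]
```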

For more details about configuring cluster roles for Red Hat OpenShift Dev Spaces users and role-based access control, see the Additional resources section.

1.3. Dev environment isolation

Isolation of the development environments is implemented using OpenShift projects. Every developer has a project in which the following objects are created and managed:

- Cloud Development Environment (CDE) Pods, including the Integrated Development Environment (IDE) server.

- Secrets containing developer credentials, such as a Git token, SSH keys, and a Kubernetes token.

- ConfigMaps with developer-specific configuration, such as the Git name and email.

- Volumes that persist data such as the source code, even when the CDE Pod is stopped.

Access to the resources in a namespace must be limited to the developer owning it. Granting read access to another developer is equivalent to sharing the developer credentials and should be avoided.

1.4. Enhanced authorization

The current trend is to split an infrastructure into several "fit for purpose" clusters instead of having a gigantic monolith OpenShift cluster. A "fit for purpose" cluster is specifically designed and configured to meet the requirements of a particular use case or workload. It is tailored to optimize performance and resource utilization based on the characteristics of the workloads it manages.

For Red Hat OpenShift Dev Spaces, this type of cluster is recommended. However, administrators might still want to provide granular access and restrict the availability of certain functionalities to particular users.

For this purpose, optional properties that you can use to configure granular access for different groups and users are available in the CheCluster Custom Resource:

- allowUsers

- allowGroups

- denyUsers

- denyGroups

The following example shows an access configuration:

    networking:
      auth:
        advancedAuthorization:
          allowUsers:
            - user-a
            - user-b
          denyUsers:
            - user-c
          allowGroups:
            - openshift-group-a
            - openshift-group-b
          denyGroups:
            - openshift-group-c

Users listed in denyUsers or belonging to a group listed in denyGroups cannot use Red Hat OpenShift Dev Spaces and see a warning when they try to access the User Dashboard.

1.5. Authentication

Only authenticated OpenShift users can access Red Hat OpenShift Dev Spaces. The Gateway Pod uses a role-based access control (RBAC) subsystem to determine whether a developer is authorized to access a Cloud Development Environment (CDE) or not.

The CDE Gateway container checks the developer’s Kubernetes roles. If their roles allow access to the CDE Pod, the connection to the development environment is allowed. By default, only the owner of the namespace has access to the CDE Pod.

1.6. Security context and security context constraint

Red Hat OpenShift Dev Spaces adds SETGID and SETUID capabilities to the specification of the CDE Pod container security context:

    "spec": {
      "containers": [
        {
          "securityContext": {
            "allowPrivilegeEscalation": true,
            "capabilities": {
              "add": ["SETGID", "SETUID"],
              "drop": ["ALL", "KILL", "MKNOD"]
            },
            "readOnlyRootFilesystem": false,
            "runAsNonRoot": true,
            "runAsUser": 1001110000
          }
        }
      ]
    }

These capabilities let users build container images from within a CDE.

By default, Red Hat OpenShift Dev Spaces assigns users a specific SecurityContextConstraint (SCC) that allows them to start a Pod with such capabilities. This SCC grants more capabilities than the default restricted SCC but fewer capabilities than the anyuid SCC. This default SCC is pre-created in the OpenShift Dev Spaces namespace and is named container-build.

Setting the following property in the CheCluster Custom Resource prevents assigning extra capabilities and SCC to users:

    spec:
      devEnvironments:
        disableContainerBuildCapabilities: true

1.7. Resource Quotas and Limit Ranges

Resource Quotas and Limit Ranges are Kubernetes features that help prevent resource abuse within a cluster. They set resource consumption constraints for pods and containers. By combining Resource Quotas and Limit Ranges, you can enforce project-specific policies that stop bad actors from consuming excessive resources.

These mechanisms contribute to better resource management, stability, and fairness within an OpenShift cluster. More details about resource quotas and limit ranges are available in the OpenShift documentation.
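
As an illustration, the following sketch combines a ResourceQuota and a LimitRange in one user project. The object names, the project name, and every numeric value are assumptions chosen for the example. Size them from the resource requirements of your own workspaces.

```yaml
# Caps the total resources that all pods in one user project can claim.
apiVersion: v1
kind: ResourceQuota
metadata:
  name: workspace-quota          # hypothetical name
  namespace: user1-devspaces     # placeholder user project
spec:
  hard:
    requests.cpu: "2"
    requests.memory: 4Gi
    limits.cpu: "8"
    limits.memory: 16Gi
    persistentvolumeclaims: "2"
---
# Supplies defaults for containers that declare no requests or limits,
# and bounds what any single container can ask for.
apiVersion: v1
kind: LimitRange
metadata:
  name: workspace-limits         # hypothetical name
  namespace: user1-devspaces     # placeholder user project
spec:
  limits:
    - type: Container
      default:                   # limits applied when a container sets none
        cpu: "1"
        memory: 1Gi
      defaultRequest:            # requests applied when a container sets none
        cpu: 100m
        memory: 256Mi
      max:                       # upper bound for any single container
        cpu: "6"
        memory: 8Gi
```

The quota bounds the project as a whole, while the limit range bounds each container, so a single oversized workspace cannot exhaust the project allowance on its own.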

1.8. Network policies

Network policies provide an additional layer of security by controlling network traffic between pods in a Kubernetes cluster. By default, every pod can communicate with every other pod and service on the cluster.

Implementing network policies allows you to:

- Control ingress and egress traffic to and from workspace pods

- Limit the attack surface by denying unauthorized network access

When configuring network policies for Red Hat OpenShift Dev Spaces, ensure that pods in the OpenShift Dev Spaces namespace can still communicate with pods in user namespaces. This communication is required for proper functionality.

For detailed instructions on implementing network policies with Red Hat OpenShift Dev Spaces, see the procedure for configuring network policies.
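
The following sketch shows one way to keep that communication open while restricting other ingress traffic in a user project. It assumes that OpenShift Dev Spaces is installed in a project named openshift-devspaces and that the policy is created in each user project. The policy name is hypothetical.

```yaml
# Allows ingress to every pod in the user project, but only from pods
# that run in the OpenShift Dev Spaces namespace.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-from-openshift-devspaces   # hypothetical name
  namespace: user1-devspaces             # placeholder user project
spec:
  podSelector: {}                        # empty selector: all pods in the project
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              # Label that Kubernetes sets automatically on each namespace.
              kubernetes.io/metadata.name: openshift-devspaces   # assumed install namespace
```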

1.9. Disconnected environment

An air-gapped, or disconnected, OpenShift cluster is a cluster that is isolated from the internet or any external network. This isolation is often done for security reasons to protect sensitive or critical systems from potential cyber threats. In an air-gapped environment, the cluster cannot access external repositories or registries to download container images, updates, or dependencies.

Red Hat OpenShift Dev Spaces is supported and can be installed in a restricted environment.

1.10. Managing extensions

By default, Red Hat OpenShift Dev Spaces includes the embedded Open VSX registry which contains a limited set of extensions for the Microsoft Visual Studio Code - Open Source editor. Alternatively, cluster administrators can specify a different plugin registry in the Custom Resource, for example the open-vsx.org registry that contains thousands of extensions. They can also build a custom Open VSX registry.

Installing extra extensions increases potential risks. To minimize these risks, ensure that you only install extensions from reliable sources and regularly update them.
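
A minimal sketch of pointing OpenShift Dev Spaces at a different Open VSX registry follows. It assumes a CheCluster named devspaces in the openshift-devspaces project and uses the openVSXURL field of the plug-in registry component. Confirm both the resource name and the field against the chapter on managing IDE extensions.

```yaml
apiVersion: org.eclipse.che/v2
kind: CheCluster
metadata:
  name: devspaces                  # assumed CheCluster name
  namespace: openshift-devspaces   # assumed install namespace
spec:
  components:
    pluginRegistry:
      # URL of the Open VSX registry that workspaces use for extensions.
      openVSXURL: "https://open-vsx.org"
```

Switching to a public registry widens the set of installable extensions, so weigh it against the risk described above.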

1.11. Secrets

Keep sensitive data that is stored as Kubernetes secrets in the users' namespaces confidential, for example personal access tokens (PAT) and SSH keys.

1.12. Git repositories

It is crucial to operate within Git repositories that you are familiar with and that you trust. Before incorporating new dependencies into the repository, verify that they are well-maintained and regularly release updates to address any identified security vulnerabilities in their code.

Additional resources

- Section 6.3, “Provision projects in advance”

- Section 15.1, “Configure cluster roles for OpenShift Dev Spaces users”

- OpenShift role-based access control

- Resource quotas per project

- Limit ranges

- OpenShift networking overview

- Section 12.1, “Configure network policies”

- Section 4.3, “Install OpenShift Dev Spaces in a restricted environment on OpenShift”

- Chapter 19, Manage IDE extensions

Chapter 2. Prepare the installation

Ensure your OpenShift cluster meets the requirements for OpenShift Dev Spaces and install the tools you need for installation.

Review the supported platforms, install the dsc management tool, understand the OpenShift Dev Spaces architecture, and estimate resource requirements for your deployment.

2.1. Supported platforms

OpenShift Dev Spaces is supported on specific OpenShift versions and CPU architectures.

OpenShift Dev Spaces runs on OpenShift 4.16–4.22 on the following CPU architectures:

- AMD64 and Intel 64 (x86_64)

- IBM Z (s390x)

- IBM Power (ppc64le)

- ARMv8 (arm64)

2.2. Install the dsc management tool

Install dsc, the Red Hat OpenShift Dev Spaces command-line management tool, on Linux, macOS, or Windows to start, stop, update, and delete the OpenShift Dev Spaces server.

Prerequisites

- You have a Linux or macOS workstation.

Note: For installing dsc on Windows, see the following pages:

Procedure

- Download the archive from https://developers.redhat.com/products/openshift-dev-spaces/download to a directory such as $HOME.

- Run tar xvzf on the archive to extract the /dsc directory.

- Add the extracted /dsc/bin subdirectory to $PATH.

Verification

- Run dsc to view information about it:

    $ dsc


2.3. OpenShift Dev Spaces architecture overview

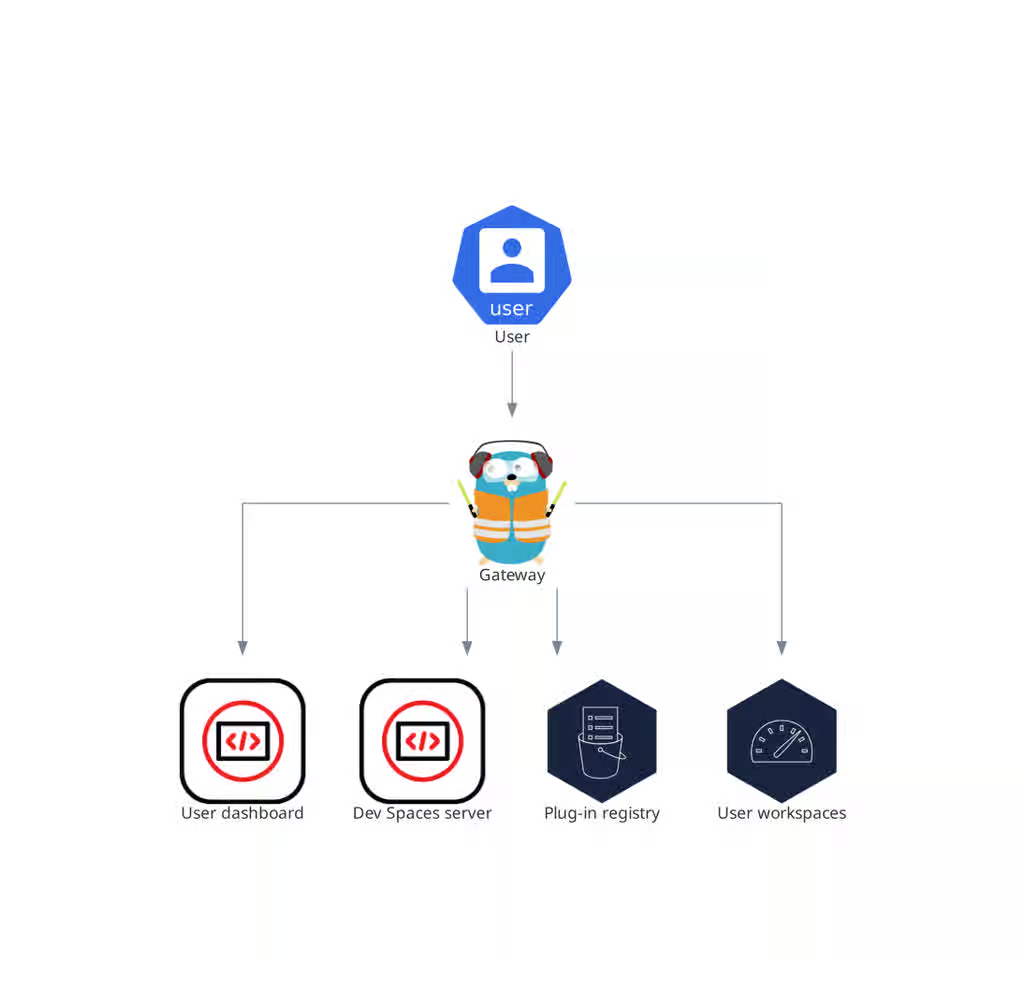

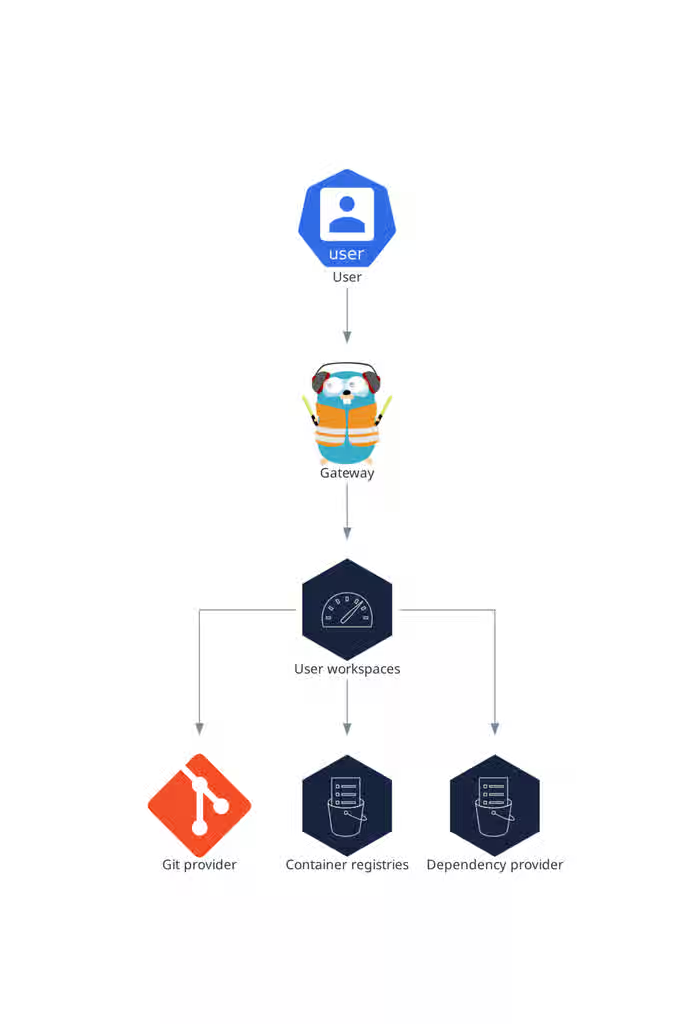

Figure 2.1. High-level OpenShift Dev Spaces architecture with the Dev Workspace operator

The OpenShift Dev Spaces architecture consists of server components, user workspaces, and the Dev Workspace Operator, which together provide cloud-based development environments on OpenShift.

OpenShift Dev Spaces runs on three groups of components:

- OpenShift Dev Spaces server components: Manage user projects and workspaces. The main component is the User dashboard, from which users control their workspaces.

- Dev Workspace operator: Creates and controls the necessary OpenShift objects to run User workspaces, including Pods, Services, and PersistentVolumes.

- User workspaces: Container-based development environments, the Integrated Development Environment (IDE) included.

The role of these OpenShift features is central:

- Dev Workspace Custom Resources: Valid OpenShift objects representing the User workspaces and manipulated by OpenShift Dev Spaces. They are the communication channel for the three groups of components.

- OpenShift role-based access control (RBAC): Controls access to all resources.


2.3.1. Server components

The OpenShift Dev Spaces server components manage multi-tenancy and workspace lifecycle. Understanding these components helps you troubleshoot issues and plan cluster capacity.

Figure 2.2. OpenShift Dev Spaces server components interacting with the Dev Workspace operator

2.3.2. OpenShift Dev Spaces operator

The OpenShift Dev Spaces operator ensures full lifecycle management of the OpenShift Dev Spaces server components.

- CheCluster custom resource definition (CRD): Defines the CheCluster OpenShift object.

- OpenShift Dev Spaces controller: Creates and controls the necessary OpenShift objects to run an OpenShift Dev Spaces instance, such as pods, services, and persistent volumes.

- CheCluster custom resource (CR): On a cluster with the OpenShift Dev Spaces operator, it is possible to create a CheCluster custom resource (CR). The OpenShift Dev Spaces operator ensures the full lifecycle management of the OpenShift Dev Spaces server components on this OpenShift Dev Spaces instance. These components include the Dev Workspace Operator, gateway, user dashboard, OpenShift Dev Spaces server, and plug-in registry.


2.3.3. Dev Workspace operator

The Dev Workspace Operator (DWO) is a dependency of OpenShift Dev Spaces, and is an integral part of how OpenShift Dev Spaces functions. One of DWO’s main responsibilities is to reconcile Dev Workspace custom resources (CR).

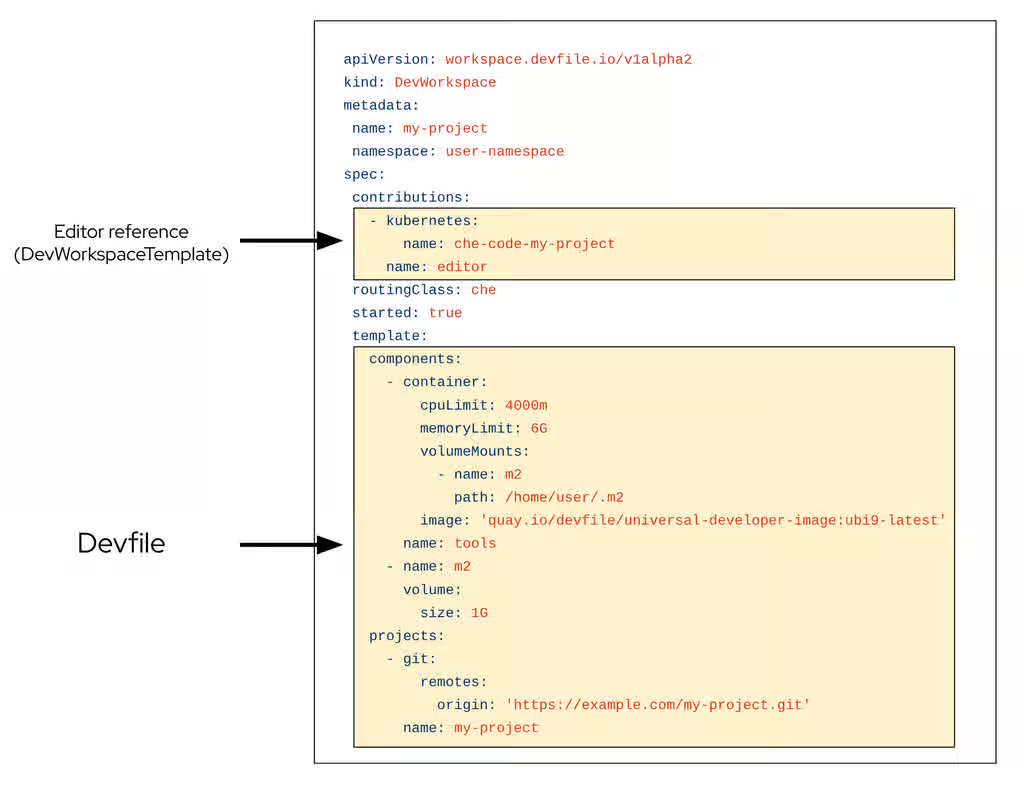

The Dev Workspace CR is an OpenShift resource representation of an OpenShift Dev Spaces workspace. Whenever a user creates a workspace using OpenShift Dev Spaces, the OpenShift Dev Spaces dashboard creates a Dev Workspace CR in the cluster in the background. For every OpenShift Dev Spaces workspace, there is an underlying Dev Workspace CR on the cluster.

Figure 2.3. Example of a Dev Workspace CR in a cluster

When creating a workspace with OpenShift Dev Spaces with a devfile, the Dev Workspace CR contains the devfile details. Additionally, OpenShift Dev Spaces adds the editor definition into the Dev Workspace CR depending on which editor was chosen for the workspace. OpenShift Dev Spaces also adds attributes to the Dev Workspace that further configure the workspace depending on how you configured the CheCluster CR.

A DevWorkspaceTemplate is a custom resource that defines a reusable spec.template for Dev Workspaces.

When a workspace is started, DWO reads the corresponding Dev Workspace CR and creates the necessary resources such as deployments, secrets, configmaps, and routes. As a result, a workspace pod representing the development environment defined in the devfile is created.

2.3.3.1. Custom Resources overview

The following Custom Resource Definitions are provided by the Dev Workspace Operator:

- Dev Workspace

- DevWorkspaceTemplate

- DevWorkspaceOperatorConfig

- DevWorkspaceRouting

2.3.3.2. Dev Workspace

The Dev Workspace custom resource contains details about an OpenShift Dev Spaces workspace. Notably, it contains devfile details and a reference to the editor definition.
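
The following sketch illustrates the general shape of such a custom resource: devfile details under spec.template and an editor reference under spec.contributions. All names, the project, and the image are hypothetical placeholders, and the field layout is an assumption based on the devfile v1alpha2 API rather than an object taken from a real cluster.

```yaml
apiVersion: workspace.devfile.io/v1alpha2
kind: DevWorkspace
metadata:
  name: example-workspace            # hypothetical workspace name
  namespace: user1-devspaces         # placeholder user project
spec:
  started: true
  routingClass: che
  # Reference to the editor definition, held in a DevWorkspaceTemplate.
  contributions:
    - name: editor
      kubernetes:
        name: example-editor-template   # hypothetical DevWorkspaceTemplate name
  # Devfile details copied into the custom resource.
  template:
    components:
      - name: tools
        container:
          image: registry.example.com/tools:latest   # placeholder image
          memoryLimit: 6G
```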

2.3.3.3. DevWorkspaceTemplate

In OpenShift Dev Spaces the DevWorkspaceTemplate custom resource is typically used to define an editor (such as Visual Studio Code - Open Source) for OpenShift Dev Spaces workspaces. You can use this custom resource to define reusable spec.template content that is reused by multiple Dev Workspaces.

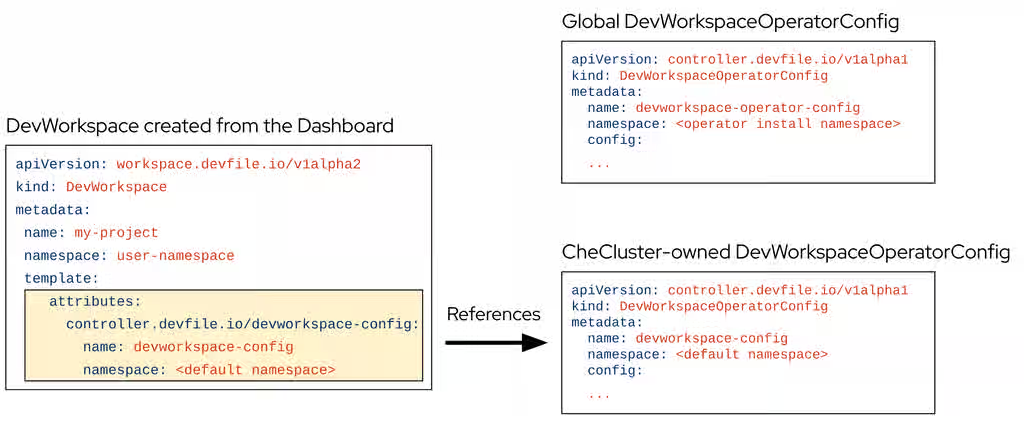

2.3.3.4. DevWorkspaceOperatorConfig

The DevWorkspaceOperatorConfig (DWOC) custom resource defines configuration options for the DWO. There are two different types of DWOC:

- global configuration

- non-global configuration

The global configuration is a DWOC custom resource named devworkspace-operator-config and is usually located in the DWO installation namespace. By default, the global configuration is not created upon installation. Configuration fields set in the global configuration apply to the DWO and all Dev Workspaces. However, the DWOC configuration can be overridden by a non-global configuration.

Any DWOC custom resource other than devworkspace-operator-config is considered a non-global configuration. A non-global configuration does not apply to any Dev Workspace unless the Dev Workspace contains a reference to the DWOC. If the global configuration and the non-global configuration set the same fields, the non-global configuration field takes precedence.

Table 2.1. Global DWOC and OpenShift Dev Spaces-owned DWOC comparison

| | Global DWOC | OpenShift Dev Spaces-owned DWOC |
|---|---|---|
| Resource name | devworkspace-operator-config | devworkspace-config |
| Namespace | DWO installation namespace | OpenShift Dev Spaces installation namespace |
| Default creation | Not created by default upon DWO installation | Created by default on OpenShift Dev Spaces installation |
| Scope | Applies to the DWO itself and all Dev Workspaces managed by DWO | Applies to Dev Workspaces created by OpenShift Dev Spaces |
| Precedence | Overridden by fields set in OpenShift Dev Spaces-owned config | Takes precedence over global config if both define the same field |
| Primary use case | Used to define default, broad settings that apply to DWO in general. | Used to define specific configuration for Dev Workspaces created by OpenShift Dev Spaces |

For example, by default OpenShift Dev Spaces creates and manages a non-global DWOC in the OpenShift Dev Spaces namespace named devworkspace-config. This DWOC contains configuration specific to OpenShift Dev Spaces workspaces, and is maintained by OpenShift Dev Spaces depending on how you configure the CheCluster CR. When OpenShift Dev Spaces creates a workspace, OpenShift Dev Spaces adds a reference to the OpenShift Dev Spaces-owned DWOC with the controller.devfile.io/devworkspace-config attribute.

Figure 2.4. Example of Dev Workspace configuration attribute
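
As a rough sketch of what this reference can look like, the following fragment sets the controller.devfile.io/devworkspace-config attribute on a Dev Workspace. The workspace name and both project names are assumptions, and the name and namespace keys reflect the usual Dev Workspace Operator convention rather than output captured from a cluster.

```yaml
apiVersion: workspace.devfile.io/v1alpha2
kind: DevWorkspace
metadata:
  name: example-workspace            # hypothetical workspace name
  namespace: user1-devspaces         # placeholder user project
spec:
  template:
    attributes:
      # Points the workspace at the OpenShift Dev Spaces-owned DWOC.
      controller.devfile.io/devworkspace-config:
        name: devworkspace-config
        namespace: openshift-devspaces   # assumed install namespace
```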

2.3.3.5. DevWorkspaceRouting

The DevWorkspaceRouting custom resource defines details about the endpoints of a Dev Workspace. Every Dev Workspace has its corresponding DevWorkspaceRouting object that specifies the workspace’s container endpoints. Endpoints defined from the devfile, as well as endpoints defined by the editor definition appear in the DevWorkspaceRouting custom resource.

    apiVersion: controller.devfile.io/v1alpha1
    kind: DevWorkspaceRouting
    metadata:
      annotations:
        controller.devfile.io/devworkspace-started: 'false'
      name: routing-workspaceb14aa33254674065
      labels:
        controller.devfile.io/devworkspace_id: workspaceb14aa33254674065
    spec:
      devworkspaceId: workspaceb14aa33254674065
      endpoints:
        universal-developer-image:
          - attributes:
              cookiesAuthEnabled: true
              discoverable: false
              type: main
              urlRewriteSupported: true
            exposure: public
            name: che-code
            protocol: https
            secure: true
            targetPort: 3100
      podSelector:
        controller.devfile.io/devworkspace_id: workspaceb14aa33254674065
      routingClass: che
    status:
      exposedEndpoints:
        ...

2.3.3.6. Dev Workspace Operator operands

The Dev Workspace Operator has two operands:

- controller deployment

- webhook deployment

The following command lists the operand pods:

    $ oc get pods -l 'app.kubernetes.io/part-of=devworkspace-operator' -o custom-columns=NAME:.metadata.name -n openshift-operators
    NAME
    devworkspace-controller-manager-66c6f674f5-l7rhj
    devworkspace-webhook-server-d4958d9cd-gh7vr
    devworkspace-webhook-server-d4958d9cd-rfvj6

where:

- devworkspace-controller-manager-*: The Dev Workspace controller pod, which is responsible for reconciling custom resources.

- devworkspace-webhook-server-*: The Dev Workspace operator webhook server pods.

2.3.3.7. Configuring the devworkspace-controller-manager deployment

You can configure the devworkspace-controller-manager pod in the Dev Workspace Operator Subscription object:

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: devworkspace-operator
      namespace: openshift-operators
    spec:
      config:
        affinity:
          nodeAffinity: ...
          podAffinity: ...
        resources:
          limits:
            memory: ...
            cpu: ...
          requests:
            memory: ...
            cpu: ...

2.3.3.8. Configuring the devworkspace-webhook-server deployment

You can configure the devworkspace-webhook-server deployment in the global DWOC:

    apiVersion: controller.devfile.io/v1alpha1
    kind: DevWorkspaceOperatorConfig
    metadata:
      name: devworkspace-operator-config
      namespace: <DWO install namespace>
    config:
      webhooks:
        nodeSelector: <map[string]string>
        replicas: <int>
        tolerations: <[]corev1.Toleration>

2.3.4. OpenShift Dev Spaces gateway

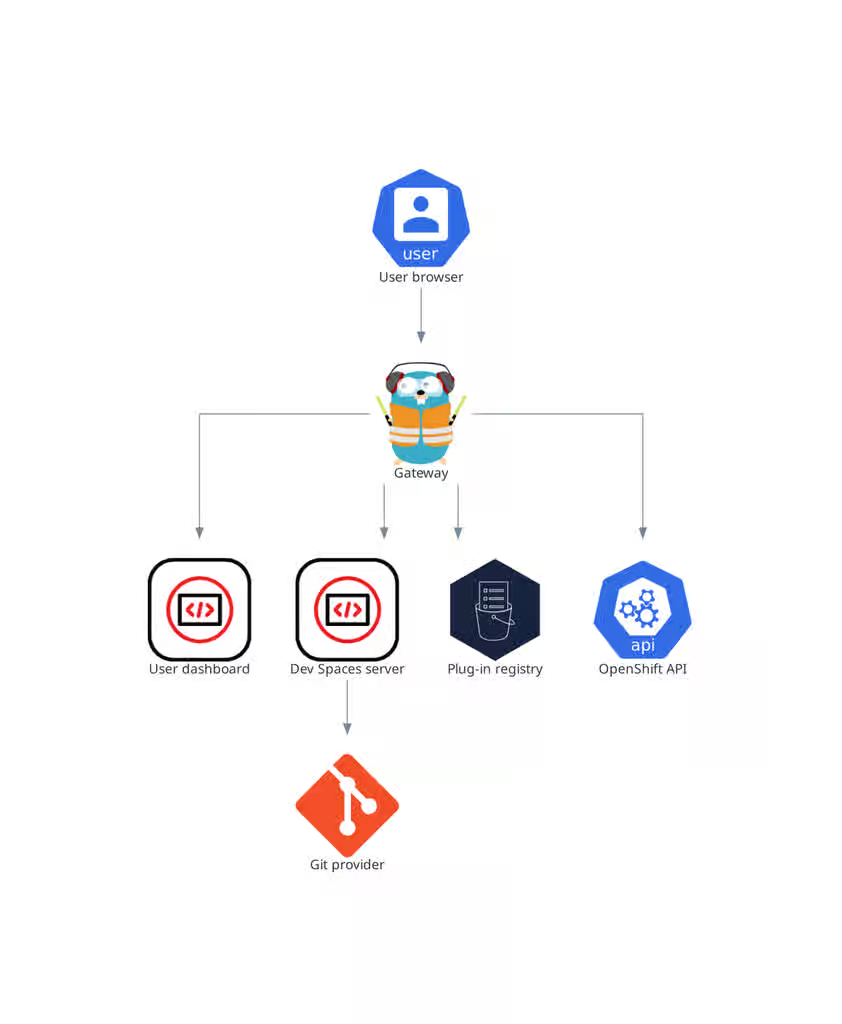

The OpenShift Dev Spaces gateway routes requests, authenticates users, and applies access control policies for OpenShift Dev Spaces resources.

The OpenShift Dev Spaces gateway has the following roles:

- Routing requests. It uses Traefik.

- Authenticating users with OpenID Connect (OIDC). It uses OAuth2 Proxy.

- Applying OpenShift Role Based Access Control (RBAC) policies to control access to any OpenShift Dev Spaces resource. It uses kube-rbac-proxy.

The OpenShift Dev Spaces operator manages it as the che-gateway Deployment.

It controls access to the user dashboard, the OpenShift Dev Spaces server, the plug-in registry, and user workspaces.

Figure 2.5. OpenShift Dev Spaces gateway interactions with other components

Additional resources

- Chapter 15, Manage identities and authorizations

- Traefik

- OAuth2 Proxy

- kube-rbac-proxy

- Section 2.3.5, “User dashboard”

- Section 2.3.6, “OpenShift Dev Spaces server”

- Section 2.3.7, “Plug-in registry”

- Section 2.4, “User workspaces”

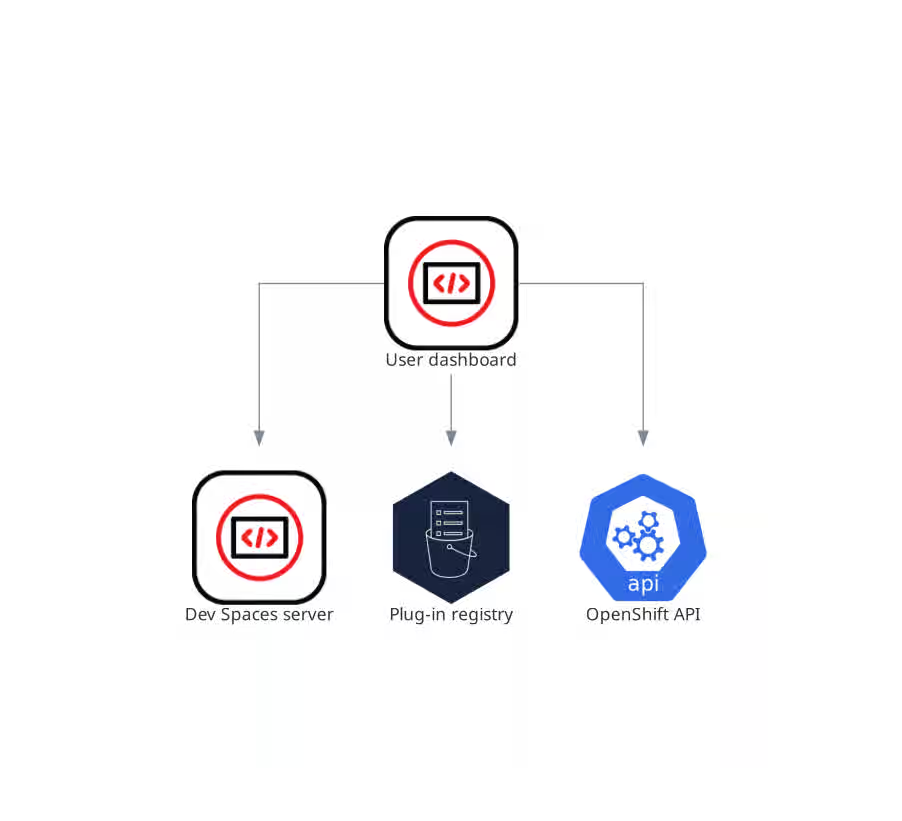

2.3.5. User dashboard

The user dashboard is the landing page of Red Hat OpenShift Dev Spaces, providing a central interface for users to create, access, and manage their workspaces.

It needs access to the OpenShift Dev Spaces server, the plug-in registry, and the OpenShift Application Programming Interface (API).

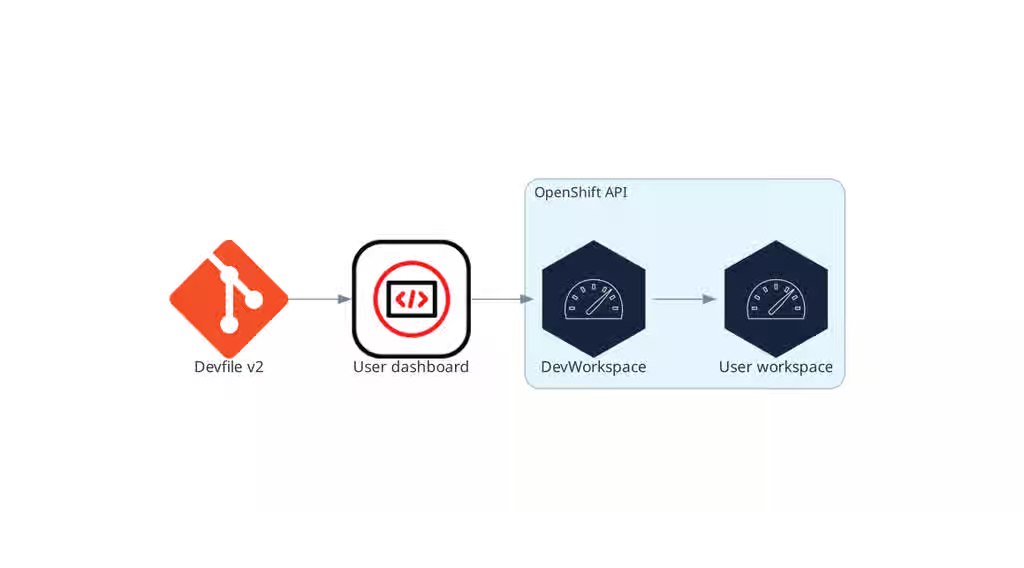

Figure 2.6. User dashboard interactions with other components

When the user requests the user dashboard to start a workspace, the user dashboard executes this sequence of actions:

- Sends the repository URL to the OpenShift Dev Spaces server and expects a devfile in return, when the user is creating a workspace from a remote devfile.

- Reads the devfile describing the workspace.

- Collects the additional metadata from the plug-in registry.

- Converts the information into a Dev Workspace Custom Resource.

- Creates the Dev Workspace Custom Resource in the user project using the OpenShift API.

- Watches the Dev Workspace Custom Resource status.

- Redirects the user to the running workspace IDE.

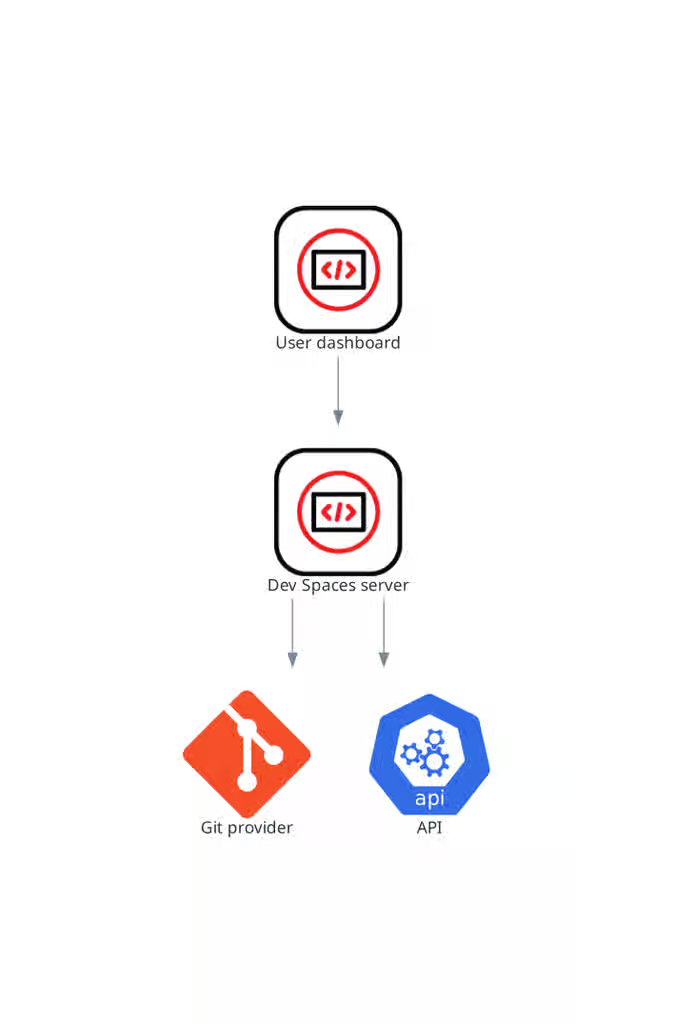

2.3.6. OpenShift Dev Spaces server

The OpenShift Dev Spaces server is a Java web service that manages user namespaces, provisions secrets and config maps, and integrates with Git service providers.

The OpenShift Dev Spaces server main functions are:

- Creating user namespaces.

- Provisioning user namespaces with required secrets and config maps.

- Integrating with Git service providers to fetch and validate devfiles and to handle authentication.

The OpenShift Dev Spaces server is a Java web service exposing a Hypertext Transfer Protocol (HTTP) REST API and needs access to:

- Git service providers

- OpenShift API

Figure 2.7. OpenShift Dev Spaces server interactions with other components


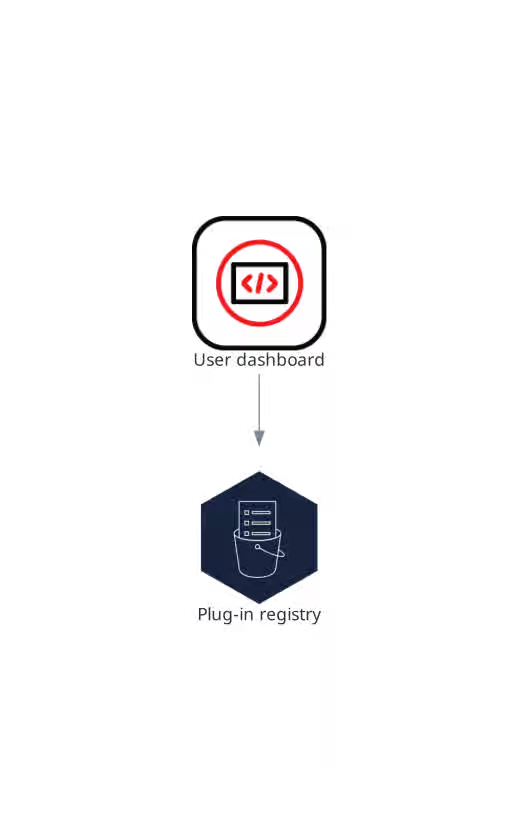

2.3.7. Plug-in registry

Each OpenShift Dev Spaces workspace starts with a specific editor and set of associated extensions. The OpenShift Dev Spaces plug-in registry provides the list of available editors and editor extensions. A Devfile v2 describes each editor or extension.

The user dashboard reads the content of the registry.

Figure 2.8. Plugin registries interactions with other components

2.4. User workspaces

Figure 2.9. User workspaces interactions with other components

User workspaces provide browser-based IDEs running in OpenShift containers, giving developers on-demand access to editors, language servers, debugging tools, and application runtimes without local setup.

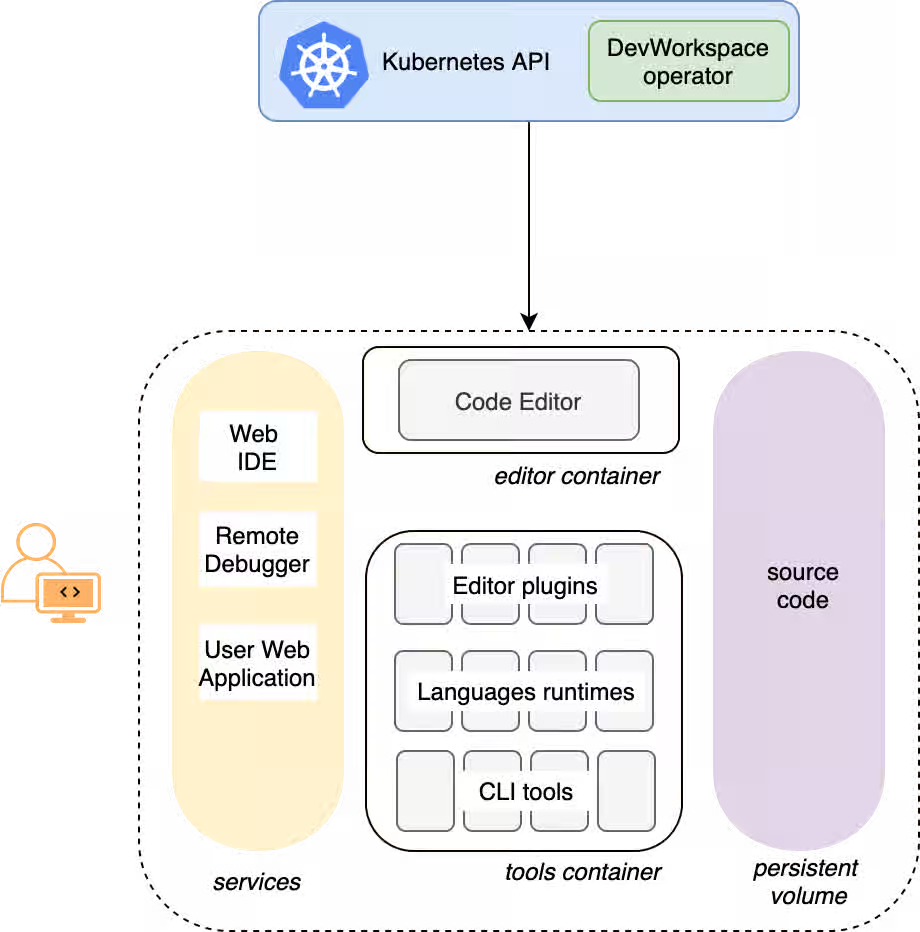

A User workspace is a web application. It consists of microservices running in containers providing all the services of a modern IDE running in your browser:

- Editor

- Language auto-completion

- Language server

- Debugging tools

- Plug-ins

- Application runtimes

A workspace is one OpenShift Deployment containing the workspace containers and enabled plugins, plus related OpenShift components:

- Containers

- ConfigMaps

- Services

- Endpoints

- Ingresses or Routes

- Secrets

- Persistent Volumes (PV)

An OpenShift Dev Spaces workspace contains the source code of the projects, persisted in an OpenShift Persistent Volume (PV). Microservices have read/write access to this shared directory.

Use the devfile v2 format to specify the tools and runtime applications of an OpenShift Dev Spaces workspace.

The following diagram shows one running OpenShift Dev Spaces workspace and its components.

Figure 2.10. OpenShift Dev Spaces workspace components

In the diagram, there is one running workspace.

2.5. Calculate OpenShift Dev Spaces resource requirements

Calculate the CPU and memory resource consumption for the OpenShift Dev Spaces Operator, Dev Workspace Controller, and user workspaces to right-size your cluster for the expected number of concurrent users.

The following link to an example devfile is a pointer to material from the upstream community. This material represents the very latest available content and the most recent best practices. These tips have not yet been vetted by Red Hat's QE department, and they have not yet been proven by a wide user group. Use this information cautiously. It is best used for educational and developmental purposes rather than production purposes.

Prerequisites

- You have a planned or existing OpenShift Dev Spaces deployment on OpenShift Container Platform 4.16 or later.

- You have the devfiles that define the development environments for your users.

- You have an estimate of the number of concurrent workspaces that your users will run.

Procedure

- Identify the workspace resource requirements from the devfile components section. The following example uses the Quarkus API example devfile.

The tools component of the devfile defines the following requests and limits:

    memoryLimit: 6G
    memoryRequest: 512M
    cpuRequest: 1000m
    cpuLimit: 4000m

During workspace startup, an internal che-gateway container is implicitly provisioned with the following requests and limits:

    memoryLimit: 256M
    memoryRequest: 64M
    cpuRequest: 50m
    cpuLimit: 500m

Additional memory and CPU are added implicitly for the Visual Studio Code - Open Source ("Code - OSS") editor:

    memoryLimit: 1024M
    memoryRequest: 256M
    cpuRequest: 30m
    cpuLimit: 500m

Additional memory and CPU are added implicitly for a JetBrains IDE, for example IntelliJ IDEA Ultimate:

    memoryLimit: 6144M
    memoryRequest: 2048M
    cpuRequest: 1500m
    cpuLimit: 2000m

- Calculate the sums of the resources required for each workspace. If you intend to use multiple devfiles, repeat this calculation for every expected devfile.

Table 2.2. Workspace requirements for the example devfile in the previous step

| Purpose | Pod | Container name | Memory limit | Memory request | CPU limit | CPU request |
|---|---|---|---|---|---|---|
| Developer tools | workspace | tools | 6 GiB | 512 MiB | 4000 m | 1000 m |
| OpenShift Dev Spaces gateway | workspace | che-gateway | 256 MiB | 64 MiB | 500 m | 50 m |
| Visual Studio Code | workspace | tools | 1024 MiB | 256 MiB | 500 m | 30 m |
| Total | | | 7.3 GiB | 832 MiB | 5000 m | 1080 m |

- Multiply the resources calculated per workspace by the number of workspaces that you expect all of your users to run simultaneously.

- Calculate the sums of the requirements for the OpenShift Dev Spaces Operator, Operands, and Dev Workspace Controller.

Table 2.3. Default requirements for the OpenShift Dev Spaces Operator, Operands, and Dev Workspace Controller

| Purpose | Pod name | Container names | Memory limit | Memory request | CPU limit | CPU request |
|---|---|---|---|---|---|---|
| OpenShift Dev Spaces operator | devspaces-operator | devspaces-operator | 256 MiB | 64 MiB | 500 m | 100 m |
| OpenShift Dev Spaces Server | devspaces | devspaces-server | 1 GiB | 512 MiB | 1000 m | 100 m |
| OpenShift Dev Spaces Dashboard | devspaces-dashboard | devspaces-dashboard | 256 MiB | 32 MiB | 500 m | 100 m |
| OpenShift Dev Spaces Gateway | devspaces-gateway | traefik | 4 GiB | 128 MiB | 1000 m | 100 m |
| OpenShift Dev Spaces Gateway | devspaces-gateway | configbump | 256 MiB | 64 MiB | 500 m | 50 m |
| OpenShift Dev Spaces Gateway | devspaces-gateway | oauth-proxy | 512 MiB | 64 MiB | 500 m | 100 m |
| OpenShift Dev Spaces Gateway | devspaces-gateway | kube-rbac-proxy | 512 MiB | 64 MiB | 500 m | 100 m |
| Plugin registry | plugin-registry | plugin-registry | 256 MiB | 32 MiB | 500 m | 100 m |
| Dev Workspace Controller Manager | devworkspace-controller-manager | devworkspace-controller | 5 GiB | 100 MiB | 3000 m | 250 m |
| Dev Workspace Controller Manager | devworkspace-controller-manager | kube-rbac-proxy | N/A | N/A | N/A | N/A |
| Dev Workspace Operator Catalog | devworkspace-operator-catalog | registry-server | N/A | 50 MiB | N/A | 10 m |
| Dev Workspace Webhook Server | devworkspace-webhook-server | webhook-server | 300 MiB | 20 MiB | 200 m | 100 m |
| Dev Workspace Webhook Server | devworkspace-webhook-server | kube-rbac-proxy | N/A | N/A | N/A | N/A |
| Total | | | 12.3 GiB | 1.1 GiB | 8.2 cores | 1.1 cores |

- Add the workspace resources from step 3 and the operator resources from step 4 to determine total cluster resource requirements.

Verification

- Verify that the total resource requirements account for all OpenShift Dev Spaces Operator components, Dev Workspace Controller components, and the expected number of concurrent workspaces.

Chapter 3. OpenShift Dev Spaces scalability

Scaling Cloud Development Environments (CDEs) to thousands of concurrent workspaces on Kubernetes presents significant infrastructure and performance challenges.

Such a scale imposes high infrastructure demands and introduces potential bottlenecks that can impact performance and stability. Addressing these challenges requires meticulous planning, strategic architectural choices, monitoring, and continuous optimization.

CDE workloads are particularly complex to scale. The underlying IDE solutions, such as Visual Studio Code - Open Source ("Code - OSS") or JetBrains Gateway, are designed as single-user applications, not as multitenant services.

3.1. Resource quantity and object maximums

While there is no strict limit on the number of resources in a Kubernetes cluster, there are certain considerations for large clusters to remember.

OpenShift Container Platform, a certified distribution of Kubernetes, provides a set of tested maximums for various resources. These maximums can serve as an initial guideline for planning your environment:

Table 3.1. OpenShift Container Platform tested cluster maximums

| Resource type | Tested maximum |
|---|---|
| Number of nodes | 2000 |
| Number of pods | 150000 |
| Number of pods per node | 2500 |
| Number of namespaces | 10000 |
| Number of services | 10000 |
| Number of secrets | 80000 |
| Number of config maps | 90000 |

For more details on OpenShift Container Platform tested object maximums, see the OpenShift Container Platform scalability and performance documentation.

For example, it is generally not recommended to have more than 10,000 namespaces due to potential performance and management overhead. In Red Hat OpenShift Dev Spaces, each user is allocated a namespace. If you expect the user base to be large, consider spreading workloads across multiple "fit-for-purpose" clusters and potentially using solutions for multi-cluster orchestration.
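As a rough illustration of the namespace guideline above, the following Python sketch estimates how many clusters a given user base might need. It assumes one namespace per user, as described above. The function name and the `reserved_namespaces` allowance for platform and other namespaces are hypothetical; replace them with figures from your own environment.

```python
import math

# Tested maximum for namespaces from Table 3.1; a guideline, not a hard limit.
NAMESPACE_GUIDELINE = 10_000


def clusters_needed(expected_users: int, reserved_namespaces: int = 500) -> int:
    """Estimate how many clusters keep each one under the namespace guideline.

    Assumes one namespace per user. reserved_namespaces is a hypothetical
    allowance for platform and non-Dev Spaces namespaces on each cluster.
    """
    usable = NAMESPACE_GUIDELINE - reserved_namespaces
    if usable <= 0:
        raise ValueError("reserved_namespaces must be below the guideline")
    # Always plan for at least one cluster, even for a very small user base.
    return max(1, math.ceil(expected_users / usable))


# Example: 25,000 expected users, 9,500 usable namespaces per cluster.
print(clusters_needed(25_000))
```

The result is only a starting point for capacity planning; other tested maximums, such as pods or secrets, might be reached before the namespace guideline.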

3.2. Resource requirements

When deploying Red Hat OpenShift Dev Spaces on Kubernetes, accurately calculate the resource requirements for each CDE, including memory and CPU or GPU needs. These requirements determine the right sizing of the cluster. In general, a CDE cannot be larger than the worker node that it runs on.

The resource requirements for CDEs can vary significantly based on the specific workloads and configurations. A simple CDE might require only a few hundred megabytes of memory. A more complex one might need several gigabytes of memory and multiple CPU cores.

For details about calculating resource requirements, see the procedure for calculating OpenShift Dev Spaces resource requirements.

3.3. Using etcd

The primary datastore of Kubernetes cluster configuration and state is etcd. It holds information about nodes, pods, services, and custom resources.

As a distributed key-value store, etcd does not scale well past a certain threshold. As the size of etcd grows, so does the load on the cluster, risking its stability.

The default etcd size is 2 GB, and the recommended maximum is 8 GB. Exceeding the maximum limit can make the Kubernetes cluster unstable and unresponsive. Even though the data stored in a ConfigMap cannot exceed 1 MiB by design, a few thousand relatively large ConfigMap objects can overload etcd storage.

3.4. Object size as a factor

The size of the objects stored in etcd is also a critical factor. Each object consumes space, and as the number of objects increases, the overall size of etcd grows. The larger the object, the more space it takes. For example, etcd can be overloaded with only a few thousand large Kubernetes objects.

In the context of Red Hat OpenShift Dev Spaces, by default the Operator creates and manages the 'ca-certs-merged' ConfigMap, which contains the Certificate Authorities (CAs) bundle, in every user namespace. With a large number of Transport Layer Security (TLS) certificates in the cluster, this results in additional etcd usage.
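To get a feeling for the effect of one ConfigMap per user namespace, the following Python sketch multiplies a ConfigMap size by the number of namespaces and compares the result with the recommended etcd maximum mentioned earlier. The namespace count and the bundle size are hypothetical inputs, and the calculation ignores etcd revision history and other overhead, so treat the result as a lower bound.

```python
ETCD_RECOMMENDED_MAX_GB = 8.0  # recommended maximum stated in this chapter


def configmap_footprint_gb(namespaces: int, configmap_kib: float) -> float:
    """Approximate etcd space (decimal GB) used by one ConfigMap per namespace."""
    total_bytes = namespaces * configmap_kib * 1024
    return total_bytes / 1e9


# Hypothetical inputs: 8,000 user namespaces, a 512 KiB merged CA bundle in each.
footprint = configmap_footprint_gb(8_000, 512)
share = footprint / ETCD_RECOMMENDED_MAX_GB
print(f"{footprint:.2f} GB, {share:.0%} of the recommended maximum")
```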

To disable mounting the CA bundle by using the ConfigMap under the /etc/pki/ca-trust/extracted/pem path, configure the CheCluster Custom Resource by setting the disableWorkspaceCaBundleMount property to true. With this configuration, only custom certificates are mounted under the path /public-certs:

    spec:
      devEnvironments:
        trustedCerts:
          disableWorkspaceCaBundleMount: true

3.5. Dev Workspace objects

For large Kubernetes deployments, particularly those involving a high number of custom resources such as DevWorkspace objects, which represent CDEs, etcd can become a significant performance bottleneck.

Based on load testing with 6,000 DevWorkspace objects, etcd storage consumption was approximately 2.5 GB.
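The load-test figure above can be turned into a first-order estimate for other object counts. The Python sketch below extrapolates linearly from that single data point, which is an assumption: real consumption depends on the size of each DevWorkspace and on everything else stored in etcd. The function names are illustrative.

```python
import math

# Single data point from the load test cited above.
TESTED_OBJECTS = 6_000
TESTED_ETCD_GB = 2.5


def estimate_etcd_gb(devworkspaces: int) -> float:
    """Linearly extrapolate etcd usage (GB) from the load-test data point."""
    return devworkspaces * TESTED_ETCD_GB / TESTED_OBJECTS


def max_devworkspaces(etcd_budget_gb: float) -> int:
    """Largest object count whose extrapolated usage fits in the given budget."""
    return math.floor(etcd_budget_gb * TESTED_OBJECTS / TESTED_ETCD_GB)


print(estimate_etcd_gb(12_000))   # extrapolated usage for twice the tested count
print(max_devworkspaces(8.0))     # objects that fit if the whole 8 GB were available
```

Because etcd also stores nodes, pods, secrets, and other resources, only part of the recommended maximum is realistically available for DevWorkspace objects.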

Starting from Dev Workspace Operator version 0.34.0, you can configure a pruner that automatically cleans up DevWorkspace objects that were not in use for a certain period of time. To set the pruner up, configure the DevWorkspaceOperatorConfig object as follows:

    apiVersion: controller.devfile.io/v1alpha1
    kind: DevWorkspaceOperatorConfig
    metadata:
      name: devworkspace-operator-config
      namespace: crw
    config:
      workspace:
        cleanupCronJob:
          enabled: true
          dryRun: false
          retainTime: 2592000
          schedule: "0 0 1 * *"

- retainTime: By default, if a workspace was not started for more than 30 days (2592000 seconds), it is marked for deletion.

- schedule: By default, the pruner runs once per month.
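The retainTime value is expressed in seconds. If you want a different retention period, a small helper such as the following Python sketch converts days to the value to place in the configuration. The helper name is hypothetical and is not part of the Dev Workspace Operator.

```python
from datetime import timedelta


def retain_time_seconds(days: int) -> int:
    """Convert a retention period in days to seconds for the retainTime field."""
    return int(timedelta(days=days).total_seconds())


# 30 days corresponds to the value used in the example configuration.
print(retain_time_seconds(30))
```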

3.6. OLMConfig

When an Operator is installed by the Operator Lifecycle Manager (OLM), a stripped-down copy of its ClusterServiceVersion (CSV) is created in every namespace the Operator watches. These "Copied CSVs" communicate which controllers are reconciling resource events in a given namespace.

On large clusters with hundreds or thousands of namespaces, Copied CSVs consume an unsustainable amount of resources, including OLM memory, etcd storage, and network bandwidth. To eliminate the CSVs copied to every namespace, configure the OLMConfig object:

    apiVersion: operators.coreos.com/v1
    kind: OLMConfig
    metadata:
      name: cluster
    spec:
      features:
        disableCopiedCSVs: true

Additional information about the disableCopiedCSVs feature is available in its original enhancement proposal.

In clusters with many namespaces and cluster-wide Operators, Copied CSVs increase etcd storage usage and memory consumption. Disabling Copied CSVs significantly reduces the data stored in etcd and improves cluster performance and stability.

Disabling Copied CSVs also reduces the memory footprint of OLM, as it no longer maintains these additional resources.

For more details about disabling Copied CSVs, see the OLM documentation.

3.7. Cluster Autoscaling

Although cluster autoscaling is a powerful Kubernetes feature, you cannot always rely on it. Consider predictive scaling by analyzing load data to detect daily or weekly usage patterns.

If your workloads follow a pattern with dramatic peaks throughout the day, provision worker nodes accordingly. For example, if workspaces increase during business hours and decrease during off-hours, predictive scaling adjusts the number of worker nodes. This ensures enough resources are available during peak load while minimizing costs during off-peak hours.
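As a sketch of how observed load data could feed such a plan, the following Python example converts hourly workspace counts into worker node counts. All numbers (workspaces per hour, memory per workspace, allocatable memory per node, and headroom) are hypothetical, and the example sizes by memory only; a real plan would also consider CPU and node startup time.

```python
import math


def nodes_for_load(workspaces: int, mem_per_workspace_gib: float,
                   node_allocatable_gib: float, headroom: float = 0.25) -> int:
    """Worker nodes needed for a number of concurrent workspaces, sized by memory."""
    needed_gib = workspaces * mem_per_workspace_gib * (1 + headroom)
    return math.ceil(needed_gib / node_allocatable_gib)


# Hypothetical observed peaks: hour of day -> concurrent workspaces.
observed = {3: 40, 9: 400, 14: 380, 22: 60}

# Assumed 4 GiB per workspace and 64 GiB allocatable memory per worker node.
plan = {hour: nodes_for_load(count, 4, 64) for hour, count in observed.items()}
print(plan)
```

A plan like this can be applied ahead of the expected peak, so that nodes are already available when workspaces start.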

You can also use open-source solutions such as Karpenter for configuration and lifecycle management of the worker nodes. Karpenter can dynamically provision and optimize worker nodes based on the specific requirements of the workloads. This helps improve resource utilization and reduce costs.

3.8. Multi-cluster

By design, Red Hat OpenShift Dev Spaces is not multi-cluster aware. You can only have one instance per cluster.

However, you can run Red Hat OpenShift Dev Spaces in a multi-cluster environment by deploying Red Hat OpenShift Dev Spaces in each cluster. Use a load balancer or Domain Name System (DNS)-based routing to direct traffic to the appropriate instance. This approach distributes the workload across clusters and provides redundancy in case of cluster failures.

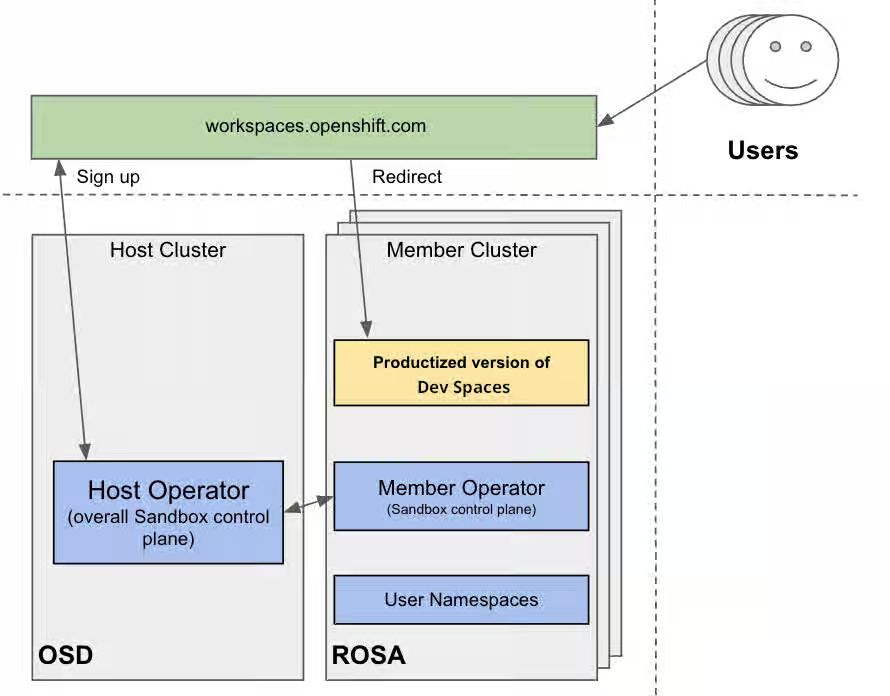

3.9. Developer Sandbox example

You can test running OpenShift Dev Spaces in a multi-cluster environment by using the Developer Sandbox, a free trial environment by Red Hat.

From an infrastructure perspective, the Developer Sandbox consists of multiple Red Hat OpenShift Service on AWS (ROSA) clusters. On each cluster, the productized version of Red Hat OpenShift Dev Spaces is installed and configured using Argo CD. The workspaces.openshift.com URL is used as a single entry point to the Red Hat OpenShift Dev Spaces instances across clusters.

Figure 3.1. Developer Sandbox multi-cluster architecture

You can find implementation details about the multicluster redirector in the crw-multicluster-redirector GitHub repository.

The multi-cluster architecture of workspaces.openshift.com is part of the Developer Sandbox. It is a Developer Sandbox-specific solution that cannot be reused as-is in other environments. However, you can use it as a reference for implementing a similar solution tailored to your own multi-cluster needs.

3.10. The multicluster redirector solution for OpenShift Container Platform

Red Hat offers an open-source, Quarkus-based service that acts as a single gateway for developers. This service automatically redirects users to the correct Red Hat OpenShift Dev Spaces instance on the appropriate cluster based on their OpenShift Container Platform group membership. The community-supported version is available in the devspaces-multicluster-redirector GitHub repository.

3.11. Architecture and requirements

A critical requirement for the multicluster redirector is that all users are provisioned to the host cluster where the redirector is deployed. Users authenticate through the OAuth flow of this cluster, even if they never run workloads there. The host cluster’s OpenShift Container Platform groups determine the routing logic. See the devspaces-multicluster-redirector documentation for deployment instructions.

3.12. Configuration

The routing configuration uses a ConfigMap that contains JSON to map OpenShift Container Platform groups to Red Hat OpenShift Dev Spaces URLs. The redirector reads this ConfigMap to update its routing tables in real time without requiring restarts.

3.13. Operational flow

The routing process follows these steps:

- Authenticate by using OAuth through a proxy sidecar.

- Pass identity and group information through HTTP headers.

- Verify group memberships by using OpenShift Container Platform API queries.

- Determine the appropriate Red Hat OpenShift Dev Spaces URL by using a mapping lookup.

- Redirect the user to the designated cluster instance.

If users belong to multiple OpenShift Container Platform groups, they can choose their desired Red Hat OpenShift Dev Spaces instance from a selection dashboard.
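The mapping lookup and the multiple-group case can be sketched as follows in Python. This is not the redirector's implementation, which is a Quarkus-based service. The JSON layout (group name to URL), the function names, and the example URLs are all hypothetical; see the devspaces-multicluster-redirector documentation for the actual format.

```python
import json


def resolve_instances(mapping_json: str, user_groups: list[str]) -> list[str]:
    """Return the distinct instance URLs mapped to the user's groups, in order."""
    mapping = json.loads(mapping_json)
    urls: list[str] = []
    for group in user_groups:
        url = mapping.get(group)
        if url and url not in urls:
            urls.append(url)
    return urls


def route(mapping_json: str, user_groups: list[str]) -> tuple[str, list[str]]:
    """Decide what to do with a user: deny, redirect, or offer a choice."""
    urls = resolve_instances(mapping_json, user_groups)
    if not urls:
        return ("deny", [])
    if len(urls) == 1:
        return ("redirect", urls)
    return ("choose", urls)  # corresponds to the selection dashboard


# Hypothetical mapping, as it might be stored in the ConfigMap.
MAPPING = json.dumps({
    "team-a": "https://devspaces.cluster-a.example.com",
    "team-b": "https://devspaces.cluster-b.example.com",
})

print(route(MAPPING, ["team-a", "unrelated-group"]))
print(route(MAPPING, ["team-a", "team-b"]))
```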

Additional resources

- Running at scale

- Enterprise multi-cluster scalability

- Kubernetes

- Visual Studio Code - Open Source ("Code - OSS")

- JetBrains Gateway

- Considerations for large clusters

- "Scalability, with Wojciech Tyczynski" episode of Kubernetes Podcast

- OpenShift Container Platform

- OpenShift Container Platform tested object maximums

- Section 2.5, “Calculate OpenShift Dev Spaces resource requirements”

- etcd

- Operator Lifecycle Manager (OLM)

- OLM toggle Copied CSVs enhancement proposal

- Disabling Copied CSVs in OLM

- Karpenter

- Developer Sandbox

- Red Hat OpenShift Service on AWS (ROSA)

- Argo CD

- workspaces.openshift.com

- crw-multicluster-redirector GitHub repository

- devspaces-multicluster-redirector GitHub repository

Chapter 4. Install Red Hat OpenShift Dev Spaces

Install Red Hat OpenShift Dev Spaces on an OpenShift cluster by using the command-line interface (CLI) or the web console.

You can deploy only one instance of OpenShift Dev Spaces per cluster.

4.1. Install Dev Spaces on OpenShift using CLI

Install OpenShift Dev Spaces on OpenShift by using the dsc CLI management tool to deploy a new instance.

Prerequisites

- You have an OpenShift Container Platform 4.22 or later cluster.

- You have an active oc session with administrative permissions to the OpenShift cluster. See Getting started with the OpenShift CLI.

- You have the dsc management tool installed. See Section 2.2, “Install the dsc management tool”.

Procedure

Optional: If you previously deployed OpenShift Dev Spaces on this OpenShift cluster, ensure that the previous OpenShift Dev Spaces instance is removed:

$ dsc server:delete

Create the OpenShift Dev Spaces instance:

$ dsc server:deploy --platform openshift

Verification

Verify the OpenShift Dev Spaces instance status:

$ dsc server:status

Navigate to the OpenShift Dev Spaces cluster instance:

$ dsc dashboard:open

4.2. Install Dev Spaces on OpenShift using the web console

Install OpenShift Dev Spaces on OpenShift through the web console by deploying the Operator from OperatorHub and creating a CheCluster instance.

Prerequisites

- You have an OpenShift web console session as a cluster administrator. See Accessing the web console.

- You have an active oc session with administrative permissions to the OpenShift cluster. See Getting started with the OpenShift CLI.

- For a repeat installation: you have uninstalled the previous OpenShift Dev Spaces instance according to Chapter 24, Uninstall OpenShift Dev Spaces.

Procedure

- In the Administrator view of the OpenShift web console, go to Operators → OperatorHub and search for Red Hat OpenShift Dev Spaces. Install the Red Hat OpenShift Dev Spaces Operator.

Important: The Red Hat OpenShift Dev Spaces Operator depends on the Dev Workspace Operator. If you install the Red Hat OpenShift Dev Spaces Operator manually to a non-default namespace, ensure that the Dev Workspace Operator is also installed in the same namespace. The Operator Lifecycle Manager installs the Dev Workspace Operator as a dependency within the Red Hat OpenShift Dev Spaces Operator namespace. If the Dev Workspace Operator is already installed in a different namespace, two conflicting installations can result.

Important: If you want to onboard Web Terminal Operator on the cluster, use the same installation namespace as the Red Hat OpenShift Dev Spaces Operator. Both operators depend on the Dev Workspace Operator, so all three must be installed in the same namespace.

- Create the openshift-devspaces project in OpenShift as follows:

$ oc create namespace openshift-devspaces

- Go to Operators → Installed Operators → Red Hat OpenShift Dev Spaces instance Specification → Create CheCluster → YAML view.

- In the YAML view, replace namespace: openshift-operators with namespace: openshift-devspaces. Select Create.

Verification

- In Red Hat OpenShift Dev Spaces instance Specification, go to devspaces, landing on the Details tab.

- Under Message, check that there is None, which means no errors.

- Under Red Hat OpenShift Dev Spaces URL, wait until the URL of the OpenShift Dev Spaces instance appears, and then open the URL to check the OpenShift Dev Spaces dashboard.

- In the Resources tab, view the resources for the OpenShift Dev Spaces deployment and their status.

4.3. Install OpenShift Dev Spaces in a restricted environment on OpenShift

Install OpenShift Dev Spaces on an air-gapped OpenShift cluster by mirroring required images and operator catalogs to a registry within the restricted network.

On a restricted network, deploying OpenShift Dev Spaces and running workspaces requires the following public resources:

- Operator catalog

- Container images

- Sample projects

To make these resources available, you can replace them with their copy in a registry accessible by the OpenShift cluster.

Prerequisites

- You have an OpenShift cluster with at least 64 GB of disk space.

- You have an OpenShift cluster ready to operate on a restricted network. See About disconnected installation mirroring and Using Operator Lifecycle Manager on restricted networks.

- You have an active oc session with administrative permissions to the OpenShift cluster. See Getting started with the OpenShift CLI.

- You have an active oc registry session to the registry.redhat.io Red Hat Ecosystem Catalog. See Red Hat Container Registry authentication.

- You have opm installed. See Installing the opm CLI.

- You have jq installed. See Downloading jq.

- You have podman installed. See Podman Installation Instructions.

- You have skopeo version 1.6 or higher installed. See Installing Skopeo.

- You have an active skopeo session with administrative access to the private Docker registry. See Authenticating to a registry and Mirroring images for a disconnected installation.

- You have dsc for OpenShift Dev Spaces version 3.27 installed. See Section 2.2, “Install the dsc management tool”.

Procedure

Download and execute the mirroring script to install a custom Operator catalog and mirror the related images.

    $ bash prepare-restricted-environment.sh \
      --devworkspace_operator_index registry.redhat.io/redhat/redhat-operator-index:v4.22 \
      --devworkspace_operator_version "v0.40.0" \
      --prod_operator_index "registry.redhat.io/redhat/redhat-operator-index:v4.22" \
      --prod_operator_package_name "devspaces" \
      --prod_operator_bundle_name "devspacesoperator" \
      --prod_operator_version "v3.27.0" \
      --my_registry "<my_registry>"

- --my_registry: The private Docker registry where the images will be mirrored.

Install OpenShift Dev Spaces with the configuration set in the che-operator-cr-patch.yaml file during the previous step:

    $ dsc server:deploy \
      --platform=openshift \
      --olm-channel stable \
      --catalog-source-name=devspaces-disconnected-install \
      --catalog-source-namespace=openshift-marketplace \
      --skip-devworkspace-operator \
      --che-operator-cr-patch-yaml=che-operator-cr-patch.yaml

- Allow incoming traffic from the OpenShift Dev Spaces namespace to all Pods in the user projects. See: Section 12.1, “Configure network policies”.

Verification

Verify that the OpenShift Dev Spaces instance is running:

$ dsc server:status

4.4. Set up an Ansible sample

Configure an Ansible sample for use in restricted OpenShift Dev Spaces environments.

Prerequisites

- You have Microsoft Visual Studio Code - Open Source IDE as the configured editor.

- You have a 64-bit x86 system.

Procedure

Mirror the following images:

    ghcr.io/ansible/ansible-devspaces@sha256:ce1ecc3b3c350eab2a9a417ce14a33f4b222a6aafd663b5cf997ccc8c601fe2c
    registry.access.redhat.com/ubi8/python-39@sha256:301fec66443f80c3cc507ccaf72319052db5a1dc56deb55c8f169011d4bbaacb

Configure the cluster proxy to allow access to the following domains:

    .ansible.com
    .ansible-galaxy-ng.s3.dualstack.us-east-1.amazonaws.com

Note: Support for the following IDE and CPU architectures is planned for a future release:

CPU architectures

- IBM Power (ppc64le)

- IBM Z (s390x)

4.5. Find the fully qualified domain name (FQDN)

Retrieve the fully qualified domain name (FQDN) of your organization’s instance of OpenShift Dev Spaces on the command line to access the OpenShift Dev Spaces dashboard URL.

Alternatively, you can find the FQDN for your organization’s OpenShift Dev Spaces instance in the Administrator view of the OpenShift web console: go to Operators → Installed Operators → Red Hat OpenShift Dev Spaces instance Specification → devspaces → Red Hat OpenShift Dev Spaces URL.

Prerequisites

- You have an active oc session with administrative permissions to the OpenShift cluster. See Getting started with the OpenShift CLI.

Procedure

Run the following command:

$ oc get checluster devspaces -n openshift-devspaces -o jsonpath='{.status.cheURL}'

Verification

- Open the returned URL in a web browser and verify that the OpenShift Dev Spaces dashboard loads.
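The command returns a URL rather than a bare host name. If you need only the FQDN, for example for DNS or certificate configuration, you can extract it from the URL. The following Python sketch assumes the returned value is a full URL that includes the scheme; the function name and the example URL are hypothetical.

```python
from urllib.parse import urlsplit


def fqdn_from_url(che_url: str) -> str:
    """Extract the host name from a URL such as the one stored in status.cheURL."""
    host = urlsplit(che_url.strip()).hostname
    if host is None:
        raise ValueError(f"no host name found in {che_url!r}")
    return host


# Hypothetical value as it might be returned by the oc command.
print(fqdn_from_url("https://devspaces.apps.example.com/dashboard/"))
```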

4.6. Permissions to install OpenShift Dev Spaces on OpenShift using CLI

A specific set of permissions is required to install OpenShift Dev Spaces on an OpenShift cluster using the dsc CLI tool.

The following YAML shows the minimal set of permissions required to install OpenShift Dev Spaces on an OpenShift cluster using dsc:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: devspaces-install-dsc
    rules:
    - apiGroups: ["org.eclipse.che"]
      resources: ["checlusters"]
      verbs: ["*"]
    - apiGroups: ["project.openshift.io"]
      resources: ["projects"]
      verbs: ["get", "list"]
    - apiGroups: [""]
      resources: ["namespaces"]
      verbs: ["get", "list", "create"]
    - apiGroups: [""]
      resources: ["pods", "configmaps"]
      verbs: ["get", "list"]
    - apiGroups: ["route.openshift.io"]
      resources: ["routes"]
      verbs: ["get", "list"]
    # OLM resources permissions
    - apiGroups: ["operators.coreos.com"]
      resources: ["catalogsources", "subscriptions"]
      verbs: ["create", "get", "list", "watch"]
    - apiGroups: ["operators.coreos.com"]
      resources: ["operatorgroups", "clusterserviceversions"]
      verbs: ["get", "list", "watch"]
    - apiGroups: ["operators.coreos.com"]
      resources: ["installplans"]
      verbs: ["patch", "get", "list", "watch"]
    - apiGroups: ["packages.operators.coreos.com"]
      resources: ["packagemanifests"]
      verbs: ["get", "list"]

4.7. Permissions to install OpenShift Dev Spaces on OpenShift using web console

A specific set of permissions is required to install OpenShift Dev Spaces on an OpenShift cluster using the web console.

The following YAML shows the minimal set of permissions required to install OpenShift Dev Spaces on an OpenShift cluster using the web console:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: devspaces-install-web-console
    rules:
    - apiGroups: ["org.eclipse.che"]
      resources: ["checlusters"]
      verbs: ["*"]
    - apiGroups: [""]
      resources: ["namespaces"]
      verbs: ["get", "list", "create"]
    - apiGroups: ["project.openshift.io"]
      resources: ["projects"]
      verbs: ["get", "list", "create"]
    # OLM resources permissions
    - apiGroups: ["operators.coreos.com"]
      resources: ["subscriptions"]
      verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
    - apiGroups: ["operators.coreos.com"]
      resources: ["operatorgroups"]
      verbs: ["get", "list", "watch"]
    - apiGroups: ["operators.coreos.com"]
      resources: ["clusterserviceversions", "catalogsources", "installplans"]
      verbs: ["get", "list", "watch", "delete"]
    - apiGroups: ["packages.operators.coreos.com"]
      resources: ["packagemanifests", "packagemanifests/icon"]
      verbs: ["get", "list", "watch"]
    # Workaround related to viewing operators in OperatorHub
    - apiGroups: ["operator.openshift.io"]
      resources: ["cloudcredentials"]
      verbs: ["get", "list", "watch"]
    - apiGroups: ["config.openshift.io"]
      resources: ["infrastructures", "authentications"]
      verbs: ["get", "list", "watch"]

Chapter 5. Configure the CheCluster Custom Resource

Configure your OpenShift Dev Spaces instance by editing the CheCluster Custom Resource (CR).

The CheCluster CR is the central configuration object for OpenShift Dev Spaces. You can set fields during installation with dsc flags or modify them at any time afterward with oc.

5.1. The CheCluster Custom Resource

A default deployment of OpenShift Dev Spaces consists of a CheCluster Custom Resource parameterized by the Red Hat OpenShift Dev Spaces Operator. Understand its structure to customize OpenShift Dev Spaces components for your environment.

The CheCluster Custom Resource is a Kubernetes object. You can configure it by editing the CheCluster Custom Resource YAML file. This file contains sections to configure each component: devWorkspace, cheServer, pluginRegistry, devfileRegistry, dashboard and imagePuller.

The Red Hat OpenShift Dev Spaces Operator translates the CheCluster Custom Resource into a config map usable by each component of the OpenShift Dev Spaces installation.

The OpenShift platform applies the configuration to each component, and creates the necessary Pods. When OpenShift detects changes in the configuration of a component, it restarts the Pods accordingly.

Example 5.1. Configuring the main properties of the OpenShift Dev Spaces server component

- Apply the CheCluster Custom Resource YAML file with suitable modifications in the cheServer component section.

- The Operator generates the che ConfigMap.

- OpenShift detects changes in the ConfigMap and triggers a restart of the OpenShift Dev Spaces Pod.

5.2. Use dsc to configure the CheCluster Custom Resource during installation

To deploy OpenShift Dev Spaces with a suitable configuration, edit the CheCluster Custom Resource YAML file during the installation of OpenShift Dev Spaces. Otherwise, the OpenShift Dev Spaces deployment uses the default configuration parameterized by the Operator.

Prerequisites

- You have an active oc session with administrative permissions to the OpenShift cluster. See Getting started with the CLI.

- You have the dsc management tool installed. See Section 2.2, “Install the dsc management tool”.

Procedure

Create a che-operator-cr-patch.yaml YAML file that contains the subset of the CheCluster Custom Resource to configure:

    spec:
      <component>:
        <property_to_configure>: <value>

Deploy OpenShift Dev Spaces and apply the changes described in the che-operator-cr-patch.yaml file:

    $ dsc server:deploy \
      --che-operator-cr-patch-yaml=che-operator-cr-patch.yaml \
      --platform <chosen_platform>

Verification

Verify the value of the configured property:

    $ oc get configmap che -o jsonpath='{.data.<configured_property>}' \
      -n openshift-devspaces

5.3. Use the CLI to configure the CheCluster Custom Resource

Edit the CheCluster Custom Resource YAML file to customize the behavior of a running OpenShift Dev Spaces instance for your environment.

Prerequisites

- You have an instance of OpenShift Dev Spaces on OpenShift.

- You have an active oc session with administrative permissions to the destination OpenShift cluster. See Getting started with the CLI.

Procedure

Edit the CheCluster Custom Resource on the cluster:

$ oc edit checluster/devspaces -n openshift-devspaces

- Save and close the file to apply the changes.

Verification

Verify the value of the configured property:

    $ oc get configmap che -o jsonpath='{.data.<configured_property>}' \
      -n openshift-devspaces

5.4. CheCluster Custom Resource fields reference

Customize the CheCluster Custom Resource by configuring its specification fields to control OpenShift Dev Spaces server, dashboard, gateway, and workspace components.

Example 5.2. A minimal CheCluster Custom Resource example.

    apiVersion: org.eclipse.che/v2
    kind: CheCluster
    metadata:
      name: devspaces
      namespace: openshift-devspaces
    spec:
      components: {}
      devEnvironments: {}
      networking: {}

Table 5.1. Development environment configuration options.

| Property | Description | Default |

|---|---|---|

| allowedSources | AllowedSources defines the allowed sources on which workspaces can be started. | |

| containerBuildConfiguration | Container build configuration. | |

| containerResourceCaps | ContainerResourceCaps defines the maximum resource requirements enforced for workspace containers. If a container specifies limits or requests that exceed these values, they will be capped at the maximum. Note: Caps only apply when resources are already specified on a container. For containers without resource specifications, use DefaultContainerResources instead. These resource caps do not apply to initContainers or the projectClone container. | |

| containerRunConfiguration | Container run configuration. | |

| defaultComponents | Default components applied to DevWorkspaces. These default components are meant to be used when a Devfile, that does not contain any components. | |

| defaultContainerResources | DefaultContainerResources defines the resource requirements (memory/cpu limit/request) used for container components that do not define limits or requests. | |

| defaultEditor |

The default editor to workspace create with. It could be a plugin ID or a URI. The plugin ID must have | |

| defaultNamespace | User’s default namespace. | { "autoProvision": true, "template": "<username>-che"} |

| defaultPlugins | Default plug-ins applied to DevWorkspaces. | |

| deploymentStrategy |

DeploymentStrategy defines the deployment strategy to use to replace existing workspace pods with new ones. The available deployment stragies are | |

| disableContainerBuildCapabilities |

Disables the container build capabilities. When set to | |

| disableContainerRunCapabilities |

Disables container run capabilities. Can be enabled on OpenShift version 4.20 or later. When set to | true |

| editorsDownloadUrls |

EditorsDownloadUrls provides a list of custom download URLs for JetBrains editors in a local-to-remote flow. It is particularly useful in disconnected or air-gapped environments, where editors cannot be downloaded from the public internet. Each entry contains an editor identifier in the | |

| gatewayContainer | GatewayContainer configuration. | |

| ignoredUnrecoverableEvents | IgnoredUnrecoverableEvents defines a list of Kubernetes event names that should be ignored when deciding to fail a workspace that is starting. This option should be used if a transient cluster issue is triggering false-positives (for example, if the cluster occasionally encounters FailedScheduling events). Events listed here will not trigger workspace failures. | [ "FailedScheduling"] |

| imagePullPolicy | ImagePullPolicy defines the imagePullPolicy used for containers in a DevWorkspace. | |

| maxNumberOfRunningWorkspacesPerCluster | The maximum number of concurrently running workspaces across the entire Kubernetes cluster. This applies to all users in the system. If the value is set to -1, it means there is no limit on the number of running workspaces. | |

| maxNumberOfRunningWorkspacesPerUser | The maximum number of running workspaces per user. The value, -1, allows users to run an unlimited number of workspaces. | |

| maxNumberOfWorkspacesPerUser | Total number of workspaces, both stopped and running, that a user can keep. The value, -1, allows users to keep an unlimited number of workspaces. | -1 |

| networking | Configuration settings related to the workspaces networking. | |

| nodeSelector | The node selector limits the nodes that can run the workspace pods. | |

| persistUserHome | PersistUserHome defines configuration options for persisting the user home directory in workspaces. | |

| podSchedulerName | Pod scheduler for the workspace pods. If not specified, the pod scheduler is set to the default scheduler on the cluster. | |

| projectCloneContainer | Project clone container configuration. | |

| runtimeClassName | RuntimeClassName specifies the spec.runtimeClassName for workspace pods. | |

| secondsOfInactivityBeforeIdling | Idle timeout for workspaces in seconds. This timeout is the duration after which a workspace will be idled if there is no activity. To disable workspace idling due to inactivity, set this value to -1. | 1800 |

| secondsOfRunBeforeIdling | Run timeout for workspaces in seconds. This timeout is the maximum duration a workspace runs. To disable workspace run timeout, set this value to -1. | -1 |

| security | Workspace security configuration. | |

| serviceAccount | ServiceAccount used by the DevWorkspace Operator when starting the workspaces. | |

| serviceAccountTokens | List of ServiceAccount tokens that will be mounted into workspace pods as projected volumes. | |

| startTimeoutSeconds | StartTimeoutSeconds determines the maximum duration (in seconds) that a workspace can take to start before it is automatically failed. If not specified, the default value of 300 seconds (5 minutes) is used. | 300 |

| storage | Workspaces persistent storage. | { "pvcStrategy": "per-user"} |

| tolerations | The pod tolerations of the workspace pods limit where the workspace pods can run. | |

| trustedCerts | Trusted certificate settings. | |

| user | User configuration. | |

| workspacesPodAnnotations | WorkspacesPodAnnotations defines additional annotations for workspace pods. | |
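
As an illustration of how several of the properties above fit together, the following is a minimal sketch of a `CheCluster` custom resource fragment. It assumes the properties sit under `spec.devEnvironments`; the resource name, namespace, and all numeric values are hypothetical and chosen only for the example.

```yaml
apiVersion: org.eclipse.che/v2
kind: CheCluster
metadata:
  name: devspaces                  # assumed resource name
  namespace: openshift-devspaces   # assumed namespace
spec:
  devEnvironments:
    # Idle a workspace after 15 minutes without activity (default: 1800).
    secondsOfInactivityBeforeIdling: 900
    # Stop a workspace after 8 hours of run time (default: -1, disabled).
    secondsOfRunBeforeIdling: 28800
    # Each user may run at most 2 workspaces at once and keep at most 10.
    maxNumberOfRunningWorkspacesPerUser: 2
    maxNumberOfWorkspacesPerUser: 10
    # Allow slow clusters up to 10 minutes for workspace startup (default: 300).
    startTimeoutSeconds: 600
    # Do not fail a starting workspace on transient scheduling events.
    ignoredUnrecoverableEvents:
      - FailedScheduling
```

Setting any of the limits or timeouts to `-1` disables that restriction, as described in the table.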

Table 5.2. allowedSources options.

| Property | Description | Default |

|---|---|---|

| urls | The list of approved URLs for starting Cloud Development Environments (CDEs). CDEs can only be initiated from these URLs. Wildcards | |

Table 5.3. defaultNamespace options.

| Property | Description | Default |

|---|---|---|

| autoProvision | Indicates whether a user namespace can be created automatically. If set to false, a cluster administrator must create the user namespace in advance. | true |

| template | If you do not create the user namespaces in advance, this field defines the Kubernetes namespace created when you start your first workspace. You can use | "<username>-che" |
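
The two `defaultNamespace` properties can be combined as in the following sketch. It assumes the properties sit under `spec.devEnvironments.defaultNamespace`; the template value is a hypothetical example that reuses the `<username>` placeholder shown in the default.

```yaml
spec:
  devEnvironments:
    defaultNamespace:
      # Let the Operator create the namespace on first workspace start.
      autoProvision: true
      # Hypothetical naming pattern; <username> is the placeholder from the default value.
      template: "<username>-dev"
```

With `autoProvision: false`, the namespace is not created on first workspace start, and a cluster administrator must create it beforehand.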

Table 5.4. defaultPlugins options.

| Property | Description | Default |

|---|---|---|

| editor | The editor ID to specify default plug-ins for. The plugin ID must have | |

| plugins | Default plug-in URIs for the specified editor. | |

Table 5.5. editorsDownloadUrls options.

| Property | Description | Default |

|---|---|---|

| editor | The editor ID must have | |

| url | ul |

Table 5.6. gatewayContainer options.

| Property | Description | Default |

|---|---|---|

| env | List of environment variables to set in the container. | |

| image | Container image. Omit it or leave it empty to use the default container image provided by the Operator. | |

| imagePullPolicy | Image pull policy. Default value is | |

| name | Container name. | |

| resources | Compute resources required by this container. | |

Table 5.7. networking options.

| Property | Description | Default |

|---|---|---|

| externalTLSConfig | External TLS configuration. | |

Table 5.8. externalTLSConfig options.

| Property | Description | Default |

|---|---|---|

| annotations | Annotations to be applied to ingress/route objects when external TLS is enabled. | |

| enabled | Enabled determines whether external TLS configuration is used. If set to true, the operator will not set TLS config for ingress/route objects. Instead, it ensures that any custom TLS configuration will not be reverted on synchronization. | |

| labels | Labels to be applied to ingress/route objects when external TLS is enabled. | |
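
A minimal sketch of enabling external TLS management follows. It assumes the path `spec.devEnvironments.networking.externalTLSConfig`; the annotation and label keys are hypothetical placeholders, not keys that any particular certificate tool requires.

```yaml
spec:
  devEnvironments:
    networking:
      externalTLSConfig:
        # The Operator stops setting TLS config on ingress/route objects
        # and does not revert custom TLS configuration on synchronization.
        enabled: true
        annotations:
          example.com/certificate-issuer: "internal-ca"   # hypothetical annotation
        labels:
          example.com/tls-managed-by: "external"          # hypothetical label
```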

Table 5.9. persistUserHome options.

| Property | Description | Default |

|---|---|---|

| disableInitContainer | Determines whether the init container that initializes the persistent home directory should be disabled. When the | |

| enabled | Determines whether the user home directory in workspaces should persist between workspace shutdown and startup. Must be used with the 'per-user' or 'per-workspace' PVC strategy to take effect. Disabled by default. | |
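
Because `enabled` only takes effect with the 'per-user' or 'per-workspace' PVC strategy, the two settings are typically configured together. The following is a minimal sketch, assuming both properties sit under `spec.devEnvironments`:

```yaml
spec:
  devEnvironments:
    persistUserHome:
      # Keep the user home directory across workspace restarts.
      enabled: true
    storage:
      # Home persistence requires a persistent PVC strategy.
      pvcStrategy: per-user
```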

Table 5.10. projectCloneContainer options.

| Property | Description | Default |

|---|---|---|

| env | List of environment variables to set in the container. | |

| image | Container image. Omit it or leave it empty to use the default container image provided by the Operator. | |

| imagePullPolicy | Image pull policy. Default value is | |

| name | Container name. | |

| resources | Compute resources required by this container. | |

Table 5.11. security options.

| Property | Description | Default |

|---|---|---|

| containerSecurityContext | Defines the SecurityContext applied to all workspace-related containers. When set, the specified values are merged with the default SecurityContext configuration. This setting takes effect only if both | |

| podSecurityContext | PodSecurityContext used by all workspace-related pods. If set, defined values are merged into the default PodSecurityContext configuration. | |

Table 5.12. storage options.

| Property | Description | Default |

|---|---|---|

| perUserStrategyPvcConfig | PVC settings when using the | |

| perWorkspaceStrategyPvcConfig | PVC settings when using the | |

| pvcStrategy | Persistent volume claim strategy for the OpenShift Dev Spaces server. The supported strategies are: | "per-user" |

Table 5.13. per-user PVC strategy options.

| Property | Description | Default |

|---|---|---|

| claimSize | Persistent Volume Claim size. To update the claim size, the storage class that provisions it must support resizing. | |

| storageAccessMode | StorageAccessMode defines the desired access modes for the volume. It sets the PersistentVolume access mode type to RWO or RWX when using the per-user strategy, which allows users to reuse a volume across multiple workspaces. Defaults to ReadWriteOnce if not specified. | |

| storageClass | Storage class for the Persistent Volume Claim. When omitted or left blank, a default storage class is used. | |

Table 5.14. per-workspace PVC strategy options.

| Property | Description | Default |

|---|---|---|

| claimSize | Persistent Volume Claim size. To update the claim size, the storage class that provisions it must support resizing. | |

| storageAccessMode | StorageAccessMode defines the desired access modes for the volume. It sets the PersistentVolume access mode type to RWO or RWX when using the per-user strategy, which allows users to reuse a volume across multiple workspaces. Defaults to ReadWriteOnce if not specified. | |

| storageClass | Storage class for the Persistent Volume Claim. When omitted or left blank, a default storage class is used. | |
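
The storage tables above can be read together as in the following sketch, which selects the per-workspace strategy and sets its PVC options. It assumes the path `spec.devEnvironments.storage`; the claim size and storage class name are hypothetical values.

```yaml
spec:
  devEnvironments:
    storage:
      pvcStrategy: per-workspace
      perWorkspaceStrategyPvcConfig:
        # Growing this later requires a storage class that supports resizing.
        claimSize: 5Gi
        # Hypothetical storage class; omit to use the cluster default.
        storageClass: example-storage-class
```

For the per-user strategy, the same options go under `perUserStrategyPvcConfig` instead.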

Table 5.15. trustedCerts options.

| Property | Description | Default |

|---|---|---|

| disableWorkspaceCaBundleMount | By default, the Operator creates and mounts the 'ca-certs-merged' ConfigMap containing the CA certificate bundle in users' workspaces at two locations: '/public-certs' and '/etc/pki/ca-trust/extracted/pem'. The '/etc/pki/ca-trust/extracted/pem' directory is where the system stores extracted CA certificates for trusted certificate authorities on Red Hat-based distributions (for example, CentOS, Fedora). This option disables mounting the CA bundle to the '/etc/pki/ca-trust/extracted/pem' directory while still mounting it to '/public-certs'. | |

| gitTrustedCertsConfigMapName | The ConfigMap contains certificates to propagate to the OpenShift Dev Spaces components and to provide a particular configuration for Git. See the following page: https://www.eclipse.org/che/docs/stable/administration-guide/deploying-che-with-support-for-git-repositories-with-self-signed-certificates/ The ConfigMap must have a | |
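
A minimal sketch of the `trustedCerts` block follows. It assumes the path `spec.devEnvironments.trustedCerts` and that `disableWorkspaceCaBundleMount` is a boolean; the ConfigMap name is hypothetical.

```yaml
spec:
  devEnvironments:
    trustedCerts:
      # Keep the bundle at /public-certs but skip the mount at
      # /etc/pki/ca-trust/extracted/pem.
      disableWorkspaceCaBundleMount: true
      # Hypothetical ConfigMap holding the Git server certificates.
      gitTrustedCertsConfigMapName: example-git-trusted-certs
```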

Table 5.16. user options.

| Property | Description | Default |

|---|---|---|

| clusterRoles | Additional ClusterRoles assigned to the user. The role must have | |

Table 5.17. containerBuildConfiguration options.

| Property | Description | Default |

|---|---|---|

| openShiftSecurityContextConstraint | OpenShift security context constraint to build containers. | "container-build" |

Table 5.18. containerRunConfiguration options.

| Property | Description | Default |

|---|---|---|

| containerSecurityContext | SecurityContext applied to all workspace containers when run capabilities are enabled. The default | { "allowPrivilegeEscalation": true, "capabilities": { "add": [ "SETGID", "SETUID" ] }, "procMount": "Unmasked"} |

| openShiftSecurityContextConstraint | Specifies the OpenShift SecurityContextConstraint used to run containers. | "container-run" |

| workspacesPodAnnotations | Extra annotations applied to all workspace pods, in addition to those defined in | { "io.kubernetes.cri-o.Devices": "/dev/fuse,/dev/net/tun"} |

Table 5.19. OpenShift Dev Spaces components configuration.

| Property | Description | Default |

|---|---|---|

| cheServer | General configuration settings related to the OpenShift Dev Spaces server. | { "debug": false, "logLevel": "INFO"} |

| dashboard | Configuration settings related to the dashboard used by the OpenShift Dev Spaces installation. | |

| devWorkspace | DevWorkspace Operator configuration. | |

| devfileRegistry | Configuration settings related to the devfile registry used by the OpenShift Dev Spaces installation. | |

| imagePuller | Kubernetes Image Puller configuration. | |

| metrics | OpenShift Dev Spaces server metrics configuration. | { "enable": true} |

| pluginRegistry | Configuration settings related to the plug-in registry used by the OpenShift Dev Spaces installation. | |

Table 5.20. General configuration settings related to the OpenShift Dev Spaces server component.

| Property | Description | Default |

|---|---|---|

| clusterRoles | Additional ClusterRoles assigned to OpenShift Dev Spaces ServiceAccount. Each role must have a | |

| debug | Enables the debug mode for OpenShift Dev Spaces server. | false |

| deployment | Deployment override options. | |

| extraProperties | A map of additional environment variables applied in the generated | |

| logLevel | The log level for the OpenShift Dev Spaces server: | "INFO" |

| proxy | Proxy server settings for Kubernetes cluster. No additional configuration is required for OpenShift cluster. By specifying these settings for the OpenShift cluster, you override the OpenShift proxy configuration. | |
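
For troubleshooting, the `debug` and `logLevel` properties can be set together, as in this minimal sketch. It assumes the server settings sit under `spec.components.cheServer`, and that `DEBUG` is an accepted log level alongside the default `INFO`.

```yaml
spec:
  components:
    cheServer:
      # Enable debug mode for the OpenShift Dev Spaces server (default: false).
      debug: true
      # Assumed valid value; the default is "INFO".
      logLevel: DEBUG
```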

Table 5.21. proxy options.

| Property | Description | Default |

|---|---|---|

| credentialsSecretName | The secret name that contains | |

| nonProxyHosts | A list of hosts that can be reached directly, bypassing the proxy. To specify a wildcard domain, use the following form | |

| port | Proxy server port. | |

| url | URL (protocol+hostname) of the proxy server. Use only when a proxy configuration is required. The Operator respects OpenShift cluster-wide proxy configuration, defining | |
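
The proxy options above could be combined as in the following sketch. It assumes the path `spec.components.cheServer.proxy` and that `port` is given as a string; the host names are hypothetical. On OpenShift, these settings override the cluster-wide proxy configuration, so set them only when that override is intended.

```yaml
spec:
  components:
    cheServer:
      proxy:
        # Protocol and host name only; the port is set separately.
        url: http://proxy.example.com     # hypothetical proxy host
        port: "3128"                      # assumed to be a string value
        # Hosts reached directly, bypassing the proxy.
        nonProxyHosts:
          - localhost
          - registry.internal.example.com # hypothetical internal host
```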

Table 5.22. Configuration settings related to the Plug-in registry component used by the OpenShift Dev Spaces installation.

| Property | Description | Default |

|---|---|---|

| deployment | Deployment override options. | |

| disableInternalRegistry | Disables internal plug-in registry. | |

| externalPluginRegistries | External plugin registries. | |

| openVSXURL | Open VSX registry URL. If omitted, an embedded instance is used. | |
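
As a sketch of pointing workspaces at an external Open VSX registry instead of the embedded instance, assuming the path `spec.components.pluginRegistry`:

```yaml
spec:
  components:
    pluginRegistry:
      # Public Open VSX registry; omit this field to use the embedded instance.
      openVSXURL: https://open-vsx.org
```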

Table 5.23. externalPluginRegistries options.

| Property | Description | Default |

|---|---|---|

| url | Public URL of the plug-in registry. | |

Table 5.24. Configuration settings related to the Devfile registry component used by the OpenShift Dev Spaces installation.

| Property | Description | Default |

|---|---|---|

| deployment | Deprecated deployment override options. | |

| disableInternalRegistry | Disables internal devfile registry. | |