Serving

Getting started with Knative Serving and configuring services


Chapter 1. Getting started with Knative Serving

1.1. Creating serverless applications

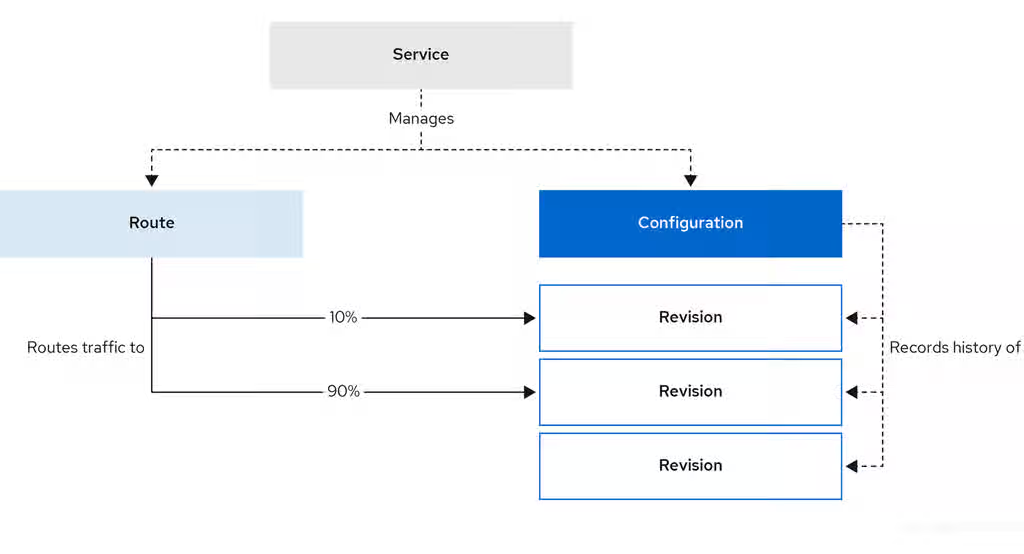

Serverless applications are created and deployed as Kubernetes services, defined by a route and a configuration, and contained in a YAML file. To deploy a serverless application by using OpenShift Serverless, you must create a Knative Service object.

Example Knative Service object YAML file

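apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: showcase # The name of the application
  namespace: default # The namespace the application uses
spec:
  template:
    spec:
      containers:
        - image: quay.io/openshift-knative/showcase # The image of the application
          env:
            - name: GREET # An environment variable that the sample application prints
              value: Ciao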
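If you generate manifests from a script instead of writing YAML by hand, you can build the same object as a data structure. The following Python sketch is illustrative only: knative_service is a hypothetical helper, not part of OpenShift Serverless, and the sketch relies on the fact that oc apply -f accepts JSON manifests as well as YAML.

```python
import json
from typing import Dict, Optional


def knative_service(name: str, namespace: str, image: str,
                    env: Optional[Dict[str, str]] = None) -> dict:
    """Build a serving.knative.dev/v1 Service manifest as a plain dictionary."""
    container: dict = {"image": image}
    if env:
        # Kubernetes expects environment variables as a list of name/value pairs.
        container["env"] = [{"name": key, "value": value} for key, value in env.items()]
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"template": {"spec": {"containers": [container]}}},
    }


if __name__ == "__main__":
    manifest = knative_service("showcase", "default",
                               "quay.io/openshift-knative/showcase", {"GREET": "Ciao"})
    # Print JSON that can be saved to a file and passed to `oc apply -f`.
    print(json.dumps(manifest, indent=2))
```

Saving the printed output to a file such as showcase.json and running oc apply -f showcase.json should create the same service as the YAML example.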

You can create a serverless application by using one of the following methods:

- Create a Knative service from the OpenShift Container Platform web console. For more information, see Creating applications.
- Create a Knative service by using the Knative (kn) CLI.
- Create and apply a Knative Service object as a YAML file, by using the oc CLI.

1.1.1. Creating serverless applications by using the Knative CLI

Using the Knative (kn) CLI to create serverless applications provides a more streamlined and intuitive user interface over modifying YAML files directly. You can use the kn service create command to create a basic serverless application.

Prerequisites

- You have installed OpenShift Serverless Operator and Knative Serving on your cluster.

- You have installed the Knative (kn) CLI.
- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

Procedure

Create a Knative service:

$ kn service create <service_name> --image <image> --tag <tag_value>

Where:

- --image is the URI of the image for the application.
- --tag is an optional flag that you can use to add a tag to the initial revision that Knative creates with the service.

Example command

$ kn service create showcase \
    --image quay.io/openshift-knative/showcase

You get an output similar to the following example:

Creating service 'showcase' in namespace 'default':

  0.271s The Route is still working to reflect the latest desired specification.
  0.580s Configuration "showcase" is waiting for a Revision to become ready.
  3.857s ...
  3.861s Ingress has not yet been reconciled.
  4.270s Ready to serve.

Service 'showcase' created with latest revision 'showcase-00001' and URL:
http://showcase-default.apps-crc.testing

1.1.2. Creating serverless applications using YAML

Use YAML files to create Knative resources with a declarative API. Define a Knative Service object in a YAML file and apply it by using oc apply to deploy your serverless application.

When you create the service and deploy the application, Knative creates an immutable revision for that version of the application. Knative also configures the route, ingress, service, and load balancer for the application and scales pods based on traffic.

Prerequisites

- You have installed OpenShift Serverless Operator and Knative Serving on your cluster.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

- You have installed the OpenShift CLI (oc).

Procedure

Create a YAML file containing the following sample code:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: showcase
  namespace: default
spec:
  template:
    spec:
      containers:
        - image: quay.io/openshift-knative/showcase
          env:
            - name: GREET
              value: Bonjour

Navigate to the directory that has the YAML file and run the following command to deploy the application:

$ oc apply -f <filename>

1.1.3. Creating a service using offline mode

You can run kn service commands in offline mode so the command does not change the cluster and instead creates a service descriptor file on your local machine. After you create the descriptor file, you can change it before propagating changes to the cluster.

The offline mode of the Knative CLI is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

Prerequisites

- You have installed OpenShift Serverless Operator and Knative Serving on your cluster.

- You have installed the Knative (kn) CLI.

Procedure

In offline mode, create a local Knative service descriptor file:

$ kn service create showcase \
    --image quay.io/openshift-knative/showcase \
    --target ./ \
    --namespace test

You get an output similar to the following example:

Service 'showcase' created in namespace 'test'.

- The --target ./ flag enables offline mode and specifies ./ as the directory for storing the new directory tree.

  If you do not specify an existing directory but use a filename, such as --target my-service.yaml, the command does not create a directory tree. Instead, the command creates the service descriptor file my-service.yaml in the current directory.

  The filename can have the .yaml, .yml, or .json extension. Choosing .json creates the service descriptor file in the JSON format.

- The --namespace test option places the new service in the test namespace.

  If you do not use --namespace and you are logged in to an OpenShift Container Platform cluster, the command creates the descriptor file in the current namespace. If you are not logged in to a cluster, the command creates the descriptor file in the default namespace.

Examine the created directory structure:

$ tree ./

You get an output similar to the following example:

./
└── test
    └── ksvc
        └── showcase.yaml

2 directories, 1 file

- The ./ directory specified with --target now has a test/ directory named after the specified namespace.
- The test/ directory has the ksvc directory, named after the resource type.
- The ksvc directory has the descriptor file showcase.yaml, named according to the specified service name.
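The path rules for offline mode can be summarized in a short Python sketch that predicts where the descriptor file ends up. descriptor_path is a hypothetical helper, not part of kn, and it simplifies the real behavior: it treats any --target value ending in .yaml, .yml, or .json as a file name instead of checking whether a directory with that name already exists.

```python
from pathlib import Path
from typing import Optional

# File extensions that offline mode accepts for a --target file name.
DESCRIPTOR_EXTENSIONS = {".yaml", ".yml", ".json"}


def descriptor_path(target: str, service: str,
                    namespace: Optional[str] = None,
                    current_namespace: Optional[str] = None) -> Path:
    """Predict where `kn service create ... --target` stores the service descriptor.

    namespace: value of --namespace, if you pass one.
    current_namespace: namespace of your cluster login, if you are logged in.
    """
    target_path = Path(target)
    if target_path.suffix in DESCRIPTOR_EXTENSIONS:
        # A file name: kn writes this single file and creates no directory tree.
        return target_path
    # A directory: <target>/<namespace>/ksvc/<service>.yaml, where the namespace
    # falls back to the current namespace and then to "default".
    ns = namespace or current_namespace or "default"
    return target_path / ns / "ksvc" / f"{service}.yaml"
```

For the example in this procedure, descriptor_path("./", "showcase", namespace="test") corresponds to the test/ksvc/showcase.yaml file shown in the tree output.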

Examine the generated service descriptor file:

$ cat test/ksvc/showcase.yaml

You get an output similar to the following example:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  creationTimestamp: null
  name: showcase
  namespace: test
spec:
  template:
    metadata:
      annotations:
        client.knative.dev/user-image: quay.io/openshift-knative/showcase
      creationTimestamp: null
    spec:
      containers:
        - image: quay.io/openshift-knative/showcase
          name: ""
          resources: {}
status: {}

List information about the new service:

$ kn service describe showcase --target ./ --namespace test

You get an output similar to the following example:

Name:       showcase
Namespace:  test
Age:
URL:

Revisions:

Conditions:
  OK TYPE AGE REASON

- The --target ./ option specifies the root directory for the directory structure containing namespace subdirectories.

  You can also directly specify a YAML or JSON filename with the --target option. The accepted file extensions are .yaml, .yml, and .json.

- The --namespace option specifies the namespace, which tells kn which subdirectory has the necessary service descriptor file.

  If you do not use --namespace and you are logged in to an OpenShift Container Platform cluster, kn searches for the service in the subdirectory that matches the current namespace. Otherwise, kn searches in the default/ subdirectory.

Use the service descriptor file to create the service on the cluster:

$ kn service create -f test/ksvc/showcase.yaml

You get an output similar to the following example:

Creating service 'showcase' in namespace 'test':

  0.058s The Route is still working to reflect the latest desired specification.
  0.098s ...
  0.168s Configuration "showcase" is waiting for a Revision to become ready.
 23.377s ...
 23.419s Ingress has not yet been reconciled.
 23.534s Waiting for load balancer to be ready
 23.723s Ready to serve.

Service 'showcase' created to latest revision 'showcase-00001' is available at URL:
http://showcase-test.apps.example.com

1.1.4. Verifying your serverless application deployment

To verify that your serverless application deployed successfully, get the application URL that Knative created. Then send a request to that URL and observe the output. OpenShift Serverless supports both HTTP and HTTPS URLs. However, the oc get ksvc command always prints URLs in the http:// format.

Prerequisites

- You have installed the OpenShift Serverless Operator and Knative Serving on your cluster.
- You have installed the OpenShift CLI (oc).
- You have created a Knative service.

Procedure

Find the application URL:

$ oc get ksvc <service_name>

Example output

NAME       URL                                   LATESTCREATED    LATESTREADY      READY   REASON
showcase   http://showcase-default.example.com   showcase-00001   showcase-00001   True

Make a request to your cluster and observe the output.

Example HTTP request (using the HTTPie tool)

$ http showcase-default.example.com

Example HTTPS request

$ https showcase-default.example.com

Example output

HTTP/1.1 200 OK
Content-Type: application/json
Server: Quarkus/2.13.7.Final-redhat-00003 Java/17.0.7
X-Config: {"sink":"http://localhost:31111","greet":"Ciao","delay":0}
X-Version: v0.7.0-4-g23d460f
content-length: 49

{
    "artifact": "knative-showcase",
    "greeting": "Ciao"
}

Optional. If you do not have the HTTPie tool installed on your system, you can likely use the curl tool instead:

Example HTTP request

$ curl http://showcase-default.example.com

Example output

{"artifact":"knative-showcase","greeting":"Ciao"}

Optional. If you receive an error relating to a self-signed certificate in the certificate chain, you can add the --verify=no flag to the HTTPie command to ignore the error:

$ https --verify=no showcase-default.example.com

Example output

HTTP/1.1 200 OK
Content-Type: application/json
Server: Quarkus/2.13.7.Final-redhat-00003 Java/17.0.7
X-Config: {"sink":"http://localhost:31111","greet":"Ciao","delay":0}
X-Version: v0.7.0-4-g23d460f
content-length: 49

{
    "artifact": "knative-showcase",
    "greeting": "Ciao"
}

Important: You must not use self-signed certificates in a production deployment. This method is only for testing purposes.

Optional. If your OpenShift Container Platform cluster uses a certificate signed by a certificate authority (CA) that your system does not yet trust, specify the certificate when you run the curl command. Pass the certificate path to the curl command by using the --cacert flag:

$ curl https://showcase-default.example.com --cacert <file>

Example output

{"artifact":"knative-showcase","greeting":"Ciao"}
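If you prefer to script this check, the following Python sketch uses only the standard library (urllib, ssl, json) to request the application URL and decode the JSON greeting. The function names are hypothetical, and the cafile parameter is an assumed stand-in for the curl --cacert option: when set, only that CA bundle is trusted.

```python
import json
import ssl
import urllib.request
from typing import Optional


def ssl_context_for(url: str, cafile: Optional[str] = None) -> Optional[ssl.SSLContext]:
    """Return a verifying TLS context for https:// URLs, or None for http://."""
    if not url.startswith("https://"):
        return None
    # With cafile set, only that CA bundle is trusted (similar to curl --cacert);
    # without it, the system's default CA certificates are used.
    return ssl.create_default_context(cafile=cafile)


def decode_body(raw: bytes) -> dict:
    """Decode the JSON document that the showcase application returns."""
    return json.loads(raw.decode("utf-8"))


def fetch_greeting(url: str, cafile: Optional[str] = None, timeout: float = 10.0) -> dict:
    """GET the Knative service URL and return its JSON body as a dictionary."""
    context = ssl_context_for(url, cafile)
    # urlopen raises urllib.error.HTTPError for 4xx and 5xx responses.
    with urllib.request.urlopen(url, timeout=timeout, context=context) as response:
        return decode_body(response.read())
```

For the sample application, fetch_greeting("http://showcase-default.example.com") would be expected to return a dictionary similar to the example output, and passing cafile="<file>" with an https:// URL mirrors the curl --cacert example.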


Chapter 2. Scalability and performance of OpenShift Serverless Serving

OpenShift Serverless consists of several different components that have different resource requirements and scaling behaviors. These components are horizontally and vertically scalable, but their resource requirements and configuration depend heavily on the actual use case.

- Control-plane components: These components are responsible for observing and reacting to custom resources and continuously reconfiguring the system, for example, the controller pods.

- Data-plane components: These components are directly involved in handling requests and responses, for example, the Knative Serving activator component.

The following metrics and findings were recorded using the following test setup:

- A cluster running OpenShift Container Platform 4.13

- The cluster running 4 compute nodes in AWS with a machine type of m6.xlarge

- OpenShift Serverless 1.30

2.1. Overhead of OpenShift Serverless Serving

As components of OpenShift Serverless Serving are part of the data-plane, requests from clients are routed through:

- The ingress-gateway (Kourier or Service Mesh)

- The activator component

- The queue-proxy sidecar container in each Knative Service

These components introduce an additional hop in networking and perform additional tasks, for example, adding observability and request queuing. The following are the measured latency overheads:

- Each additional network hop adds 0.5 ms to 1 ms of latency to a request. The activator component is not always part of the data plane: whether requests pass through it depends on the current load of the Knative Service and on whether the Knative Service was scaled to zero before the request.

- Depending on the payload size, each of these components consumes up to 1 vCPU to handle 2500 requests per second.
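As a rough, back-of-the-envelope sketch, the measured figures can be turned into estimates. The Python functions below are illustrative only and rest on two assumptions that the measurements do not guarantee: each listed component counts as one network hop, and CPU usage grows linearly with the request rate.

```python
from typing import Tuple

# Figures measured in the test setup described above.
HOP_LATENCY_MS = (0.5, 1.0)  # latency added by each additional network hop
REQUESTS_PER_VCPU = 2500     # up to 1 vCPU per component for 2500 requests/s


def added_latency_ms(activator_in_path: bool) -> Tuple[float, float]:
    """Return a (low, high) estimate of the latency the Serving data plane adds."""
    # Assumption: the ingress gateway and the queue-proxy are always hops;
    # the activator is a hop only when it is part of the request path.
    hops = 3 if activator_in_path else 2
    return hops * HOP_LATENCY_MS[0], hops * HOP_LATENCY_MS[1]


def cpu_upper_bound_vcpu(requests_per_second: float) -> float:
    """Estimate the upper bound of vCPU that one data-plane component may consume."""
    # Assumption: CPU usage grows linearly with the request rate.
    return requests_per_second / REQUESTS_PER_VCPU
```

Under these assumptions, a request that passes through the activator gains roughly 1.5 ms to 3 ms, and a component handling 5000 requests per second might need up to 2 vCPU.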

2.2. Known limitations of OpenShift Serverless Serving

The maximum number of Knative Services that can be created is 3,000. This corresponds to the OpenShift Container Platform Kubernetes services limit of 10,000, since 1 Knative Service creates 3 Kubernetes services.
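The arithmetic behind this limit is 10,000 / 3, or roughly 3,333, so the documented maximum of 3,000 presumably leaves some headroom for other Kubernetes services. The following hypothetical Python helper makes the calculation explicit and also subtracts Kubernetes services that already exist on the cluster; treating the gap as headroom is an assumption.

```python
K8S_SERVICE_LIMIT = 10_000     # OpenShift Container Platform Kubernetes services limit
K8S_SERVICES_PER_KSVC = 3      # Kubernetes services created per Knative Service
DOCUMENTED_KSVC_LIMIT = 3_000  # documented Knative Service maximum


def max_knative_services(existing_k8s_services: int = 0) -> int:
    """Upper bound on Knative Services, given Kubernetes services already in use."""
    by_service_limit = (K8S_SERVICE_LIMIT - existing_k8s_services) // K8S_SERVICES_PER_KSVC
    # Never report more than the documented maximum, and never a negative number.
    return max(0, min(DOCUMENTED_KSVC_LIMIT, by_service_limit))
```

For example, with 1,500 other Kubernetes services on the cluster, the helper returns 2,833.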

2.3. Scaling and performance of OpenShift Serverless Serving

OpenShift Serverless Serving has to be scaled and configured based on the following parameters:

- Number of Knative Services

- Number of Revisions

- Number of concurrent requests in the system

- Size of payloads of the requests

- The startup-latency and response latency of the Knative Service added by the user’s web application

- Number of changes of the KnativeService custom resource (CR) over time

2.3.1. KnativeServing default configuration

By default, OpenShift Serverless Serving is configured to run all components with high availability and medium-sized CPU and memory requests and limits. This means that the high-availability field in the KnativeServing CR is automatically set to a value of 2, and all system components are scaled to two replicas. This configuration is suitable for medium workload scenarios and has been tested with:

- 170 Knative Services

- 1-2 Revisions per Knative Service

- 89 test scenarios mainly focused on testing the control plane, including:

  - 48 re-creating scenarios, in which Knative Services are deleted and re-created

  - 41 stable scenarios, in which requests are slowly but continuously sent to the system

During these test cases, the system components effectively consumed:

| Component | Measured Resources |
|---|---|
| Operator in project | 1 GB Memory, 0.2 Cores of CPU |
| Serving components in project | 5 GB Memory, 2.5 Cores of CPU |

2.3.2. Minimal requirements of OpenShift Serverless Serving

While the default setup is suitable for medium-sized workloads, it might be over-sized for smaller setups or under-sized for high-workload scenarios. To configure OpenShift Serverless Serving for a minimal workload scenario, you need to know the idle consumption of the system components.

2.3.2.1. Idle consumption

The idle consumption is dependent on the number of Knative Services. The following memory usage has been measured for the components in the knative-serving and knative-serving-ingress OpenShift Container Platform projects:

| Component | 0 Services | 100 Services | 500 Services | 1000 Services |
|---|---|---|---|---|
| | 55Mi | 86Mi | 300Mi | 450Mi |
| | 52Mi | 102Mi | 225Mi | 350Mi |
| | 100Mi | 135Mi | 310Mi | 500Mi |
| | 60Mi | 60Mi | 60Mi | 60Mi |
| | 20Mi | 60Mi | 190Mi | 330Mi |
| | 90Mi | 170Mi | 340Mi | 430Mi |

Either 3scale-kourier-gateway and net-kourier-controller components or istio-ingressgateway and net-istio-controller components are installed.

The memory consumption of net-istio is based on the total number of pods within the mesh.

2.3.3. Configuring Serving for minimal workloads

You can configure Knative Serving with reduced resource requirements for minimal workload scenarios to optimize resource consumption. This configuration adjusts replicas, CPU, and memory settings for system components such as activator, controller, and webhook.

Procedure

You can configure Knative Serving for minimal workloads by using the KnativeServing custom resource (CR):

A minimal workload configuration in KnativeServing CR

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
  namespace: knative-serving
spec:
  high-availability:
    replicas: 1 # (1)
  workloads:
    - name: activator
      replicas: 2 # (2)
      resources:
        - container: activator
          requests:
            cpu: 250m # (3)
            memory: 60Mi # (4)
          limits:
            cpu: 1000m
            memory: 600Mi
    - name: controller
      replicas: 1 # (5)
      resources:
        - container: controller
          requests:
            cpu: 10m
            memory: 100Mi
          limits: # (6)
            cpu: 200m
            memory: 300Mi
    - name: webhook
      replicas: 2
      resources:
        - container: webhook
          requests:
            cpu: 100m # (7)
            memory: 60Mi
          limits:
            cpu: 200m
            memory: 200Mi
  podDisruptionBudgets: # (8)
    - name: activator-pdb
      minAvailable: 1
    - name: webhook-pdb
      minAvailable: 1

(1) Setting this to 1 scales all system components to one replica.
(2) Always scale the activator to at least 2 instances to avoid downtime.
(3) Set the activator CPU request to at least 250m, because the HorizontalPodAutoscaler uses this value to scale the activator up or down.
(4) Adjust memory requests to the idle values from the earlier table. Also adjust memory limits according to your expected load (this might need custom testing to find the best values).
(5) One webhook and one controller are enough for a minimal-workload scenario.
(6) These limits are enough for a minimal-workload scenario, but they also might need adjustments depending on your concrete workload.
(7) Set the webhook CPU request to at least 100m, because the HorizontalPodAutoscaler uses this value to scale the webhook up or down.
(8) Adjust the PodDisruptionBudgets to a value lower than replicas, to avoid problems during node maintenance.
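Before applying a configuration like this, you might check the PodDisruptionBudget rule from callout 8 in a script. The Python sketch below is hypothetical: it assumes that each budget is named after its workload with a -pdb suffix, as in this example, and it only compares minAvailable against the replicas value you configured.

```python
from typing import Dict, List


def check_pdbs(workload_replicas: Dict[str, int], pdbs: List[dict]) -> List[str]:
    """List PodDisruptionBudgets whose minAvailable is not lower than the replicas."""
    problems = []
    for pdb in pdbs:
        name = pdb["name"]
        # Assumption: PDB names follow the "<workload>-pdb" pattern used in this example.
        workload = name[: -len("-pdb")] if name.endswith("-pdb") else name
        replicas = workload_replicas.get(workload)
        if replicas is None:
            problems.append(f"{name}: no workload named {workload!r}")
        elif pdb["minAvailable"] >= replicas:
            problems.append(
                f"{name}: minAvailable {pdb['minAvailable']} is not lower than replicas {replicas}"
            )
    return problems


# Values copied from the minimal workload configuration above.
MINIMAL_REPLICAS = {"activator": 2, "controller": 1, "webhook": 2}
MINIMAL_PDBS = [
    {"name": "activator-pdb", "minAvailable": 1},
    {"name": "webhook-pdb", "minAvailable": 1},
]
```

check_pdbs(MINIMAL_REPLICAS, MINIMAL_PDBS) returns an empty list; lowering the webhook replicas to 1 would flag webhook-pdb.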

2.3.4. Configuring Serving for high workloads

You can configure Knative Serving for high workloads by using the KnativeServing custom resource (CR). The following findings are relevant to configuring Knative Serving for a high workload:

These findings have been tested with requests with a payload size of 0 to 32 kB. The Knative Service backends used in those tests had a startup latency between 0 and 10 seconds and response times between 0 and 5 seconds.

- All data-plane components mostly increase their CPU usage in scenarios with more requests and larger payloads, so you must test and potentially increase their CPU requests and limits.

- The activator component might require additional memory when it buffers more or larger request payloads. In such cases, increase the memory requests and limits.

- One activator pod can handle about 2500 requests per second before its latency starts to increase and, at some point, requests fail with errors.

- One 3scale-kourier-gateway or istio-ingressgateway pod can also handle about 2500 requests per second before its latency starts to increase and, at some point, requests fail with errors.

- Each of the data-plane components consumes up to 1 vCPU to handle 2500 requests per second. Note that this depends heavily on the payload size and the response times of the Knative Service backend.
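To turn these findings into a first estimate, you can divide your expected peak request rate by the approximate per-pod capacity. The Python sketch below is a hypothetical sizing helper, not a supported formula: the 2500 requests per second figure comes from the tests described above, and real capacity depends on your payload sizes and backend response times, so treat the result only as a starting point for your own testing.

```python
import math

# Approximate per-pod capacity from the findings above, for the activator and
# for the 3scale-kourier-gateway or istio-ingressgateway pods.
REQUESTS_PER_POD = 2500


def estimate_pods(peak_rps: float, minimum: int = 2, headroom: float = 0.0) -> int:
    """Rough pod count for one data-plane component at a given peak request rate.

    minimum: floor for high availability (2 matches the default configuration).
    headroom: extra fraction of capacity to reserve, for example 0.25 for 25%.
    """
    needed = math.ceil(peak_rps * (1 + headroom) / REQUESTS_PER_POD)
    return max(minimum, needed)
```

For example, a peak of 6000 requests per second gives 3 pods, and the high-availability floor keeps small loads at 2 pods.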

Fast startup and fast response times of your Knative Service user workloads are critical for good performance of the overall system. The Knative Serving components buffer incoming requests when the Knative Service user backend is scaling up or when request concurrency has reached its capacity. If your Knative Service user workload introduces long startup or request latency, it either overloads the activator component (when its CPU and memory configuration is too low) or leads to errors for the calling clients.

Procedure

To fine-tune your installation, use the earlier findings combined with your own test results to configure the KnativeServing custom resource:

A high workload configuration in KnativeServing CR

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
  namespace: knative-serving
spec:
  high-availability:
    replicas: 2 # (1)
  workloads:
    - name: component-name # (2)
      replicas: 2 # (3)
      resources:
        - container: container-name
          requests:
            cpu: # (4)
            memory:
          limits:
            cpu:
            memory:
  podDisruptionBudgets: # (5)
    - name: name-of-pod-disruption-budget
      minAvailable: 1

(1) Set this parameter to at least 2 to make sure you always have at least two instances of every component running. You can also use workloads to override the replicas for certain components.
(2) Use the workloads list to configure specific components. Use the deployment name of the component and set the replicas field.
(3) For the activator, webhook, and 3scale-kourier-gateway components, which use horizontal pod autoscalers (HPAs), the replicas field sets the minimum number of replicas. The actual number of replicas depends on the CPU load and scaling done by the HPAs.
(4) Set the requested and limited CPU and memory according to at least the idle consumption while also taking the earlier findings and your own test results into consideration.
(5) Adjust the PodDisruptionBudgets to a value lower than replicas to avoid problems during node maintenance. The default minAvailable value is 1, so if you increase the required replicas, you must also increase minAvailable.

As each environment is highly specific, it is essential to test and find your own ideal configuration. Use the monitoring and alerting functionality of OpenShift Container Platform to continuously monitor your actual resource consumption and make adjustments if needed.

When you use the OpenShift Serverless and Service Mesh integration, the istio-proxy sidecar containers increase CPU usage.

Chapter 3. Autoscaling

3.1. Autoscaling

Knative Serving provides automatic scaling, or autoscaling, for applications to match incoming demand. For example, if an application is receiving no traffic, and scale-to-zero is enabled, Knative Serving scales the application down to zero replicas. If scale-to-zero is disabled, the application is scaled down to the minimum number of replicas configured for applications on the cluster. Replicas can also be scaled up to meet demand if traffic to the application increases.

Autoscaling settings for Knative services can be global settings that are configured by cluster administrators (or dedicated administrators for Red Hat OpenShift Service on AWS and OpenShift Dedicated), or per-revision settings that are configured for individual services.

You can modify per-revision settings for your services by using the OpenShift Container Platform web console, by modifying the YAML file for your service, or by using the Knative (kn) CLI.

Any limits or targets that you set for a service are measured against a single instance of your application. For example, setting the target annotation to 50 configures the autoscaler to scale the application so that each replica handles 50 requests at a time.
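A simplified model of this per-replica rule divides the total number of concurrent requests by the target and rounds up. The Python function below is a hypothetical illustration, not the autoscaler's implementation: the real Knative Pod Autoscaler averages observed concurrency over a time window and applies scale bounds, both of which this sketch ignores.

```python
import math


def desired_replicas(total_concurrent_requests: float, target_per_replica: float) -> int:
    """Replicas needed so that each one handles about target_per_replica requests."""
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    # Simplification: no time-window averaging and no min-scale or max-scale bounds.
    return math.ceil(total_concurrent_requests / target_per_replica)
```

With a target of 50, for example, 120 concurrent requests lead to 3 replicas in this model.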

3.2. Scale bounds

Scale bounds determine the minimum and maximum numbers of replicas that can serve an application at any given time. You can set scale bounds for an application to help prevent cold starts or control computing costs.

3.2.1. Minimum scale bounds

The min-scale annotation sets the minimum number of replicas that serve an application. If you do not enable scale to zero, the min-scale value defaults to 1.

The min-scale value defaults to 0 replicas when the following conditions apply:

- You do not set the min-scale annotation.
- You enable scaling to zero.
- You use the KPA class.
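The defaulting rules for min-scale can be expressed as a small Python function. This is a hypothetical sketch: the class strings are the Knative autoscaler class names, and the handling of an explicit "0" annotation when scale to zero is unavailable (keeping at least one replica) is an assumption that goes beyond the rules stated above.

```python
from typing import Optional

KPA_CLASS = "kpa.autoscaling.knative.dev"  # Knative Pod Autoscaler (default)
HPA_CLASS = "hpa.autoscaling.knative.dev"  # Kubernetes Horizontal Pod Autoscaler


def effective_min_scale(min_scale_annotation: Optional[str],
                        scale_to_zero_enabled: bool,
                        autoscaler_class: str = KPA_CLASS) -> int:
    """Return the min-scale value that applies to a revision."""
    # Scale to zero needs both the global setting and the KPA class.
    can_scale_to_zero = scale_to_zero_enabled and autoscaler_class == KPA_CLASS
    if min_scale_annotation is None:
        return 0 if can_scale_to_zero else 1
    # Annotation values are strings, for example "2".
    value = int(min_scale_annotation)
    # Assumption: without scale to zero, at least one replica is kept.
    return value if can_scale_to_zero else max(value, 1)
```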

Example service spec with min-scale annotation

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: showcase
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/min-scale: "0"
...

3.2.1.1. Setting the min-scale annotation by using the Knative CLI

Using the Knative (kn) CLI to set the min-scale annotation provides a more streamlined and intuitive user interface over modifying YAML files directly. You can use the kn service command with the --scale-min flag to create or change the min-scale value for a service.

Prerequisites

- You have installed Knative Serving on the cluster.

- You have installed the Knative (kn) CLI.

Procedure

Set the minimum number of replicas for the service by using the --scale-min flag:

$ kn service create <service_name> --image <image_uri> --scale-min <integer>

Example command

$ kn service create showcase --image quay.io/openshift-knative/showcase --scale-min 2

3.2.2. Maximum scale bounds

The max-scale annotation determines the maximum number of replicas that can serve an application. If the max-scale annotation is not set, there is no upper limit for the number of replicas created.
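The combined effect of the two scale bounds on a replica count can be sketched as a clamp. clamp_to_bounds is a hypothetical Python helper; it models an unset max-scale annotation as None and does not model any other special values.

```python
from typing import Optional


def clamp_to_bounds(desired: int, min_scale: int = 0, max_scale: Optional[int] = None) -> int:
    """Apply min-scale and max-scale to a desired replica count."""
    # None models an unset max-scale annotation, which means no upper limit.
    if max_scale is not None and max_scale < min_scale:
        raise ValueError("max-scale must not be lower than min-scale")
    replicas = max(desired, min_scale)
    if max_scale is not None:
        replicas = min(replicas, max_scale)
    return replicas
```

For example, with max-scale set to 10, a demand for 15 replicas is capped at 10, and with min-scale set to 2, an idle service keeps 2 replicas.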

Example service spec with max-scale annotation

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: showcase
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/max-scale: "10"
...

3.2.2.1. Setting the max-scale annotation by using the Knative CLI

Using the Knative (kn) CLI to set the max-scale annotation provides a more streamlined and intuitive user interface over modifying YAML files directly. You can use the kn service command with the --scale-max flag to create or change the max-scale value for a service.

Prerequisites

- You have installed Knative Serving on the cluster.

- You have installed the Knative (kn) CLI.

Procedure

Set the maximum number of replicas for the service by using the --scale-max flag:

$ kn service create <service_name> --image <image_uri> --scale-max <integer>

Example command

$ kn service create showcase --image quay.io/openshift-knative/showcase --scale-max 10

3.3. Concurrency

Concurrency determines the number of simultaneous requests that can be processed by each replica of an application at any given time. Concurrency can be configured as a soft limit or a hard limit:

- A soft limit is a targeted requests limit, rather than a strictly enforced bound. For example, if there is a sudden burst of traffic, the soft limit target can be exceeded.

- A hard limit is a strictly enforced upper bound requests limit. If concurrency reaches the hard limit, surplus requests are buffered and must wait until there is enough free capacity to execute the requests.

Important: Using a hard limit configuration is only recommended if there is a clear use case for it with your application. Specifying a low hard limit might have a negative impact on the throughput and latency of an application, and might cause cold starts.

Adding both a soft target and a hard limit means that the autoscaler targets the soft target number of concurrent requests, but enforces the hard limit value as the maximum number of requests.

If the hard limit value is less than the soft limit value, the soft limit value is tuned down, because there is no need to target more requests than the number that can actually be handled.
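The interaction between the soft target and the hard limit can be sketched in a few lines of Python. effective_soft_target is a hypothetical helper; it uses the containerConcurrency convention described in this chapter, where 0 means that there is no hard limit.

```python
def effective_soft_target(soft_target: float, container_concurrency: int = 0) -> float:
    """Soft concurrency target after applying an optional hard limit.

    container_concurrency: the hard limit; 0 means no hard limit (the default).
    """
    if 0 < container_concurrency < soft_target:
        # Targeting more requests than a replica can accept would be pointless.
        return float(container_concurrency)
    return float(soft_target)
```

For example, a soft target of 200 combined with a hard limit of 50 is tuned down to 50, while a soft target of 20 with the same hard limit stays at 20.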

3.3.1. Configuring a soft concurrency target

A soft limit sets a target for the number of requests rather than a strictly enforced bound. For example, during a sudden traffic burst, concurrency can exceed the soft limit. You can specify a soft concurrency target for your Knative service by setting the autoscaling.knative.dev/target annotation in the spec, or by using the kn service command with the correct flags.

Procedure

Optional: Set the autoscaling.knative.dev/target annotation for your Knative service in the spec of the Service custom resource:

Example service spec

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: showcase
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/target: "200"

Optional: Use the kn service command to specify the --concurrency-target flag:

$ kn service create <service_name> --image <image_uri> --concurrency-target <integer>

Example command to create a service with a concurrency target of 50 requests

$ kn service create showcase --image quay.io/openshift-knative/showcase --concurrency-target 50

3.3.2. Configuring a hard concurrency limit

A hard concurrency limit sets a strict upper bound on the number of requests. When concurrency reaches this limit, the system buffers additional requests until capacity becomes available. You can specify a hard concurrency limit for your Knative service by modifying the containerConcurrency spec, or by using the kn service command with the correct flags.

Procedure

Optional: Set the containerConcurrency spec for your Knative service in the spec of the Service custom resource:

Example service spec

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: showcase
  namespace: default
spec:
  template:
    spec:
      containerConcurrency: 50

The default value is 0, which means that the system does not limit the number of simultaneous requests that can reach a single service replica.

A value greater than 0 sets the exact number of requests that can reach a single service replica at one time. In this example, the system limits concurrency to 50 requests.

Optional: Use the kn service command to specify the --concurrency-limit flag:

$ kn service create <service_name> --image <image_uri> --concurrency-limit <integer>

Example command to create a service with a concurrency limit of 50 requests

$ kn service create showcase --image quay.io/openshift-knative/showcase --concurrency-limit 50

3.3.3. Concurrency target utilization

This value specifies the percentage of the concurrency limit that is actually targeted by the autoscaler. This is also known as specifying the hotness at which a replica runs, which enables the autoscaler to scale up before the defined hard limit is reached.

For example, if the containerConcurrency value is set to 10, and the target-utilization-percentage value is set to 70 percent, the autoscaler creates a new replica when the average number of concurrent requests across all existing replicas reaches 7. Requests numbered 7 to 10 are still sent to the existing replicas, but additional replicas are started in anticipation of being required after the containerConcurrency value is reached.
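The arithmetic in this example can be written out in Python. Both functions are hypothetical illustrations: the threshold calculation follows the description above, and replicas_for_load is a simplified model that ignores the time-window averaging the autoscaler performs.

```python
import math


def scale_up_threshold(container_concurrency: int, target_utilization_percentage: float) -> float:
    """Average concurrent requests per replica at which the autoscaler adds replicas."""
    return container_concurrency * target_utilization_percentage / 100


def replicas_for_load(total_concurrent_requests: float, container_concurrency: int,
                      target_utilization_percentage: float) -> int:
    """Simplified replica count that keeps each replica at or below the threshold."""
    threshold = scale_up_threshold(container_concurrency, target_utilization_percentage)
    return math.ceil(total_concurrent_requests / threshold)
```

With containerConcurrency set to 10 and 70 percent utilization, scale_up_threshold returns 7.0, and 30 concurrent requests would need 5 replicas in this model.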

Example service configured using the target-utilization-percentage annotation

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: showcase
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/target-utilization-percentage: "70"
...

3.4. Scale-to-zero

Knative Serving provides automatic scaling, or autoscaling, for applications to match incoming demand.

3.4.1. Enabling scale-to-zero

You can use the enable-scale-to-zero spec to enable or disable scale-to-zero globally for applications on the cluster.

Prerequisites

- You have installed OpenShift Serverless Operator and Knative Serving on your cluster.

- You have cluster administrator permissions on OpenShift Container Platform, or you have cluster or dedicated administrator permissions on Red Hat OpenShift Service on AWS or OpenShift Dedicated.

- You are using the default Knative Pod Autoscaler. The scale to zero feature is not available if you are using the Kubernetes Horizontal Pod Autoscaler.

Procedure

Change the enable-scale-to-zero spec in the KnativeServing custom resource (CR):

Example KnativeServing CR

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    autoscaler:
      enable-scale-to-zero: "false" # (1)

(1) The enable-scale-to-zero spec accepts either true or false. When you set the value to true, the system enables scale-to-zero. When you set the value to false, the system scales applications down to the configured minimum scale bound. The default value is true.

3.4.2. Configuring the scale-to-zero grace period

Knative Serving provides automatic scaling down to zero pods for applications. You can use the scale-to-zero-grace-period spec to define an upper bound time limit that Knative waits for scale-to-zero machinery to be in place before the last replica of an application is removed.

Prerequisites

- You have installed OpenShift Serverless Operator and Knative Serving on your cluster.

- You have cluster administrator permissions on OpenShift Container Platform, or you have cluster or dedicated administrator permissions on Red Hat OpenShift Service on AWS or OpenShift Dedicated.

- You are using the default Knative Pod Autoscaler. The scale-to-zero feature is not available if you are using the Kubernetes Horizontal Pod Autoscaler.

Procedure

Modify the scale-to-zero-grace-period spec in the KnativeServing custom resource (CR):

Example KnativeServing CR

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    autoscaler:
      scale-to-zero-grace-period: "30s"

The grace period is a duration string, such as "30s". The default value is 30 seconds.
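Both settings in this section belong to the spec.config.autoscaler map of the KnativeServing CR. Values in spec.config are strings, which is why the examples quote "false" and "30s". If you generate the CR from a script, the following minimal Python sketch keeps those values as strings. The helper name autoscaler_cr is hypothetical. The sketch assumes that you save the printed JSON to a file and apply it with oc apply -f, which accepts JSON as well as YAML.

```python
import json


def autoscaler_cr(scale_to_zero: bool, grace_period_seconds: int) -> dict:
    """Build a KnativeServing CR (as a dict) with the two autoscaler settings.

    Hypothetical helper: values under spec.config are strings, so the
    boolean and the duration are converted before they are stored.
    """
    if grace_period_seconds <= 0:
        raise ValueError("grace period must be a positive number of seconds")
    return {
        "apiVersion": "operator.knative.dev/v1beta1",
        "kind": "KnativeServing",
        "metadata": {"name": "knative-serving", "namespace": "knative-serving"},
        "spec": {
            "config": {
                "autoscaler": {
                    # "true" or "false" as a string, not a JSON boolean
                    "enable-scale-to-zero": "true" if scale_to_zero else "false",
                    # Duration string such as "30s"
                    "scale-to-zero-grace-period": f"{grace_period_seconds}s",
                }
            }
        },
    }


if __name__ == "__main__":
    # Print JSON that can be saved to a file and applied with `oc apply -f`
    print(json.dumps(autoscaler_cr(scale_to_zero=True, grace_period_seconds=30), indent=2))
```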

Chapter 4. Configuring OpenShift Serverless applications

4.1. Multi-container support for Serving

You can deploy a multi-container pod by using a single Knative service. This method is useful for separating application responsibilities into smaller, specialized parts.

4.1.1. Configuring a multi-container service

By default, Knative Serving supports multi-container pods. You can specify multiple containers in the service to create a multi-container pod.

Procedure

Change your service to include additional containers. Only one container can handle requests, so specify ports for exactly one container. Here is an example configuration with two containers:

Multi-container configuration

apiVersion: serving.knative.dev/v1
kind: Service
...
spec:
  template:
    spec:
      containers:
        - name: first-container  # First container, which handles requests
          image: gcr.io/knative-samples/helloworld-go
          ports:
            - containerPort: 8080  # Port of the container that handles requests
        - name: second-container  # Second container, which declares no ports
          image: gcr.io/knative-samples/helloworld-java
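You can check the rule that exactly one container declares ports before you apply a multi-container service. The following minimal Python sketch assumes that the container list is already available as Python dicts, for example parsed from your YAML file with a library of your choice. The function name serving_container is hypothetical.

```python
from __future__ import annotations


def serving_container(containers: list[dict]) -> str:
    """Return the name of the only container that declares `ports`.

    Raises ValueError when zero or several containers declare ports,
    because only one container in a Knative service can handle requests.
    """
    with_ports = [c.get("name", "<unnamed>") for c in containers if c.get("ports")]
    if len(with_ports) != 1:
        raise ValueError(
            f"expected exactly one container with ports, found {len(with_ports)}: {with_ports}"
        )
    return with_ports[0]


# Containers from the example configuration above
containers = [
    {
        "name": "first-container",
        "image": "gcr.io/knative-samples/helloworld-go",
        "ports": [{"containerPort": 8080}],
    },
    {"name": "second-container", "image": "gcr.io/knative-samples/helloworld-java"},
]
print(serving_container(containers))  # first-container
```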

4.1.2. Probing a multi-container service

You can specify readiness and liveness probes for multiple containers. This feature is not enabled by default, and you must configure it by using the KnativeServing custom resource (CR).

Procedure

Configure multi-container probing for your service by enabling the multi-container-probing feature in the KnativeServing CR.

Multi-container probing configuration

...
spec:
  config:
    features:
      "multi-container-probing": enabled  # Enables the multi-container-probing feature
...

Apply the updated KnativeServing CR:

$ oc apply -f <filename>

Modify your multi-container service to include the specified probes.

Multi-container probing

apiVersion: serving.knative.dev/v1
kind: Service
...
spec:
  template:
    spec:
      containers:
        - name: first-container
          image: ghcr.io/knative/helloworld-go:latest
          ports:
            - containerPort: 8080
          readinessProbe:  # Readiness probe for the first container
            httpGet:
              port: 8080
        - name: second-container
          image: gcr.io/knative-samples/helloworld-java
          readinessProbe:  # Readiness probe for the second container
            httpGet:
              port: 8090
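The two steps above, enabling the feature and adding probes, can be checked together before you roll out the service. The following minimal Python sketch assumes that both the KnativeServing CR and the service are available as dicts. The function name probing_gaps is hypothetical. It reports only readiness probes, because those are what the example uses.

```python
from __future__ import annotations


def probing_gaps(knative_serving: dict, service: dict) -> list[str]:
    """List reasons why per-container readiness probing may not be in effect."""
    gaps = []
    features = knative_serving.get("spec", {}).get("config", {}).get("features", {})
    if features.get("multi-container-probing") != "enabled":
        gaps.append("multi-container-probing is not enabled in the KnativeServing CR")
    for container in service["spec"]["template"]["spec"]["containers"]:
        if "readinessProbe" not in container:
            gaps.append(f"{container.get('name', '<unnamed>')} has no readinessProbe")
    return gaps


knative_serving = {"spec": {"config": {"features": {"multi-container-probing": "enabled"}}}}
service = {
    "spec": {"template": {"spec": {"containers": [
        {"name": "first-container", "ports": [{"containerPort": 8080}],
         "readinessProbe": {"httpGet": {"port": 8080}}},
        {"name": "second-container", "readinessProbe": {"httpGet": {"port": 8090}}},
    ]}}}
}
print(probing_gaps(knative_serving, service))  # []
```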


4.2. EmptyDir volumes

emptyDir volumes are empty volumes that are created when a pod is created, and are used to provide temporary working disk space. emptyDir volumes are deleted when the pod they were created for is deleted.

4.2.1. Configuring the EmptyDir extension

You can use the kubernetes.podspec-volumes-emptydir extension to control whether Knative Serving allows emptyDir volumes. To enable using emptyDir volumes, you must change the KnativeServing custom resource (CR) to include the following YAML:

Example KnativeServing CR

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    features:
      kubernetes.podspec-volumes-emptydir: enabled
...

4.3. Persistent Volume Claims for Serving

Some serverless applications require permanent data storage. By configuring different volume types, you can provide data storage for Knative services. Serving supports mounting volume types such as secret, configMap, projected, and emptyDir.

You can configure persistent volume claims (PVCs) for your Knative services. Persistent volume types are implemented as plugins. To determine if there are any persistent volume types available, you can check the available or installed storage classes in your cluster. Persistent volumes are supported, but require a feature flag to be enabled.

Mounting large volumes can considerably delay the start time of the application.

4.3.1. Enabling PVC support

Procedure

To enable Knative Serving to use PVCs and write to them, change the KnativeServing custom resource (CR) to include the following YAML:

Enabling PVCs with write access

...
spec:
  config:
    features:
      "kubernetes.podspec-persistent-volume-claim": enabled
      "kubernetes.podspec-persistent-volume-write": enabled
...

- The kubernetes.podspec-persistent-volume-claim extension controls whether Knative Serving can use persistent volumes (PVs).
- The kubernetes.podspec-persistent-volume-write extension controls whether PVs are available to Knative Serving with write access.

To claim a PV, change your service to include the PV configuration. For example, you might have a persistent volume claim with the following configuration:

Note

Use a storage class that supports the access mode you are requesting. For example, you can use the ocs-storagecluster-cephfs storage class for the ReadWriteMany access mode. The ocs-storagecluster-cephfs storage class is supported and comes from Red Hat OpenShift Data Foundation.

PersistentVolumeClaim configuration

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: example-pv-claim
  namespace: my-ns
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: ocs-storagecluster-cephfs
  resources:
    requests:
      storage: 1Gi

In this case, to claim a PV with write access, change your service as follows:

Knative service PVC configuration

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  namespace: my-ns
...
spec:
  template:
    spec:
      containers:
        - # ...
          volumeMounts:  # Mount the volume in the container
            - mountPath: /data
              name: mydata
              readOnly: false
      volumes:
        - name: mydata
          persistentVolumeClaim:  # Reference the PVC by name
            claimName: example-pv-claim
            readOnly: false  # Claim the PV with write access

Note

To successfully use persistent storage in Knative services, you need additional configuration, such as the user permissions for the Knative container user.
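A common mistake in this configuration is a mismatch between volumeMounts[].name, volumes[].name, and claimName, or a readOnly flag that is left at true. The following minimal Python sketch checks those links, assuming that the service is available as a dict. The function name pvc_mount_issues is hypothetical. Both readOnly fields default to false in Kubernetes.

```python
from __future__ import annotations


def pvc_mount_issues(service: dict, claim_name: str) -> list[str]:
    """Check that every volumeMount refers to a declared volume and that
    PVC-backed volumes use `claim_name` and are writable."""
    pod_spec = service["spec"]["template"]["spec"]
    volumes = {v["name"]: v for v in pod_spec.get("volumes", [])}
    issues = []
    for container in pod_spec.get("containers", []):
        for mount in container.get("volumeMounts", []):
            volume = volumes.get(mount["name"])
            if volume is None:
                issues.append(f"{mount['mountPath']}: no volume named {mount['name']}")
                continue
            claim = volume.get("persistentVolumeClaim")
            if claim is None:
                continue  # not a PVC-backed volume, nothing more to check
            if claim.get("claimName") != claim_name:
                issues.append(f"{mount['name']}: claims {claim.get('claimName')}, expected {claim_name}")
            # readOnly defaults to false for both the mount and the PVC source
            if mount.get("readOnly", False) or claim.get("readOnly", False):
                issues.append(f"{mount['name']}: mounted read-only")
    return issues


# Service from the example above, reduced to the relevant fields
service = {"spec": {"template": {"spec": {
    "containers": [{"volumeMounts": [{"mountPath": "/data", "name": "mydata", "readOnly": False}]}],
    "volumes": [{"name": "mydata",
                 "persistentVolumeClaim": {"claimName": "example-pv-claim", "readOnly": False}}],
}}}}
print(pvc_mount_issues(service, "example-pv-claim"))  # []
```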


4.4. Init containers

Init containers are specialized containers that are run before application containers in a pod. They are generally used to implement initialization logic for an application, which may include running setup scripts or downloading required configurations. You can enable the use of init containers for Knative services by modifying the KnativeServing custom resource (CR).

Init containers may cause longer application start-up times and should be used with caution for serverless applications, which are expected to scale up and down frequently.

4.4.1. Enabling init containers

You can enable the use of init containers in Knative Serving workloads by configuring the KnativeServing custom resource. Init containers run before application containers start and are useful for setup tasks such as configuration, database migrations, or permission adjustments.

Prerequisites

- You have installed OpenShift Serverless Operator and Knative Serving on your cluster.

- You have cluster administrator permissions on OpenShift Container Platform, or you have cluster or dedicated administrator permissions on Red Hat OpenShift Service on AWS or OpenShift Dedicated.

Procedure

Enable the use of init containers by adding the kubernetes.podspec-init-containers flag to the KnativeServing CR:

Example KnativeServing CR

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    features:
      kubernetes.podspec-init-containers: enabled
...

4.5. Startup probes

Startup probes verify whether a service has started successfully, helping to reduce cold start times for containers with slow startup processes. Startup probes run only during the container's startup phase and stop running after the first successful check. If a startup probe fails, the kubelet kills the container, and the container is subject to its defined restartPolicy.

4.5.1. Progress deadline

By default, services have a progress deadline that defines the time limit for a service to complete its initial startup. When using startup probes, ensure that the progress deadline is set to exceed the maximum time required by the startup probes. If the progress deadline is set too low, the startup probes might not finish before the deadline is reached, which can prevent the service from starting.

Consider increasing the progress deadline if you encounter any of these conditions in your deployment:

- The service image takes a long time to pull due to its size.
- The service takes a long time to become READY because of initial cache priming.
- The cluster relies on autoscaling to allocate resources for new pods.

4.5.2. Configuring startup probing

For OpenShift Serverless Serving, startup probes are not defined by default. You can define startup probes for your containers in your deployment configuration.

Procedure

Define startup probes for your service by modifying your deployment configuration. The following example shows a configuration with two containers:

Example of defined startup probes

apiVersion: serving.knative.dev/v1
kind: Service
# ...
spec:
  template:
    spec:
      containers:
        - name: first-container
          image: <image>
          ports:
            - containerPort: 8080
          # ...
          startupProbe:  # Startup probe for the first container
            httpGet:
              port: 8080
              path: "/"
        - name: second-container
          image: <image>
          # ...
          startupProbe:  # Startup probe for the second container
            httpGet:
              port: 8081
              path: "/"

4.5.3. Configuring the progress deadline

You can configure progress deadline settings to specify the maximum time allowed for your deployment to progress before the system reports a failure for the Knative Revision. You can specify the time limit in seconds or minutes.

To configure the progress deadline effectively, consider the following startup probe parameters:

- initialDelaySeconds
- failureThreshold
- periodSeconds
- timeoutSeconds
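To compare the progress deadline with these probe parameters, you can estimate how long a startup probe might keep failing before the container is restarted. The following minimal Python sketch assumes a conservative bound of initialDelaySeconds + failureThreshold × periodSeconds + timeoutSeconds. It falls back to the Kubernetes default probe values (periodSeconds 10, failureThreshold 3, timeoutSeconds 1, initialDelaySeconds 0) for missing fields. It parses only the h, m, s, and ms units of Go duration strings. All function names are hypothetical.

```python
from __future__ import annotations

import re

# Seconds per unit for the subset of Go duration units handled here
_UNIT_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0, "ms": 0.001}
_PART = re.compile(r"(\d+(?:\.\d+)?)(ms|h|m|s)")
_FULL = re.compile(r"(?:\d+(?:\.\d+)?(?:ms|h|m|s))+")


def parse_duration(value: str) -> float:
    """Parse Go-style durations such as "60s", "10m", or "1m30s" into seconds."""
    if not _FULL.fullmatch(value):
        raise ValueError(f"unsupported duration: {value!r}")
    return sum(float(n) * _UNIT_SECONDS[u] for n, u in _PART.findall(value))


def startup_budget_seconds(probe: dict) -> int:
    """Conservative upper bound on how long a startup probe can keep failing.

    Missing fields fall back to the Kubernetes defaults.
    """
    initial = probe.get("initialDelaySeconds", 0)
    period = probe.get("periodSeconds", 10)
    failures = probe.get("failureThreshold", 3)
    timeout = probe.get("timeoutSeconds", 1)
    return initial + failures * period + timeout


def deadline_is_sufficient(progress_deadline: str, probes: list[dict]) -> bool:
    """True if the progress deadline exceeds the largest startup budget."""
    worst = max((startup_budget_seconds(p) for p in probes), default=0)
    return parse_duration(progress_deadline) > worst


probe = {"initialDelaySeconds": 5, "periodSeconds": 10, "failureThreshold": 6, "timeoutSeconds": 2}
print(startup_budget_seconds(probe))            # 67
print(deadline_is_sufficient("60s", [probe]))   # False
print(deadline_is_sufficient("600s", [probe]))  # True
```

The sketch takes the maximum over containers because their startup probes run concurrently. Image pull time and init containers are not modeled, so leave extra headroom on top of the estimate.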

If the initial scale is not achieved within the specified time limit, the Knative Autoscaler component scales the revision to 0, and the Knative service enters a terminal Failed state.

By default, the system sets the progress deadline to 600 seconds. Specify this value as a Go time.Duration string, rounded to the nearest second.

Procedure

To configure the progress deadline setting, add the serving.knative.dev/progress-deadline annotation to the revision template of your service.

Example of progress deadline set to 60 seconds

apiVersion: serving.knative.dev/v1
kind: Service
...
spec:
  template:
    metadata:
      annotations:
        serving.knative.dev/progress-deadline: "60s"
    spec:
      containers:
        - image: ghcr.io/knative/helloworld-go:latest

4.6. Resolving image tags to digests

If the Knative Serving controller has access to the container registry, Knative Serving resolves image tags to a digest when you create a revision of a service. This is known as tag-to-digest resolution, and helps to provide consistency for deployments.

4.6.1. Tag-to-digest resolution

To give the controller access to the container registry on OpenShift Container Platform, you must create a secret and then configure controller custom certificates. You can configure controller custom certificates by modifying the controller-custom-certs spec in the KnativeServing custom resource (CR). The secret must reside in the same namespace as the KnativeServing CR.

If a secret is not included in the KnativeServing CR, this setting defaults to using public key infrastructure (PKI). When using PKI, the cluster-wide certificates are automatically injected into the Knative Serving controller by using the config-service-sa config map. The OpenShift Serverless Operator populates the config-service-sa config map with cluster-wide certificates and mounts the config map as a volume to the controller.

4.6.1.1. Configuring tag-to-digest resolution by using a secret

If the controller-custom-certs spec uses the Secret type, the system mounts the secret as a volume. Knative components consume the secret directly and assume that the secret has the required certificates.

Prerequisites

- You have cluster administrator permissions on OpenShift Container Platform, or you have cluster or dedicated administrator permissions on Red Hat OpenShift Service on AWS or OpenShift Dedicated.

- You have installed the OpenShift Serverless Operator and Knative Serving on your cluster.

Procedure

Create a secret:

Example command

$ oc -n knative-serving create secret generic custom-secret --from-file=<secret_name>.crt=<path_to_certificate>

Configure the controller-custom-certs spec in the KnativeServing custom resource (CR) to use the Secret type:

Example KnativeServing CR

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
  namespace: knative-serving
spec:
  controller-custom-certs:
    name: custom-secret
    type: Secret

4.7. Configuring deployment resources

In Knative Serving, the config-deployment config map contains settings that determine how Kubernetes Deployment resources are configured for Knative services. In OpenShift Serverless Serving, you can configure these settings in the deployment section of your KnativeServing custom resource (CR).

You can use the deployment section to configure the following:

- Tag resolution

- Runtime environments

- Progress deadlines

4.7.1. Skipping tag resolution

Skipping tag resolution in OpenShift Serverless Serving can speed up deployments by avoiding unnecessary queries to the container registry, reducing latency and dependency on registry availability.

You can configure Serving to skip tag resolution by modifying the registriesSkippingTagResolving setting in your KnativeServing custom resource (CR).

Procedure

In your KnativeServing CR, modify the registriesSkippingTagResolving setting with the list of registries for which tag resolution is skipped:

Example of configured tag resolution skipping

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    deployment:
      registriesSkippingTagResolving: "registry.example.com, another.registry.com"
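The registriesSkippingTagResolving value is a single comma-separated string, so whitespace around each entry does not matter. The following minimal Python sketch predicts which images skip tag resolution. It is a simplified model, not Knative's implementation. It assumes the common Docker convention that the first path component of an image reference is a registry host only if it contains "." or ":" or is "localhost". References without an explicit registry, such as Docker Hub short names, are treated as not matching. The function names are hypothetical.

```python
from __future__ import annotations


def parse_skip_list(setting: str) -> set[str]:
    """Split the comma-separated registriesSkippingTagResolving value."""
    return {part.strip() for part in setting.split(",") if part.strip()}


def image_registry(image: str) -> str | None:
    """Return the registry host of an image reference, or None if it has none.

    Follows the common Docker convention: the first path component is a
    registry host only if it contains "." or ":" or is "localhost".
    """
    first, sep, _ = image.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return None


def skips_tag_resolution(image: str, setting: str) -> bool:
    """True if the image's registry appears in the skip list."""
    registry = image_registry(image)
    return registry is not None and registry in parse_skip_list(setting)


setting = "registry.example.com, another.registry.com"
print(skips_tag_resolution("registry.example.com/team/app:1.0", setting))   # True
print(skips_tag_resolution("quay.io/openshift-knative/showcase", setting))  # False
```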

4.7.2. Configuring selectable RuntimeClassName

You can configure OpenShift Serverless Serving to set a specific RuntimeClassName resource for Deployments by updating the runtime-class-name setting in your KnativeServing custom resource (CR).

This setting interacts with service labels, applying either the default RuntimeClassName or the one that matches the most labels associated with the service.

Procedure

In your KnativeServing CR, configure the runtime-class-name setting:

Example of configured runtime-class-name setting

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    deployment:
      runtime-class-name: |
        kata: {}
        gvisor:
          selector:
            my-label: selector
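The selection rule in this section says that an entry without a selector acts as the default, and a labeled service gets the entry that matches the most labels. The following Python sketch is a simplified model of that rule, not Knative's code. It assumes that the runtime-class-name string has already been parsed into a dict. It also breaks ties alphabetically, which is an assumption of the sketch. The function name select_runtime_class is hypothetical.

```python
from __future__ import annotations


def select_runtime_class(classes: dict[str, dict], labels: dict[str, str]) -> str | None:
    """Pick a RuntimeClassName for a service with the given labels.

    An entry with no selector always matches (the default). An entry with a
    selector matches only if every selector label is present with the same
    value. The matching entry with the most selector labels wins; ties go to
    the alphabetically first name (an assumption of this sketch).
    """
    best_name, best_score = None, -1
    for name in sorted(classes):
        selector = (classes[name] or {}).get("selector") or {}
        if all(labels.get(k) == v for k, v in selector.items()):
            score = len(selector)
            if score > best_score:
                best_name, best_score = name, score
    return best_name


# Parsed form of the runtime-class-name example above
classes = {"kata": {}, "gvisor": {"selector": {"my-label": "selector"}}}
print(select_runtime_class(classes, {"my-label": "selector"}))  # gvisor
print(select_runtime_class(classes, {"app": "other"}))          # kata
```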

4.7.3. Progress deadline

By default, services have a progress deadline that defines the time limit for a service to complete its initial startup.

Consider increasing the progress deadline if you encounter any of these conditions in your deployment:

- The service image takes a long time to pull due to its size.
- The service takes a long time to become READY because of initial cache priming.
- The cluster relies on autoscaling to allocate resources for new pods.

If the initial scale is not achieved within the specified time limit, the Knative Autoscaler component scales the revision to 0, and the service enters a terminal Failed state.

4.7.3.1. Configuring the progress deadline

Configure progress deadline settings to set the maximum time allowed in seconds or minutes for deployment progress before the system reports a Knative Revision failure.

By default, the progress deadline is set to 600 seconds. This value is specified as a Go time.Duration string and must be rounded to the nearest second.

Procedure

Configure the progress deadline by modifying your KnativeServing custom resource (CR).

In your KnativeServing CR, set the value of progressDeadline:

Example of progress deadline set to 60 seconds

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    deployment:
      progressDeadline: "60s"

4.8. Configuring Kourier

Kourier is a lightweight Kubernetes-native Ingress for Knative Serving. Kourier acts as a gateway for Knative, routing HTTP traffic to Knative services.

4.8.1. Accessing the current Envoy bootstrap configuration

The Envoy proxy component in Kourier handles inbound and outbound HTTP traffic for the Knative services. By default, Kourier has an Envoy bootstrap configuration in the kourier-bootstrap config map in the knative-serving-ingress namespace.

Procedure

To get the current Envoy bootstrap configuration, run the following command:

Example command

$ oc describe cm kourier-bootstrap -n knative-serving-ingress

For example, with the default configuration, the example command produces the following output:

Example output

Name:         kourier-bootstrap
Namespace:    knative-serving-ingress
Labels:       app.kubernetes.io/component=net-kourier
              app.kubernetes.io/name=knative-serving
              app.kubernetes.io/version=release-v1.10
              networking.knative.dev/ingress-provider=kourier
              serving.knative.openshift.io/ownerName=knative-serving
              serving.knative.openshift.io/ownerNamespace=knative-serving
Annotations:  manifestival: new

Example Data output

dynamic_resources:
  ads_config:
    transport_api_version: V3
    api_type: GRPC
    rate_limit_settings: {}
    grpc_services:
    - envoy_grpc: {cluster_name: xds_cluster}
  cds_config:
    resource_api_version: V3
    ads: {}
  lds_config:
    resource_api_version: V3
    ads: {}
node:
  cluster: kourier-knative
  id: 3scale-kourier-gateway
static_resources:
  listeners:
    - name: stats_listener
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9000
      filter_chains:
        - filters:
            - name: envoy.filters.network.http_connection_manager
              typed_config:
                "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
                stat_prefix: stats_server
                http_filters:
                  - name: envoy.filters.http.router
                    typed_config:
                      "@type": type.googleapis.com/envoy.extensions.filters.http.router.v3.Router
                route_config:
                  virtual_hosts:
                    - name: admin_interface
                      domains:
                        - "*"
                      routes:
                        - match:
                            safe_regex:
                              regex: '/(certs|stats(/prometheus)?|server_info|clusters|listeners|ready)?'
                            headers:
                              - name: ':method'
                                string_match:
                                  exact: GET
                          route:
                            cluster: service_stats
  clusters:
    - name: service_stats
      connect_timeout: 0.250s
      type: static
      load_assignment:
        cluster_name: service_stats
        endpoints:
          lb_endpoints:
            endpoint:
              address:
                pipe:
                  path: /tmp/envoy.admin
    - name: xds_cluster
      # This keepalive is recommended by envoy docs.
      # https://www.envoyproxy.io/docs/envoy/latest/api-docs/xds_protocol
      typed_extension_protocol_options:
        envoy.extensions.upstreams.http.v3.HttpProtocolOptions:
          "@type": type.googleapis.com/envoy.extensions.upstreams.http.v3.HttpProtocolOptions
          explicit_http_config:
            http2_protocol_options:
              connection_keepalive:
                interval: 30s
                timeout: 5s
      connect_timeout: 1s
      load_assignment:
        cluster_name: xds_cluster
        endpoints:
          lb_endpoints:
            endpoint:
              address:
                socket_address:
                  address: "net-kourier-controller.knative-serving-ingress.svc.cluster.local."
                  port_value: 18000
      type: STRICT_DNS
admin:
  access_log:
    - name: envoy.access_loggers.stdout
      typed_config:
        "@type": type.googleapis.com/envoy.extensions.access_loggers.stream.v3.StdoutAccessLog
  address:
    pipe:
      path: /tmp/envoy.admin
layered_runtime:
  layers:
    - name: static-layer
      static_layer:
        envoy.reloadable_features.override_request_timeout_by_gateway_timeout: false

Example BinaryData output

Events:  <none>

4.8.2. Customizing kourier-bootstrap for Kourier gateways

The Envoy proxy component in Kourier handles inbound and outbound HTTP traffic for the Knative services. By default, Kourier has an Envoy bootstrap configuration in the kourier-bootstrap config map in the knative-serving-ingress namespace. You can replace this config map with a custom one.

Prerequisites

- You have installed the OpenShift Serverless Operator and Knative Serving.

- You have cluster administrator permissions on OpenShift Container Platform, or you have cluster or dedicated administrator permissions on Red Hat OpenShift Service on AWS or OpenShift Dedicated.

Procedure

Specify a custom bootstrapping config map by changing the spec.ingress.kourier.bootstrap-configmap field in the KnativeServing custom resource (CR):

Example KnativeServing CR

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
  namespace: knative-serving
spec:
  config:
    network:
      ingress-class: kourier.ingress.networking.knative.dev
  ingress:
    kourier:
      bootstrap-configmap: my-configmap
      enabled: true
  # ...

4.8.3. Enabling administrator interface access

You can change the Envoy bootstrap configuration to enable access to the administrator interface.

This procedure assumes sufficient knowledge of Knative, because changing the Envoy bootstrap configuration might cause Knative to fail. Red Hat does not support custom configurations that are not tested or shipped with the product.

Prerequisites

- You have installed the OpenShift Serverless Operator and Knative Serving.

- You have cluster administrator permissions on OpenShift Container Platform, or you have cluster or dedicated administrator permissions on Red Hat OpenShift Service on AWS or OpenShift Dedicated.

Procedure

To enable administrator interface access, locate this configuration in your bootstrapping config map:

pipe:
  path: /tmp/envoy.admin

Substitute it with the following configuration:

socket_address:
  address: 127.0.0.1
  port_value: 9901

This configuration enables access to the Envoy admin interface on the loopback address (127.0.0.1) and port 9901.

Apply the socket_address configuration in the service_stats cluster configuration and in the admin configuration:

The first is in the service_stats cluster configuration:

clusters:
  - name: service_stats
    connect_timeout: 0.250s
    type: static
    load_assignment:
      cluster_name: service_stats
      endpoints:
        lb_endpoints:
          endpoint:
            address:
              socket_address:
                address: 127.0.0.1
                port_value: 9901

The second is in the admin configuration:

admin:
  access_log:
    - name: envoy.access_loggers.stdout
      typed_config:
        "@type": type.googleapis.com/envoy.extensions.access_loggers.stream.v3.StdoutAccessLog
  address:
    socket_address:
      address: 127.0.0.1
      port_value: 9901
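The substitution above has to be made in two places. If you edit the bootstrap programmatically, you can apply it to every matching address at once. The following minimal Python sketch assumes that you have loaded the Envoy bootstrap YAML from your custom config map into a dict with a YAML library of your choice, and that you write it back yourself. It replaces every address equal to the /tmp/envoy.admin pipe and leaves other addresses, such as the stats listener on port 9000, unchanged. The function name expose_admin_on_loopback is hypothetical.

```python
from __future__ import annotations

# The Unix-socket admin address used in the default Kourier bootstrap
ADMIN_PIPE = {"pipe": {"path": "/tmp/envoy.admin"}}


def expose_admin_on_loopback(bootstrap: dict, port: int = 9901) -> dict:
    """Return a copy of an Envoy bootstrap config in which every
    `address: {pipe: {path: /tmp/envoy.admin}}` is replaced by a
    loopback socket address. The input is not modified."""

    def walk(node):
        if isinstance(node, dict):
            out = {}
            for key, value in node.items():
                if key == "address" and value == ADMIN_PIPE:
                    out[key] = {"socket_address": {"address": "127.0.0.1", "port_value": port}}
                else:
                    out[key] = walk(value)
            return out
        if isinstance(node, list):
            return [walk(item) for item in node]
        return node  # scalars are immutable, so they can be shared

    return walk(bootstrap)


patched = expose_admin_on_loopback({"admin": {"address": {"pipe": {"path": "/tmp/envoy.admin"}}}})
print(patched["admin"]["address"])
```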

4.9. Restrictive network policies

4.9.1. Clusters with restrictive network policies

If you are using a cluster that multiple users have access to, your cluster might use network policies to control which pods, services, and namespaces can communicate with each other over the network. If your cluster uses restrictive network policies, Knative system pods might not be able to access your Knative application. For example, if your namespace has the following network policy, which denies all requests, Knative system pods cannot access your Knative application:

Example NetworkPolicy object that denies all requests to the namespace

kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: deny-by-default
  namespace: example-namespace
spec:
  podSelector:
  ingress: []

4.9.2. Enabling communication with Knative applications on a cluster with restrictive network policies

To allow access to your applications from Knative system pods, you must add a label to each of the Knative system namespaces, and then create a NetworkPolicy object in your application namespace that allows access to the namespace for other namespaces that have this label.

A network policy that denies requests to non-Knative services on your cluster still prevents access to these services. However, by allowing access from Knative system namespaces to your Knative application, you are allowing access to your Knative application from all namespaces in the cluster.

If you do not want to allow access to your Knative application from all namespaces on the cluster, you might want to use JSON Web Token authentication for Knative services instead. JSON Web Token authentication for Knative services requires Service Mesh.

Prerequisites

- You have installed the OpenShift CLI (oc).
- You have installed the OpenShift Serverless Operator and Knative Serving on your cluster.

Procedure

Add the knative.openshift.io/system-namespace=true label to each Knative system namespace that requires access to your application:

Label the knative-serving namespace:

$ oc label namespace knative-serving knative.openshift.io/system-namespace=true

Label the knative-serving-ingress namespace:

$ oc label namespace knative-serving-ingress knative.openshift.io/system-namespace=true

Label the knative-eventing namespace:

$ oc label namespace knative-eventing knative.openshift.io/system-namespace=true

Label the knative-kafka namespace:

$ oc label namespace knative-kafka knative.openshift.io/system-namespace=true

Create a NetworkPolicy object in your application namespace to allow access from namespaces with the knative.openshift.io/system-namespace label:

Example NetworkPolicy object

apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: <network_policy_name>  # The name of your network policy
  namespace: <namespace>  # The namespace where your application exists
spec:
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              knative.openshift.io/system-namespace: "true"
  podSelector: {}
  policyTypes:
    - Ingress
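As the earlier note in this section warns, this policy admits traffic from every namespace that carries the label, not only from Knative itself. The following simplified Python sketch lists which namespaces a policy like the example would admit. It assumes that the policy and a map of namespace labels are available as dicts. It evaluates only namespaceSelector.matchLabels peers. It does not model matchExpressions, podSelector-only or ipBlock peers, or rules without a from list, which admit all sources. The function name admitted_namespaces is hypothetical.

```python
from __future__ import annotations


def admitted_namespaces(policy: dict, namespace_labels: dict[str, dict[str, str]]) -> set[str]:
    """Return the namespaces that the policy's ingress rules admit traffic from.

    Simplification: only `namespaceSelector.matchLabels` peers are evaluated.
    matchExpressions, podSelector-only peers, ipBlock peers, and rules without
    a `from` list (which admit all sources) are not modeled.
    """
    admitted: set[str] = set()
    for rule in policy.get("spec", {}).get("ingress") or []:
        for peer in rule.get("from") or []:
            selector = peer.get("namespaceSelector")
            if selector is None:
                continue
            match = selector.get("matchLabels") or {}
            for namespace, labels in namespace_labels.items():
                if all(labels.get(k) == v for k, v in match.items()):
                    admitted.add(namespace)
    return admitted


policy = {"spec": {
    "ingress": [{"from": [{"namespaceSelector": {
        "matchLabels": {"knative.openshift.io/system-namespace": "true"}}}]}],
    "podSelector": {},
    "policyTypes": ["Ingress"],
}}
namespaces = {
    "knative-serving": {"knative.openshift.io/system-namespace": "true"},
    "knative-serving-ingress": {"knative.openshift.io/system-namespace": "true"},
    "other-app": {},
}
print(sorted(admitted_namespaces(policy, namespaces)))  # ['knative-serving', 'knative-serving-ingress']
```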

4.10. Configuring revision timeouts

You can configure timeout durations for revisions globally or individually to control the time spent on requests.

4.10.1. Configuring revision timeout

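You can configure the revision timeout, which is the maximum duration in seconds that a revision is allowed to take to respond to a request. You can set it as a global default or for an individual revision.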

Prerequisites

- You have installed the OpenShift Serverless Operator and Knative Serving.

You have the required permissions for your cluster:

- Cluster administrator permissions on OpenShift Container Platform

- Cluster administrator or dedicated administrator permissions on Red Hat OpenShift Service on AWS

- Cluster administrator or dedicated administrator permissions on OpenShift Dedicated

Procedure

Choose the appropriate method to configure the revision timeout:

To configure the revision timeout globally, set the revision-timeout-seconds field in the KnativeServing custom resource (CR):

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
  namespace: knative-serving
spec:
  config:
    defaults:
      revision-timeout-seconds: "300"

To configure the timeout per revision, set the timeoutSeconds field in your service definition:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  namespace: my-ns
spec:
  template:
    spec:
      timeoutSeconds: 300
      containers:
        - image: ghcr.io/knative/helloworld-go:latest

Note

To set the revision timeout to a value over 600 seconds (10 minutes), you must increase the default OpenShift Container Platform route timeout and the maximum revision timeout.

For instructions on how to configure timeouts for requests exceeding the default 600 seconds (10 minutes), see "Long-running requests".

4.10.2. Configuring maximum revision timeout

By setting the maximum revision timeout, you ensure that no revision exceeds a specific limit. The maximum revision timeout must not exceed the terminationGracePeriodSeconds value of the activator to prevent the activator from disrupting in-flight requests.

Prerequisites

- You have installed the OpenShift Serverless Operator and Knative Serving.

You have the required permissions for your cluster:

- Cluster administrator permissions on OpenShift Container Platform

- Cluster administrator or dedicated administrator permissions on Red Hat OpenShift Service on AWS

- Cluster administrator or dedicated administrator permissions on OpenShift Dedicated

Procedure

To configure the maximum revision timeout, set the max-revision-timeout-seconds field in the KnativeServing custom resource (CR):

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
  namespace: knative-serving
spec:
  config:
    defaults:
      max-revision-timeout-seconds: "600"

Note

To set the maximum revision timeout to a value over 600 seconds (10 minutes), you must increase the default OpenShift Container Platform route timeout.

For instructions on how to configure timeouts for requests exceeding the default 600 seconds (10 minutes), see "Long-running requests".

4.10.3. Configuring revision response start timeout

You can set the revision response start timeout to define how long Serving waits for a revision to start sending network traffic. The revision response start timeout must not exceed the revision timeout. The default value is 300 seconds (5 minutes).

Prerequisites

- You have installed the OpenShift Serverless Operator and Knative Serving.

You have the required permissions for your cluster:

- Cluster administrator permissions on OpenShift Container Platform

- Cluster administrator or dedicated administrator permissions on Red Hat OpenShift Service on AWS

- Cluster administrator or dedicated administrator permissions on OpenShift Dedicated

Procedure

Choose the appropriate method to configure the revision response start timeout:

To configure the timeout globally, set the revision-response-start-timeout-seconds field in your KnativeServing custom resource (CR). If your required response start timeout exceeds the revision timeout, also adjust the revision-timeout-seconds field:

Example of revision response start timeout globally set to 300 seconds (5 minutes)

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
  namespace: knative-serving
spec:
  config:
    defaults:
      revision-timeout-seconds: "600"
      revision-response-start-timeout-seconds: "300"

To configure the timeout per revision, set the responseStartTimeoutSeconds field in your service definition. If your required response start timeout exceeds the revision timeout, also adjust the timeoutSeconds field:

Example of a service definition with revision response start timeout set to 300 seconds (5 minutes)

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  namespace: my-ns
spec:
  template:
    spec:
      timeoutSeconds: 600
      responseStartTimeoutSeconds: 300
      containers:
        # ...

Note

To set the revision response start timeout and the revision timeout to a value over 600 seconds (10 minutes), you must increase the default OpenShift Container Platform route timeout and the maximum revision timeout.

For instructions on how to configure timeouts for requests exceeding the default 600 seconds (10 minutes), see "Long-running requests".
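The timeout sections above impose an ordering on the settings. The response start timeout must not exceed the revision timeout, and the revision timeout is capped by the maximum revision timeout. The maximum revision timeout must not exceed the activator terminationGracePeriodSeconds. Values above the 600-second route default also require a longer route timeout. The following Python sketch checks those rules before you apply a change. The Timeouts field names and the function name are hypothetical, and the activator grace period is optional because you might not know it.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Timeouts:
    """Timeout settings in seconds; field names are hypothetical."""

    revision: int        # timeoutSeconds or revision-timeout-seconds
    response_start: int  # responseStartTimeoutSeconds or revision-response-start-timeout-seconds
    max_revision: int    # max-revision-timeout-seconds
    route: int = 600     # OpenShift route timeout (ROUTE_HAPROXY_TIMEOUT, default 600)
    activator_grace: Optional[int] = None  # activator terminationGracePeriodSeconds, if known


def timeout_problems(t: Timeouts) -> list[str]:
    """Return the ordering rules from this section that the settings break."""
    problems = []
    if t.response_start > t.revision:
        problems.append("response start timeout exceeds the revision timeout")
    if t.revision > t.max_revision:
        problems.append("revision timeout exceeds the maximum revision timeout")
    if t.max_revision > t.route:
        problems.append("maximum revision timeout exceeds the route timeout")
    if t.activator_grace is not None and t.max_revision > t.activator_grace:
        problems.append("maximum revision timeout exceeds the activator terminationGracePeriodSeconds")
    return problems


print(timeout_problems(Timeouts(revision=600, response_start=300, max_revision=600)))  # []
print(timeout_problems(Timeouts(revision=900, response_start=300, max_revision=900)))
```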

4.10.4. Configuring revision idle timeout

By setting the revision idle timeout, you can specify the maximum duration in seconds that a request is allowed to stay open without receiving data from the application. The default duration is 0 (infinite).

Prerequisites

- You have installed the OpenShift Serverless Operator and Knative Serving.

You have the required permissions for your cluster:

- Cluster administrator permissions on OpenShift Container Platform

- Cluster administrator or dedicated administrator permissions on Red Hat OpenShift Service on AWS

- Cluster administrator or dedicated administrator permissions on OpenShift Dedicated

Procedure

Choose the appropriate method to configure the revision idle timeout:

To configure the timeout globally, set the revision-idle-timeout-seconds field in your KnativeServing custom resource (CR):

Example of revision idle timeout globally set to 300 seconds (5 minutes)

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
  namespace: knative-serving
spec:
  config:
    defaults:
      revision-idle-timeout-seconds: "300"

To configure the timeout per revision, set the idleTimeoutSeconds field in your service definition:

Example of a service definition with revision idle timeout set to 300 seconds (5 minutes)

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  namespace: my-ns
spec:
  template:
    spec:
      idleTimeoutSeconds: 300
      containers:
        # ...

4.10.5. Long-running requests

To ensure that requests exceeding the default 600-second timeout set by Knative are not prematurely terminated, you need to adjust the timeouts in the following components:

- OpenShift Container Platform route

- OpenShift Serverless Serving

- Load balancer, depending on the cloud provider

You can configure the timeouts globally or per revision. You can configure the timeouts globally if you have requests across all Knative services that need extended durations, or per revision for specific workloads that require different timeout values, such as AI deployments.

4.10.5.1. Configuring the default route timeouts globally

By configuring the route timeouts globally, you can ensure consistent timeout settings across all services, simplifying management for workloads that have similar timeout needs and reducing the need for individual adjustments.

You can configure the route timeouts globally by updating the ROUTE_HAPROXY_TIMEOUT environment variable in your serverless-operator subscription and the max-revision-timeout-seconds field in your KnativeServing custom resource (CR). These changes apply across all Knative services, and you can then deploy services with specific timeouts up to the maximum value set.

The Serverless Operator manages the ROUTE_HAPROXY_TIMEOUT environment variable. The default value is 600.
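Because both settings are usually raised to the same value, it can help to apply them together so that they do not drift apart. The following is a minimal sketch, assuming cluster administrator access and the oc CLI; the function name set_global_request_timeout is hypothetical, and it simply combines the subscription patch from the procedure with an equivalent merge patch of the KnativeServing CR. Like the subscription command in the procedure, a JSON merge patch replaces the whole env list of the subscription config, so include any other variables you already set there.

```shell
#!/usr/bin/env bash
# Hypothetical helper: sets ROUTE_HAPROXY_TIMEOUT in the serverless-operator
# subscription and max-revision-timeout-seconds in the KnativeServing CR
# to the same number of seconds.
set_global_request_timeout() {
  local seconds="$1"
  case "$seconds" in
    ''|*[!0-9]*) echo "timeout must be a whole number of seconds" >&2; return 1 ;;
  esac
  # Redeploys the pods in the openshift-serverless namespace.
  # Note: the merge patch replaces the entire spec.config.env list.
  oc patch subscription.operators.coreos.com serverless-operator \
    -n openshift-serverless --type=merge \
    -p "{\"spec\":{\"config\":{\"env\":[{\"name\":\"ROUTE_HAPROXY_TIMEOUT\",\"value\":\"${seconds}\"}]}}}" || return 1
  # Raises the maximum timeout that individual revisions may request.
  oc patch knativeserving knative-serving -n knative-serving --type=merge \
    -p "{\"spec\":{\"config\":{\"defaults\":{\"max-revision-timeout-seconds\":\"${seconds}\"}}}}"
}

# Example usage: set_global_request_timeout 900
```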

Procedure

Set the value of ROUTE_HAPROXY_TIMEOUT in your subscription to the required timeout in seconds by running the following command. Note that this change redeploys the pods in the openshift-serverless namespace.

Setting the ROUTE_HAPROXY_TIMEOUT value to 900 seconds

$ oc patch subscription.operators.coreos.com serverless-operator -n openshift-serverless --type='merge' -p '{"spec": {"config": {"env": [{"name": "ROUTE_HAPROXY_TIMEOUT", "value": "900"}]}}}'

You can also set the value of ROUTE_HAPROXY_TIMEOUT in your subscription directly:

A subscription definition with ROUTE_HAPROXY_TIMEOUT set to 900 seconds

apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  #...
spec:
  channel: stable
  config:
    env:
      - name: ROUTE_HAPROXY_TIMEOUT
        value: '900'
#...

Note: If you created your routes manually and disabled auto-generation with the serving.knative.openshift.io/disableRoute annotation, you can configure the timeouts directly in the route definitions.

Set the maximum revision timeout in your KnativeServing CR:

KnativeServing CR with max-revision-timeout-seconds set to 900 seconds

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    defaults:
      max-revision-timeout-seconds: "900"
#...

The Serverless Operator automatically adjusts the terminationGracePeriodSeconds value of the activator to the maximum revision timeout value, so that in-flight requests are not terminated when the cluster terminates activator pods.

Optional: Verify the timeout value by running the following command:

$ oc get deployment activator -n knative-serving -o jsonpath="{.spec.template.spec.terminationGracePeriodSeconds}"

If necessary for your cloud provider, adjust the load balancer timeout by running the following command:

Load balancer timeout change for AWS Classic LB

$ oc -n openshift-ingress-operator patch ingresscontroller/default --type=merge \
  --patch='{"spec":{"endpointPublishingStrategy":{"type":"LoadBalancerService","loadBalancer":{"scope":"External","providerParameters":{"type":"AWS","aws":{"type":"Classic","classicLoadBalancer":{"connectionIdleTimeout":"20m"}}}}}}}'

Deploy a Knative service with the required timeouts set to values less than or equal to the max-revision-timeout-seconds value:

A Service definition with timeouts set to 800 seconds

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-service-name
spec:
  template:
    spec:
      timeoutSeconds: 800
      responseStartTimeoutSeconds: 800

Important: When you use Service Mesh, the activator pod might stop while a long-running request is in flight, which interrupts the request. To prevent this interruption, adjust the value of the terminationDrainDuration field in the ServiceMeshControlPlane CR:

apiVersion: maistra.io/v2
kind: ServiceMeshControlPlane
#...
spec:
  techPreview:
    meshConfig:
      defaultConfig:
        terminationDrainDuration: 1000s  # (1)
#...

(1) Ensure that the value exceeds the request duration to avoid the Istio proxy shutdown, which would interrupt the request.

Verification

If you are using Kourier, you can verify the current value of the timeout at the OpenShift Container Platform route by running the following command:

$ oc get route <route_name> -n knative-serving-ingress -o jsonpath="{.metadata.annotations.haproxy\.router\.openshift\.io/timeout}"

Example output

800s
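A long-running request passes through several layers, and any layer whose timeout is shorter than the request can cut it off. The following is a minimal offline sketch for checking the values you plan to use before you deploy. The function name check_timeout_chain and its argument order are hypothetical. The source states only that service timeouts must not exceed max-revision-timeout-seconds; the route and load balancer comparisons are an assumption, and treating the load balancer idle timeout as a limit on total request time is conservative (for example, 20m is 1200 seconds).

```shell
#!/usr/bin/env bash
# Hypothetical pre-deployment check for the timeout chain of a long-running request.
# Arguments (all in seconds):
#   1: timeoutSeconds of the Knative Service
#   2: max-revision-timeout-seconds from the KnativeServing CR
#   3: ROUTE_HAPROXY_TIMEOUT (OpenShift route)
#   4: load balancer idle timeout
check_timeout_chain() {
  [ "$#" -eq 4 ] || { echo "usage: check_timeout_chain REQUEST MAX_REVISION ROUTE LB" >&2; return 2; }
  local value
  for value in "$@"; do
    case "$value" in
      ''|*[!0-9]*) echo "all arguments must be whole numbers of seconds" >&2; return 2 ;;
    esac
  done
  local request="$1" max_revision="$2" route="$3" lb="$4"
  if [ "$request" -gt "$max_revision" ]; then
    echo "timeoutSeconds ($request) exceeds max-revision-timeout-seconds ($max_revision)"
    return 1
  fi
  if [ "$request" -gt "$route" ]; then
    echo "timeoutSeconds ($request) exceeds the route timeout ($route)"
    return 1
  fi
  if [ "$request" -gt "$lb" ]; then
    echo "timeoutSeconds ($request) exceeds the load balancer idle timeout ($lb)"
    return 1
  fi
  echo "ok"
}

# Example usage with the values from this section:
# check_timeout_chain 800 900 900 1200
```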

4.10.5.2. Configuring the default route timeouts per revision

By configuring the route timeouts per revision, you can fine-tune timeouts for workloads with unique requirements, such as AI or data processing applications, without impacting the global timeout settings for other services. You can configure the timeouts of a specific revision by updating your KnativeServing custom resource (CR) and the Service definition, and by using the serving.knative.openshift.io/setRouteTimeout annotation to adjust the OpenShift Container Platform route timeout.

Procedure

Set the max-revision-timeout-seconds field in your KnativeServing CR as required:

apiVersion: operator.knative.dev/v1beta1
kind: KnativeServing
metadata:
  name: knative-serving
spec:
  config:
    defaults:
      max-revision-timeout-seconds: "900"

Optional: Verify the termination grace period of the activator by running the following command:

$ oc get deployment activator -n knative-serving -o jsonpath="{.spec.template.spec.terminationGracePeriodSeconds}"

Example output

900

If necessary for your cloud provider, adjust the load balancer timeout by running the following command:

Load balancer timeout change for AWS Classic LB

$ oc -n openshift-ingress-operator patch ingresscontroller/default --type=merge \
  --patch='{"spec":{"endpointPublishingStrategy":{"type":"LoadBalancerService","loadBalancer":{"scope":"External","providerParameters":{"type":"AWS","aws":{"type":"Classic","classicLoadBalancer":{"connectionIdleTimeout":"20m"}}}}}}}'

Set the timeout for your specific service:

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: <your_service_name>
  annotations:
    serving.knative.openshift.io/setRouteTimeout: "800"  # (1)
spec:
  template:
    metadata:
      annotations:
      #...
    spec:
      timeoutSeconds: 800  # (2)
      responseStartTimeoutSeconds: 800  # (3)

(1) This annotation sets the timeout for the OpenShift Container Platform route. You can fine-tune this value for each service instead of setting a global maximum.

(2) This ensures that the request does not exceed the specified value.

(3) This ensures that the response start timeout does not trigger before the request reaches the maximum threshold. The default value is 300.

Important: When you use Service Mesh, the activator pod might stop while a long-running request is in flight, which interrupts the request. To prevent this interruption, adjust the value of the terminationDrainDuration field in the ServiceMeshControlPlane CR:

apiVersion: maistra.io/v2
kind: ServiceMeshControlPlane
#...
spec:
  techPreview:
    meshConfig:
      defaultConfig:
        terminationDrainDuration: 1000s  # (1)
#...

(1) Ensure that the value exceeds the request duration to avoid the Istio proxy shutdown, which would interrupt the request.

Verification

If you are using Kourier, you can verify the current value of the timeout at the OpenShift Container Platform route by running the following command:

$ oc get route <route_name> -n knative-serving-ingress -o jsonpath="{.metadata.annotations.haproxy\.router\.openshift\.io/timeout}"

Example output

800s
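For an existing service, the route annotation and the two revision timeouts from this procedure can also be set from the command line. The following is a minimal sketch, assuming the service already exists in the current project; the function name set_service_timeouts is hypothetical. It uses oc annotate for the annotation on the Service metadata and a JSON merge patch for the revision template fields, which creates a new revision.

```shell
#!/usr/bin/env bash
# Hypothetical helper: sets the OpenShift route timeout annotation and the
# revision timeouts of an existing Knative Service to the same value.
set_service_timeouts() {
  local service="$1" seconds="$2"
  case "$seconds" in
    ''|*[!0-9]*) echo "timeout must be a whole number of seconds" >&2; return 1 ;;
  esac
  # Annotation on the Service metadata: adjusts the OpenShift route timeout.
  oc annotate ksvc "$service" \
    "serving.knative.openshift.io/setRouteTimeout=${seconds}" --overwrite || return 1
  # Fields in the revision template: changing them creates a new revision.
  oc patch ksvc "$service" --type=merge \
    -p "{\"spec\":{\"template\":{\"spec\":{\"timeoutSeconds\":${seconds},\"responseStartTimeoutSeconds\":${seconds}}}}}"
}

# Example usage: set_service_timeouts <your_service_name> 800
```

Keep the value at or below the max-revision-timeout-seconds value that you set in the KnativeServing CR.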

Chapter 5. Debugging Serverless applications

You can use a variety of methods to troubleshoot a Serverless application.

5.1. Checking terminal output

You can check the output of your deploy command to see whether the deployment succeeded. If the deployment process terminates, the output displays an error message that explains the failure. This kind of failure is most likely due to either a misconfigured manifest or an invalid command.

Procedure

Open the command output on the client where you deploy and manage your application. The following example is an error that you might see after a failed oc apply command:

Example output

Error from server (InternalError): error when applying patch: {"metadata":{"annotations":{"kubectl.kubernetes.io/last-applied-configuration":"{\"apiVersion\":\"serving.knative.dev/v1\",\"kind\":\"Route\",\"metadata\":{\"annotations\":{},\"name\":\"route-example\",\"namespace\":\"default\"},\"spec\":{\"traffic\":[{\"configurationName\":\"configuration-example\",\"percent\":50}]}}\n"}},"spec":{"traffic":[{"configurationName":"configuration-example","percent":50}]}} to: &{0xc421d98240 0xc421e77490 default route-example STDIN 0xc421db0488 264682 false} for: "STDIN": Internal error occurred: admission webhook "webhook.knative.dev" denied the request: mutation failed: The route must have traffic percent sum equal to 100.
ERROR: Non-zero return code '1' from command: Process exited with status 1

This output indicates that the percent values of the traffic targets in your route must add up to 100.
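One way to resolve this particular error is to reapply the route with percentages that add up to 100. The following sketch reuses the route-example, configuration-example, and default names from the example output and sends all traffic to that single configuration; the function name apply_fixed_route is hypothetical. If you split traffic between several targets, adjust the entries so that their percent values still total 100.

```shell
#!/usr/bin/env bash
# Hypothetical fix: reapplies the Route from the example output with a
# traffic split that sums to 100. Replace the names with your own.
apply_fixed_route() {
  oc apply -f - <<'EOF'
apiVersion: serving.knative.dev/v1
kind: Route
metadata:
  name: route-example
  namespace: default
spec:
  traffic:
    - configurationName: configuration-example
      percent: 100
EOF
}

# Example usage: apply_fixed_route
```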

5.2. Checking pod status

You might need to check the status of your Pod object to identify the issue with your Serverless application.

Procedure

List all pods for your deployment by running the following command:

$ oc get pods

Example output

NAME                                                      READY   STATUS             RESTARTS   AGE
configuration-example-00001-deployment-659747ff99-9bvr4   2/2     Running            0          3h
configuration-example-00002-deployment-5f475b7849-gxcht   1/2     CrashLoopBackOff   2          36s

In the output, you can see all pods with selected data about their status.

View the detailed information on the status of a pod by running the following command:


$ oc get pod <pod_name> --output yaml

In the output, the conditions and containerStatuses fields might be particularly useful for debugging.
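When a deployment has many revisions, it can help to filter the oc get pods output down to pods that are not fully ready, such as the CrashLoopBackOff pod in the example output. The following is a minimal sketch using awk, assuming the default column layout shown in that example (NAME, READY, STATUS, RESTARTS, AGE); the function name not_ready_pods is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical filter: reads `oc get pods` output on standard input and prints
# NAME, READY, and STATUS for pods whose READY column shows fewer ready
# containers than total containers (for example, 1/2).
not_ready_pods() {
  awk 'NR > 1 { split($2, ready, "/"); if (ready[1] != ready[2]) print $1, $2, $3 }'
}

# Example usage: oc get pods | not_ready_pods
```

For the example output above, the filter prints only the configuration-example-00002 pod, whose name you can then pass to oc get pod <pod_name> --output yaml.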

5.3. Checking revision status

You might need to check the status of your revision to identify the issue with your Serverless application.

Procedure

If you configure your route with a Configuration object, get the name of the Revision object created for your deployment by running the following command:

$ oc get configuration <configuration_name> --output jsonpath="{.status.latestCreatedRevisionName}"

You can find the configuration name in the Route.yaml file, which specifies routing settings by defining a Knative Route resource.

If you configure your route with a revision directly, look up the revision name in the Route.yaml file.

Query for the status of the revision by running the following command:

$ oc get revision <revision_name> --output yaml

A ready revision has the reason: ServiceReady, status: "True", and type: Ready conditions in its status. If these conditions are present, check the pod status or Istio routing instead. Otherwise, the resource status contains the error message.
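Instead of reading the full YAML, you can ask only for the status of the Ready condition by using a JSONPath filter expression. The following is a minimal sketch that combines both lookups from this procedure, assuming the route uses a Configuration object; the function name revision_ready_status is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the status ("True", "False", or "Unknown") of the
# Ready condition of the latest revision created for a Configuration.
revision_ready_status() {
  local configuration="$1" revision
  revision=$(oc get configuration "$configuration" \
    --output jsonpath='{.status.latestCreatedRevisionName}') || return 1
  if [ -z "$revision" ]; then
    echo "no revision found for configuration $configuration" >&2
    return 1
  fi
  oc get revision "$revision" \
    --output jsonpath='{.status.conditions[?(@.type=="Ready")].status}'
}

# Example usage: revision_ready_status <configuration_name>
```

If the result is not True, inspect the full status with oc get revision <revision_name> --output yaml to read the error message.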


5.4. Checking Ingress status

You might need to check the status of your Ingress to identify the issue with your Serverless application.

Procedure

Check the IP address of your Ingress by running the following command:

$ oc get svc -n istio-system istio-ingressgateway

The istio-ingressgateway service is the LoadBalancer service used by Knative.

If there is no external IP address, run the following command:

$ oc describe svc istio-ingressgateway -n istio-system

This command prints the reason why IP addresses were not provisioned. The most likely cause is a quota issue.
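To script this check, you can read the address directly from the service status. The following is a minimal sketch; depending on the platform, the load balancer might report a hostname (for example, on AWS) rather than an IP address, so the sketch prints whichever field is set and falls back to oc describe when neither is. The function name ingress_gateway_address is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the external IP address or hostname of the
# istio-ingressgateway LoadBalancer service. If none is provisioned yet, it
# describes the service so that you can read the reason.
ingress_gateway_address() {
  local address
  address=$(oc get svc istio-ingressgateway -n istio-system \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}{.status.loadBalancer.ingress[0].hostname}') || return 1
  if [ -n "$address" ]; then
    echo "$address"
  else
    echo "no external address provisioned" >&2
    oc describe svc istio-ingressgateway -n istio-system
    return 1
  fi
}

# Example usage: ingress_gateway_address
```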

5.5. Checking route status

In some cases, the Route object has issues. You can check its status by using the OpenShift CLI (oc).

Procedure

View the status of the Route object with which you deployed your application by running the following command:

$ oc get route <route_name> --output yaml

Substitute <route_name> with the name of your Route object.

If the route fails, the conditions object in the status object states the reason for the failure.
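To print only the failure reason, you can extract the reason and message of the Ready condition with a JSONPath range expression. The following is a minimal sketch; it uses the fully qualified resource name routes.serving.knative.dev on the assumption that, on OpenShift, the short name route could otherwise resolve to an OpenShift Route object instead of the Knative Route. The function name knative_route_ready_reason is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints "<reason>: <message>" for the Ready condition of
# a Knative Route, using the fully qualified resource name to avoid ambiguity
# with OpenShift Route objects.
knative_route_ready_reason() {
  local route="$1"
  oc get routes.serving.knative.dev "$route" \
    --output jsonpath='{range .status.conditions[?(@.type=="Ready")]}{.reason}{": "}{.message}{"\n"}{end}'
}

# Example usage: knative_route_ready_reason <route_name>
```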

5.6. Checking Ingress and Istio routing

Sometimes, when you use Istio as an Ingress layer, the Ingress and Istio routing might have issues. You can view their details by using the OpenShift CLI (oc).

Procedure

List all Ingress resources and their corresponding labels by running the following command:

$ oc get ingresses.networking.internal.knative.dev -o=custom-columns='NAME:.metadata.name,LABELS:.metadata.labels'

Example output

NAME            LABELS
helloworld-go   map[serving.knative.dev/route:helloworld-go serving.knative.dev/routeNamespace:default serving.knative.dev/service:helloworld-go]

In this output, the labels