Troubleshooting the Vector collector in RHOCP 4

Table of Contents

- Global overview

1.1. Get the configurations, logs, alerts, metrics

1.2. Verify ClusterLogging Operator version installed and support

1.3. Verify the clusterLogging/ClusterLogForwarder CR status section

1.4. Verify the clusterLogging is in Managed status

1.5. Verify no errors in the ClusterLogging Operator pod

1.6. Verify the Vector running configuration

1.7. Verify the collector pods have memory and cpu limits set

1.8. Verify the collector pods memory and cpu usage

1.9. Verify the collectors pods are not restarting

- Enable Vector DEBUG level

- Connectivity/Auth errors with the log receiver

3.1. Prerequisites

3.2. Tools

3.3. Scenario 1. Network connectivity

3.4. Scenario 2. SSL issues

3.5. Scenario 3. UDP

3.6. Scenario 4. Auth errors

3.7. Scenario 5. Test the service

- Too many logs

- Not able to see logs

5.1. Scenario 1. No logs from any collector from the cluster visible in one of the outputs

5.2. Scenario 2. No logs from one or some collectors from the cluster

5.3. Scenario 3. Incomplete logs forwarded to syslog

5.4. Additional resources

- Metrics

6.1. Dashboards

6.2. Vector's per-component memory usage

6.3. Other metrics

- Transition from Logging v5 to Logging v6

- detectMultilineException / detectMultilineErrors

- Additional resources

1. Global overview

Some of the data obtained with the commands shown in this document is also present in the logging data must-gather. Any troubleshooting that requires reviewing the collectors should go through all the steps in this Global overview section.

1.1. Get the configurations, logs, alerts, metrics

Before starting, the basic data required for troubleshooting is:

- The logging data, collected following the steps in the documentation

- The alerts firing at the moment of the issue, collected following the article "How to get fired alerts on OpenShift using cli or script?"

- The dashboard from OCP Console > Observe > Dashboards > Dashboard: Logging / Collection, with a Time Range that includes the time of the issue

In some circumstances, it could be necessary to request/provide:

- Partial metrics: "How to collect cluster Prometheus metrics in Red Hat OpenShift Container Platform"

- A sosreport. To get it, review the Red Hat documentation "Generating a sosreport archive for an OpenShift Container Platform cluster node" and/or the related articles.

- A tcpdump: see the article "Running tcpdump inside an OpenShift pod to capture network traffic".

1.2. Verify ClusterLogging Operator version installed and support

Verify cluster version:

$ oc get clusterversion

Verify ClusterLogging Operator version:

$ oc get csv -n openshift-logging |grep cluster-logging

Check in the article "OpenShift Operator Life Cycles" that the installed ClusterLogging Operator version is still supported, and that it is supported for the OpenShift version in use.

NOTES:

- Starting in OpenShift 4.16, the Elasticsearch Operator is no longer supported for Red Hat OpenShift Logging (RHOL). More details in the article "Missing the Red Hat Elasticsearch operator in RHOCP".

- The last released version of the Elasticsearch Operator is 5.8.z. More details in the article "Does Red Hat support version drift between OpenShift Elasticsearch Operator and OpenShift Logging Operator starting from RHOL v5.9 in RHOCP 4".

1.3. Verify the clusterLogging/ClusterLogForwarder CR status section

Review the status section of the clusterLogging and clusterLogForwarder CRs.

In Logging 5:

Get the clusterLogging CR and the clusterLogForwarder CR (the clusterLogForwarder CR may not exist):

$ oc get clusterlogging -A

$ oc get clusterLogForwarder -A

Review the status section of the clusterLogging CR and clusterLogForwarder CR returned by the previous commands, and verify that there are no errors:

$ oc get clusterlogging <CR name> -n <namespace> -o yaml

$ oc get clusterlogforwarder <CR name> -n <namespace> -o yaml

Note: For RHOL v6, review the following articles, which explain some bugs in the clusterLogForwarder CR status:

- Collector pods are going in CrashLoopBackOff state in RHOL 6

- Collector configmap not generated when same input used twice for the same output in RHOL 6

In Logging 6:

Get the clusterLogForwarder CR:

$ oc get obsclf -A

Review the status section of the clusterLogForwarder CR returned by the previous command, and verify that there are no errors:

$ oc get obsclf <CR name> -n <namespace> -o yaml

1.4. Verify the clusterLogging is in Managed status

Impact:

When the CLO is in Unmanaged status, the “Support policy for unmanaged Operators” applies, unless a Support Exception is in place or Red Hat recommended it as a workaround for a bug until the fix is released.

Diagnosis:

// In Logging 5

$ oc get clusterLogging <CR name> -o jsonpath='{.spec.managementState}' -n <namespace>

// In Logging 6

$ oc get obsclf <CR name> -o jsonpath='{.spec.managementState}' -n <namespace>
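Both checks above can be wrapped in a small helper that flags anything other than Managed. This is a minimal sketch: the function name `check_management_state` is hypothetical, and an empty value is only reported, not interpreted, because this document does not state what the operator assumes when the field is unset.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report the spec.managementState of a logging CR.
# Usage: check_management_state <kind> <CR name> <namespace>
#   Logging 5: check_management_state clusterlogging <CR name> <namespace>
#   Logging 6: check_management_state obsclf <CR name> <namespace>
check_management_state() {
  local kind=$1 name=$2 ns=$3 state
  state=$(oc get "$kind" "$name" -n "$ns" -o jsonpath='{.spec.managementState}')
  case $state in
    Managed) echo "OK: $kind/$name is Managed" ;;
    "")      echo "INFO: $kind/$name has no spec.managementState set" ;;
    *)       echo "WARNING: $kind/$name is $state (review the support policy)" ;;
  esac
}
```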

1.5. Verify no errors in the ClusterLogging Operator pod

Check the timestamps of the errors: they could be from the past and not from the moment of the issue.

$ unset clo

$ clo=$(oc get pod -l name=cluster-logging-operator -o jsonpath='{.items[0].metadata.name}' -n openshift-logging)

$ oc logs $clo -n openshift-logging |grep -i error

1.6. Verify the Vector running configuration

Root cause:

In some situations, the Vector running configuration does not match the expected configuration. Some causes are:

- The clusterLoggingOperator is not able to reconcile the configuration. Errors should be visible in the logs of the clusterLoggingOperator pod.

- The clusterLoggingOperator is not translating an option set in the clusterLogForwarder/obsclf CR into the Vector running configuration.

Diagnosis:

The Vector running configuration is stored in a secret in Logging v5, and in a configmap in Logging v6, in the same namespace where the Vector pods are running.

This secret or configmap follows the pattern <CR name>-config. If the CR is called collector or instance, the secret/configmap containing the Vector running configuration is collector-config.

See the section "Verify the clusterLogging/ClusterLogForwarder CR status section" to get the CR name:

// In Logging v5

$ oc get secret <CR name>-config -n <namespace> -o jsonpath='{.data.vector\.toml}' |base64 -d

// In Logging v6

$ oc get configmap <CR name>-config -n <namespace> -o jsonpath='{.data.vector\.toml}'

or

// In Logging v5

$ oc extract secret/<CR name>-config --keys="vector.toml" -n <namespace>

// In Logging v6

$ oc extract configmap/<CR name>-config --keys="vector.toml" -n <namespace>
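With the configuration extracted, a quick way to confirm that every output in the CR was translated into a Vector sink is to list the sinks with their type and destination. The sketch below assumes the sink tables follow the `[sinks.<output_normalized_name>]` layout shown in section 3.1 and that each value sits on a single `key = "value"` line. `list_vector_sinks` is a hypothetical helper name, not part of any tool.

```shell
#!/usr/bin/env bash
# Hypothetical helper: list Vector sinks as "<name>\t<type>\t<endpoint or address>".
# Reads a vector.toml on stdin. Sub-tables such as [sinks.<name>.tls] are skipped.
list_vector_sinks() {
  awk '
    /^\[sinks\.[A-Za-z0-9_]+\]$/ { name = substr($0, 8, length($0) - 8); seen[name] = 1; next }
    /^\[/                        { name = ""; next }
    name != "" && $1 == "type"   { v = $3; gsub(/"/, "", v); type[name] = v }
    name != "" && ($1 == "endpoint" || $1 == "address") { v = $3; gsub(/"/, "", v); dest[name] = v }
    END {
      for (n in seen) printf "%s\t%s\t%s\n", n, type[n], ((n in dest) ? dest[n] : "-")
    }
  ' | LC_ALL=C sort
}
```

For example, `oc extract configmap/<CR name>-config --keys=vector.toml --to=- -n <namespace> | list_vector_sinks` (use `secret/` instead of `configmap/` in Logging v5). The `# vector.toml` header line that `--to=-` prints is ignored.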

1.7. Verify the collector pods have memory and cpu limits set

Starting in Logging 6.0, default memory and cpu limits are set for the collector when the OpenShift administrator does not set them. This was implemented in the bug LOG-4745.

Impact:

If no limits are set and a Logging version earlier than 6.0 is in use, Vector could, under some circumstances, use all the memory available on the node. This would impact all the workloads running on the same node.

Root Cause:

High cpu or memory usage is usually caused by an issue delivering the logs to the destinations, which causes back pressure.

For more information read the article: "Log collector vector has high memory consumption in RHOCP 4"

Diagnosis:

// Get the collector ds

$ oc get ds -l app.kubernetes.io/component=collector -n <namespace>

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

collector 8 8 8 8 8 kubernetes.io/os=linux 50d

// Check whether limits are set on the collector daemonset returned by the previous command

$ oc get ds <name> -o jsonpath='{.spec.template.spec.containers[0].resources}' -n <namespace> |jq
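Instead of reading the whole resources object, you can query each limit directly with jsonpath. An empty result means the limit is not set. This is a minimal sketch that assumes the collector is the first container of the daemonset, as in the command above. `collector_limits_report` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print limits.cpu and limits.memory of the collector daemonset,
# or "NOT SET" when the limit is missing.
# Usage: collector_limits_report <daemonset name> <namespace>
collector_limits_report() {
  local ds=$1 ns=$2 res val
  for res in cpu memory; do
    val=$(oc get ds "$ds" -n "$ns" \
      -o jsonpath="{.spec.template.spec.containers[0].resources.limits.${res}}")
    printf '%s\t%s\n' "limits.${res}" "${val:-NOT SET}"
  done
}
```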

Actions:

Set memory and cpu limits for the collector. See the section "Verify the collector pods memory and cpu usage" in this document.

1.8. Verify the collector pods memory and cpu usage

Impact:

- If the memory or cpu usage is close to the limits, it could cause:

- If the Memory Limit is reached: pods restarted with OOMKill

- If the CPU Limit is reached: delays in reading/parsing/delivering the logs, and missed logs

Diagnosis:

Go to the OCP Console > Observe > Metrics and run the Prometheus queries below.

Note: These PromQL queries assume that the collector pods are named collector* and run in the openshift-logging namespace. Modify the values to match the configuration of the cluster being evaluated.

// Collectors CPU usage:

pod:container_cpu_usage:sum{pod=~'collector.*',namespace='openshift-logging'}

// Collectors Memory usage

sum(container_memory_working_set_bytes{pod=~'collector.*',namespace='openshift-logging'}) BY (pod, namespace)

Take into consideration that when selecting a longer Time Range for metrics, the peaks and valleys will be less visible on the graph.

If the problem does not happen constantly but at specific times, review the metrics from those specific times.

To find the baseline for setting requests.cpu and requests.memory, run the queries with a Time Range of 1 week.
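You can also run the same queries from a terminal to get a one-week peak per pod, which helps when choosing requests and limits. This sketch assumes the Thanos Querier route in `openshift-monitoring` and a user token that is allowed to query cluster metrics. `max_over_time` and the subquery syntax are standard PromQL. The helper names and the 1w/5m windows are illustrative choices. `-k` skips certificate verification and is only meant for a quick test.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: build a 1-week peak query for the collector pods and run it
# against the in-cluster Thanos Querier.

# Usage: collector_peak_query <cpu|memory> [pod regex] [namespace]
collector_peak_query() {
  local metric="$1" pod_re="${2:-collector.*}" ns="${3:-openshift-logging}"
  case $metric in
    cpu)
      printf "max_over_time(pod:container_cpu_usage:sum{pod=~'%s',namespace='%s'}[1w])" "$pod_re" "$ns" ;;
    memory)
      # Subquery over the memory expression above, evaluated every 5m over 1 week.
      printf "max_over_time((sum(container_memory_working_set_bytes{pod=~'%s',namespace='%s'}) BY (pod, namespace))[1w:5m])" "$pod_re" "$ns" ;;
    *)
      echo "unknown metric: $metric" >&2; return 1 ;;
  esac
}

# Usage: prom_query '<PromQL expression>'
prom_query() {
  local host token
  host=$(oc get route thanos-querier -n openshift-monitoring -o jsonpath='{.spec.host}')
  token=$(oc whoami -t)
  curl -sS -k -G -H "Authorization: Bearer ${token}" \
    --data-urlencode "query=$1" "https://${host}/api/v1/query"
}
```

For example: `prom_query "$(collector_peak_query memory)"`.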

Actions:

If requests.cpu and/or requests.memory are too low, the resources the collector actually needs may not be guaranteed. Increase requests.cpu and/or requests.memory at least to the baseline usage observed in the metrics.

If the pod reaches the limits.cpu and/or the limits.memory, increase the limit reached. The limits.cpu and the limits.memory values should be adjusted to allow the regular operation of the collector.
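The helper below sketches how you could apply the adjusted values. It only builds a merge patch. It assumes the collector resources live under `spec.collection.resources` in the Logging 5 ClusterLogging CR and under `spec.collector.resources` in the Logging 6 ClusterLogForwarder (see the article "Configure Tolerations, NodeSelector & Resources for collector pods in OpenShift Logging 6"). Verify the field path against the CRD of the installed version before patching, and take the values from the metrics, not from the example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a JSON merge patch that sets collector requests and limits.
# Usage: collector_resources_patch <v5|v6> <req cpu> <req mem> <limit cpu> <limit mem>
# ASSUMPTION on field paths: v5 -> spec.collection, v6 -> spec.collector.
collector_resources_patch() {
  local field
  case $1 in
    v5) field=collection ;;
    v6) field=collector ;;
    *)  echo "usage: collector_resources_patch <v5|v6> ..." >&2; return 1 ;;
  esac
  printf '{"spec":{"%s":{"resources":{"requests":{"cpu":"%s","memory":"%s"},"limits":{"cpu":"%s","memory":"%s"}}}}}\n' \
    "$field" "$2" "$3" "$4" "$5"
}

# Example (Logging 6), values are placeholders:
#   oc patch obsclf <CR name> -n <namespace> --type=merge \
#     -p "$(collector_resources_patch v6 500m 1Gi 2 2Gi)"
```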

1.9. Verify the collectors pods are not restarting

Diagnosis:

// In Logging 5

$ oc get pods -l component=collector -n <namespace>

// In Logging 6

$ oc get pods -l app.kubernetes.io/component=collector -n <namespace>

If the collectors are restarting, they could be in one of these states:

- CrashLoopBackOff

- Running

In CrashLoopBackOff status:

Root Cause:

This is usually caused by a bad configuration or by an image that cannot be pulled.

Diagnosis:

Review the events:

$ oc get events -n <namespace>

$ oc describe pod <pod name> -n <namespace>

Review the pod status:

$ oc get pod <pod name> -n <namespace>

Review the logs of the previous container instance:

$ oc logs <pod name> -p -n <namespace>

In Running status:

Verify whether the pods are restarting with OOMKill

Root cause:

If the collector pods are restarting with OOMKill, the most common causes are:

- The normal operation needs that amount of memory. Review the memory metrics for the collector.

- Slowness delivering the logs, causing back pressure and increased memory usage in the collector.

- An interruption in delivering the logs, causing back pressure that temporarily increases the memory and cpu usage of the collector.

Diagnosis:

Review if the pods are restarting with OOMKill:

// In Logging 5

$ oc get pods -l component=collector -n <namespace> -o yaml |grep -i oomkill

// In Logging 6

$ oc get pods -l app.kubernetes.io/component=collector -n <namespace> -o yaml |grep -i oomkill
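To see which collector pods restarted and why, you can print the restart count and the last termination reason for each pod with jsonpath. This is a minimal sketch that assumes a single container per collector pod (`containerStatuses[0]`). `collector_restarts` is a hypothetical name. A reason of `OOMKilled` points to the memory causes listed above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: list collector pods that restarted, with the restart count and
# the reason of the last termination ("-" when none was recorded).
# Usage: collector_restarts <namespace> <label selector>
#   Logging 5 selector: component=collector
#   Logging 6 selector: app.kubernetes.io/component=collector
collector_restarts() {
  local ns=$1 selector=$2
  oc get pods -n "$ns" -l "$selector" -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.containerStatuses[0].restartCount}{"\t"}{.status.containerStatuses[0].lastState.terminated.reason}{"\n"}{end}' |
    awk -F'\t' '$2 > 0 { print $1 "\t" $2 "\t" ($3 == "" ? "-" : $3) }'
}
```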

Go to the OpenShift Console > Observe > Dashboards > Dashboard: Logging / Collection and review the "Total errors last 60m" panel for errors that could explain the back pressure.

Review if errors are present in the logs from collectors:

// In Logging 5

$ for pod in $(oc get pods -l component=collector -o name -n <namespace> ); do oc logs $pod -n <namespace> |grep -i error; done

// In Logging 6

$ for pod in $(oc get pods -l app.kubernetes.io/component=collector -o name -n <namespace> ); do oc logs $pod -n <namespace> |grep -i error; done
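When many collectors are running, it helps to prefix each error with the pod name and to limit the output to the time window of the issue. The sketch below uses the standard `--since` option of `oc logs`. `collector_errors` and the default window of 1h are illustrative.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print error lines from every collector pod, prefixed with the pod name.
# Usage: collector_errors <namespace> <label selector> [since, e.g. 30m, 2h]
collector_errors() {
  local ns=$1 selector=$2 since=${3:-1h} pod
  for pod in $(oc get pods -n "$ns" -l "$selector" -o name); do
    oc logs "$pod" -n "$ns" --since="$since" | grep -i error | sed "s|^|${pod#pod/}: |"
  done
}
```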

Articles of interest:

Other causes

Diagnosis:

Review the events:

$ oc get events -n <namespace>

$ oc describe pod <pod name> -n <namespace>

Review the pod status:

$ oc get pod <pod name> -n <namespace>

Review the logs of the previous container instance:

$ oc logs <pod name> -p -n <namespace>

If nothing relevant is visible, a sosreport from the node where the collector pod is restarting could be required. See the section "Get the configurations, logs, alerts, metrics" for how to get it.

2. Enable Vector DEBUG level

In some circumstances, when nothing relevant is visible in the metrics and logs, it could be necessary to change the Vector log level to debug or a more verbose level. The log level can be set by adding an annotation to the clusterLogForwarder CR. The steps are detailed in the article "How to change the log level in the Vector in RHOCP 4".
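For Logging 6, a sketch of setting the level with `oc annotate` follows. The annotation key `observability.openshift.io/log-level` and the accepted values are assumptions: verify them against the article above and the documentation of the installed version. Logging 5 may use a different mechanism. Revert to the default level afterwards, because debug output is very verbose.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set the Vector log level on a Logging 6 ClusterLogForwarder.
# ASSUMPTION: the annotation key below; verify it for the installed Logging version.
# Usage: set_collector_log_level <CR name> <namespace> <trace|debug|info|warn|error|off>
set_collector_log_level() {
  local name=$1 ns=$2 level=$3
  case $level in
    trace|debug|info|warn|error|off) ;;
    *) echo "invalid level: $level" >&2; return 1 ;;
  esac
  oc annotate obsclf "$name" -n "$ns" --overwrite \
    "observability.openshift.io/log-level=${level}"
}
```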

3. Connectivity/Auth errors with the log receiver

3.1. Prerequisites

The configuration for connection, certificates, authentication and authorization is all set in the clusterLogForwarder CR.

Follow the section "Verify the Vector running configuration" to get the configuration that Vector is actually using, and use the same definitions when testing with curl.

The TLS and auth definitions are in a section like:

// For tls

[sinks.<output_normalized_name>.tls]

...

// For auth

[sinks.<output_normalized_name>.auth]

...

For instance, for the Red Hat Managed Loki, and a collector named “collector”:

// In Logging v5

$ oc get secret collector-config -o jsonpath='{.data.vector\.toml}' |base64 -d

--- OUTPUT OMITTED ---

[sinks.output_default_loki_infra.tls]

min_tls_version = "VersionTLS12"

ciphersuites = "TLS_AES_128_GCM_SHA256,TLS_AES_256_GCM_SHA384,TLS_CHACHA20_POLY1305_SHA256,ECDHE-ECDSA-AES128-GCM-SHA256,ECDHE-RSA-AES128-GCM-SHA256,ECDHE-ECDSA-AES256-GCM-SHA384,ECDHE-RSA-AES256-GCM-SHA384,ECDHE-ECDSA-CHACHA20-POLY1305,ECDHE-RSA-CHACHA20-POLY1305,DHE-RSA-AES128-GCM-SHA256,DHE-RSA-AES256-GCM-SHA384"

ca_file = "/var/run/secrets/kubernetes.io/serviceaccount/service-ca.crt"

# Bearer Auth Config

[sinks.output_default_loki_infra.auth]

strategy = "bearer"

token = "xxxxx"

// In Logging v6

$ oc get configmap collector-config -o jsonpath='{.data.vector\.toml}'

3.2. Tools

The common tools are:

- tcpdump

- curl

tcpdump

Follow the article "Running tcpdump inside an OpenShift pod to capture network traffic", setting the filter according to the destination:

- If the traffic is not expected to go through the proxy:

TCPDUMP_EXTRA_PARAMS="host <endpoint> and port <port>"

- If the traffic is expected to go through the proxy:

TCPDUMP_EXTRA_PARAMS="host <proxy> and port <proxy port>"

curl

curl can be used to:

- Test the network connectivity. This test only validates that something is listening on the configured endpoint and port. It does not validate that the connection reached the right service, or that the service is working properly.

- Test whether the service receiving the logs is working.

Note: the curl command can only be used when the network protocol is TCP, not when it’s UDP.

Useful options to use with curl are:

For authentication/authorization:

-u "$user:$passwd"

-H "Authorization: Bearer $token"

For SSL/TLS:

--cacert </path/to/ca>

--cert </path/to/cert>

--key </path/to/key>

--tlsv1.2

--tlsv1.1

--tls-max <version>

--ciphers <list of ciphers>

For when cluster-wide proxy exists:

--proxy <proxy>:<proxy port>

--noproxy "<endpoint IP Address or name>"

3.3. Scenario 1. Network connectivity

Root causes:

Several root causes could be:

- A proxy not allowing the connection or forwarding to the wrong destination/port

- A bad configuration of the no_proxy

- A firewall not allowing the connection

- Incorrect destination endpoint

- Incorrect configuration in the clusterLogForwarder: protocol, certificates, etc

- No cipher in common between the collector and the destination

- Others

Diagnosis:

Verify whether the cluster has a cluster-wide proxy configured, and confirm whether the network packets should go through the proxy or not. If they should not go through the proxy, check that the destination is included in the no_proxy definition:

$ oc get proxy/cluster

1. If the connectivity between the collectors and the endpoint must go through the cluster-wide proxy

Test the connectivity with curl, passing the same options configured for the connection in the clusterLogForwarder:

$ curl -vvv <endpoint>:<port>...

2. If the connectivity between the collectors and the endpoint shouldn’t go through the proxy

Test the connectivity with curl, passing the same options configured for the connection in the clusterLogForwarder. curl does not honor CIDR addresses in no_proxy, as detailed in the article "cURL to NO_PROXY CIDR addresses are not working as expected", so bypass the proxy explicitly:

$ curl -vvv <endpoint>:<port> --noproxy "<IP ADDRESS or hostname>"...

If the test with curl works, confirm that the packets do not go through the proxy by running tcpdump in the collector pod. Follow the article "Running tcpdump inside an OpenShift pod to capture network traffic", with TCPDUMP_EXTRA_PARAMS set as follows:

TCPDUMP_EXTRA_PARAMS="(host <proxy> and port <proxy port>) or (host <endpoint> and port <endpoint port>)"
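Because curl ignores CIDR entries in no_proxy, it can be useful to check by hand whether the endpoint is covered by the cluster-wide proxy `noProxy` list. The sketch below approximates common NO_PROXY matching rules: exact host, `.domain` (subdomains only), bare domain (itself plus subdomains), `*`, and IPv4 CIDR. It does not handle IPv6 or ports, and clients differ in edge cases, so treat the result as a hint, not as what Vector will do. `in_no_proxy` is a hypothetical name. You can read the list with `oc get proxy/cluster -o jsonpath='{.status.noProxy}'`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: return 0 if <host> matches an entry of a comma-separated NO_PROXY list.
# Usage: in_no_proxy <host or IPv4> "<no_proxy list>"

ip_to_int() {               # 10.128.3.4 -> 32-bit integer (10# avoids octal parsing)
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo $(( (10#${o[0]} << 24) | (10#${o[1]} << 16) | (10#${o[2]} << 8) | 10#${o[3]} ))
}

is_ipv4() {
  local re='^[0-9]{1,3}(\.[0-9]{1,3}){3}$'
  [[ $1 =~ $re ]]
}

in_no_proxy() {
  local host=$1 entry net bits mask
  local -a entries
  IFS=, read -r -a entries <<< "$2"
  for entry in "${entries[@]}"; do
    entry=${entry// /}
    [[ -z $entry ]] && continue
    [[ $entry == "*" ]] && return 0
    if [[ $entry == */* ]]; then               # IPv4 CIDR, e.g. 10.128.0.0/14
      net=${entry%/*} bits=${entry#*/}
      if is_ipv4 "$host" && is_ipv4 "$net"; then
        mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
        (( ($(ip_to_int "$host") & mask) == ($(ip_to_int "$net") & mask) )) && return 0
      fi
    elif [[ $entry == .* ]]; then              # ".svc" matches subdomains only
      [[ $host == *"$entry" ]] && return 0
    else                                       # "example.com" matches itself and subdomains
      [[ $host == "$entry" || $host == *".$entry" ]] && return 0
    fi
  done
  return 1
}
```

For example: `in_no_proxy <endpoint> "$(oc get proxy/cluster -o jsonpath='{.status.noProxy}')" && echo "endpoint is in noProxy"`.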

3.4. Scenario 2. SSL issues

Review the parameters set in the clusterLogForwarder and verify the actual configuration used by the collector, following the section "Verify the Vector running configuration". Then test with curl using the same parameters as in the Vector configuration.

For instance, for the following configuration, with no proxy configured:

[sinks.output_default_loki_infra]

type = "loki"

inputs = ["output_default_loki_infra_remap_label"]

endpoint = "https://logging-loki-gateway-http.openshift-logging.svc:8080/api/logs/v1/infrastructure"

--- OUTPUT OMITTED ---

[sinks.output_default_loki_infra.tls]

min_tls_version = "VersionTLS12"

ciphersuites = "TLS_AES_128_GCM_SHA256,TLS_AES_256_GCM_SHA384,TLS_CHACHA20_POLY1305_SHA256,ECDHE-ECDSA-AES128-GCM-SHA256,ECDHE-RSA-AES128-GCM-SHA256,ECDHE-ECDSA-AES256-GCM-SHA384,ECDHE-RSA-AES256-GCM-SHA384,ECDHE-ECDSA-CHACHA20-POLY1305,ECDHE-RSA-CHACHA20-POLY1305,DHE-RSA-AES128-GCM-SHA256,DHE-RSA-AES256-GCM-SHA384"

ca_file = "/var/run/secrets/kubernetes.io/serviceaccount/service-ca.crt"

# Bearer Auth Config

[sinks.output_default_loki_infra.auth]

strategy = "bearer"

token = "xxxxx"

The test with curl could be:

$ token="<xxxxx>"

$ curl -vvv --cacert /var/run/secrets/kubernetes.io/serviceaccount/service-ca.crt -H "Authorization: Bearer ${token}" https://logging-loki-gateway-http.openshift-logging.svc:8080/api/logs/v1/infrastructure
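To avoid copying the TLS options by hand, you can turn a `[sinks.<name>.tls]` block into equivalent curl flags. The mapping in this sketch is an approximation:

- `min_tls_version` maps to `--tlsv1.x`, which in curl means that version or later.
- `ca_file`, `crt_file` and `key_file` map to `--cacert`, `--cert` and `--key`.
- `verify_certificate = false` maps to `--insecure`.
- TLS 1.3 suite names (`TLS_*`) are passed with `--tls13-ciphers`, and the rest with `--ciphers`.

Options such as `key_pass` or `verify_hostname` are ignored. Both function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: print one [sinks.<name>.tls] block of a vector.toml, and
# translate it into curl flags (one argument per line).

# Usage: vector_tls_block <sink name> < vector.toml
vector_tls_block() {
  awk -v hdr="[sinks.$1.tls]" '$0 == hdr { p = 1; next } /^\[/ { p = 0 } p'
}

# Usage: vector_tls_to_curl_flags < tls block
vector_tls_to_curl_flags() {
  local re='^[[:space:]]*([a-z_]+)[[:space:]]*=[[:space:]]*"?([^"]*)"?[[:space:]]*$'
  local line key val c
  local -a suites t13 t12
  while IFS= read -r line; do
    [[ $line =~ $re ]] || continue
    key=${BASH_REMATCH[1]} val=${BASH_REMATCH[2]}
    case $key in
      min_tls_version)
        case $val in
          VersionTLS10) printf '%s\n' --tlsv1.0 ;;
          VersionTLS11) printf '%s\n' --tlsv1.1 ;;
          VersionTLS12) printf '%s\n' --tlsv1.2 ;;
          VersionTLS13) printf '%s\n' --tlsv1.3 ;;
        esac ;;
      ciphersuites)
        t13=() t12=()
        IFS=, read -r -a suites <<< "$val"
        for c in "${suites[@]}"; do
          if [[ $c == TLS_* ]]; then t13+=("$c"); else t12+=("$c"); fi
        done
        if (( ${#t13[@]} )); then printf '%s\n' --tls13-ciphers "$(IFS=:; echo "${t13[*]}")"; fi
        if (( ${#t12[@]} )); then printf '%s\n' --ciphers "$(IFS=:; echo "${t12[*]}")"; fi ;;
      ca_file)  printf '%s\n' --cacert "$val" ;;
      crt_file) printf '%s\n' --cert "$val" ;;
      key_file) printf '%s\n' --key "$val" ;;
      verify_certificate) if [[ $val == false ]]; then printf '%s\n' --insecure; fi ;;
    esac
  done
}
```

With the configuration saved as `vector.toml`, the flags can be loaded into an array and passed to curl: `mapfile -t flags < <(vector_tls_block output_default_loki_infra < vector.toml | vector_tls_to_curl_flags)`, then `curl -vvv "${flags[@]}" -H "Authorization: Bearer ${token}" <endpoint>`.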

3.5. Scenario 3. UDP

Syslog can be configured to use UDP. UDP does not confirm the delivery of packets. This means that no error appears in the collector logs when the collector is not able to deliver the packets to the destination.

One way to verify whether the network packets are sent and received is to run tcpdump on both sides: the collector and the endpoint receiving the logs. This shows whether the collector is sending the packets and whether the other side is receiving them.

If the collector sends the packets and the endpoint does not receive them, review whether something in between is intercepting or dropping the packets.
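To generate traffic that you can match in both tcpdump captures, send a single test message over UDP. This is a minimal sketch. It assumes bash is available where it runs, because the `/dev/udp/<host>/<port>` redirection is a bash feature. It also assumes the receiver accepts RFC 5424 messages. The priority `<14>` is facility user (1) and severity informational (6). The helper names and the `vector-udp-test` app name are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: build an RFC 5424 syslog line and send it once over UDP.

# Usage: syslog_test_message <hostname> <app name> <text>
syslog_test_message() {
  # PRI 14 = facility user (1) * 8 + severity informational (6); VERSION 1;
  # "-" for PROCID, MSGID and STRUCTURED-DATA.
  printf '<14>1 %s %s %s - - - %s' "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$1" "$2" "$3"
}

# Usage: send_udp_test <endpoint> <port> <text>
send_udp_test() {
  syslog_test_message "${HOSTNAME:-localhost}" vector-udp-test "$3" > "/dev/udp/$1/$2"
}
```

For example, `send_udp_test <endpoint> <port> "connectivity test"` while both tcpdump captures are running. Then look for `vector-udp-test` in both captures.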

3.6. Scenario 4. Auth errors

If a username and password are used, use curl with the -u option:

$ oc -n <namespace> rsh <collector pod>

$ user=$(cat /path/to/user/file)

$ passwd=$(cat /path/to/password/file)

$ curl -vvv -u "$user:$passwd" <endpoint>:<port>...

If a token is used, use curl with the option -H "Authorization: Bearer $token":

$ oc -n <namespace> rsh <collector pod>

// if the token is directly in the configuration

$ token="<token value>"

or

// if the token is in a file

$ token=$(cat /path/to/token/file)

or

// if the token is in an environment variable

$ token="$<environment variable name>"

$ curl -vvv -H "Authorization: Bearer $token" <endpoint>:<port>...
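The HTTP status code alone often shows whether the problem is authentication or authorization. The sketch below uses curl's standard `-w '%{http_code}'` write-out option. The mapping from codes to hints follows general HTTP semantics and is not specific to any log receiver. The helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: get only the HTTP status code, and print a hint for it.

# Usage: http_status <curl options...> <url>   (prints 000 when no HTTP response was received)
http_status() {
  curl -sS -o /dev/null -w '%{http_code}' "$@"
}

# Usage: explain_status <code>
explain_status() {
  case $1 in
    000) echo "no HTTP response: check DNS, proxy, firewall and TLS with curl -vvv" ;;
    2??) echo "reachable and accepted" ;;
    401) echo "authentication failed: check the user/password or the token" ;;
    403) echo "authenticated but not authorized: check the permissions of the user or token" ;;
    *)   echo "HTTP $1: review the response body with curl -vvv" ;;
  esac
}
```

For example: `explain_status "$(http_status -H "Authorization: Bearer $token" <endpoint>:<port>)"`.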

NOTE: If UDP is used, there is no confirmation of the log delivery, so no errors are visible in the collectors when the logs cannot be delivered. One way to confirm whether the collector is sending the data to the destination is to take a tcpdump inside the collector pod. Review the section "Get the configurations, logs, alerts, metrics" in the Global overview.

3.7. Scenario 5. Test the service

For some of the output types supported by the collector, it is also possible to validate that the service is answering.

For Elasticsearch:

// Testing that an Elasticsearch is available and the service answering

$ curl -vvv <elasticsearch>:<port>...

{

"name" : "example.com",

"cluster_name" : "elasticsearch",

"cluster_uuid" : "DX-RpiA0Q4Wm0e2-lKj6rw",

---OUTPUT OMITTED---

}

// Testing that indices can be created:

$ curl -XPUT <elasticsearch>:<port>/audit-write -H 'Content-Type: application/json' -d' { "settings": { "index": { "number_of_replicas": 0, "number_of_shards": 1 }}}'...

For Splunk:

$ curl "<endpoint>:<port>/services/collector/event" \
    -H "Authorization: Splunk ${HEC_TOKEN}" \
    -d '{"event": "Pony 1 has left the barn", "index": "<INDEX_NAME>"}{"event": "Pony 2 has left the barn", "index": "<INDEX_NAME>"}{"event": "Pony 3 has left the barn", "nested": {"key1": "value1"}, "index": "<INDEX_NAME>"}'
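For Elasticsearch, the standard `_cluster/health` API gives a quick view of the cluster state. The minimal sketch below extracts the `status` field (green, yellow or red) with sed, so it does not depend on jq inside the pod. `es_health_status` is a hypothetical name, and the curl options are the same placeholders as above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the "status" value of an Elasticsearch _cluster/health response.
# Usage: curl -sS <curl options> <elasticsearch>:<port>/_cluster/health | es_health_status
es_health_status() {
  sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p'
}
```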

4. Too many logs

Root cause:

The phrase “Too many logs” can be ambiguous. Usually, these “too many logs” come from:

- Increased workloads in the OpenShift cluster

- Pods deployed with debug or very verbose log levels

- Failures in the nodes or pods

- Upgrade activities, which by themselves generate a spike of logs while they run

Diagnosis:

The first step is to identify which workloads most of the logs are coming from and being collected from. This requires the LogFileMetricExporter to be installed and configured.

To identify the containers producing the most logs, review the article "Identify the namespaces that are producing the most amount of Logs in RHOCP4".

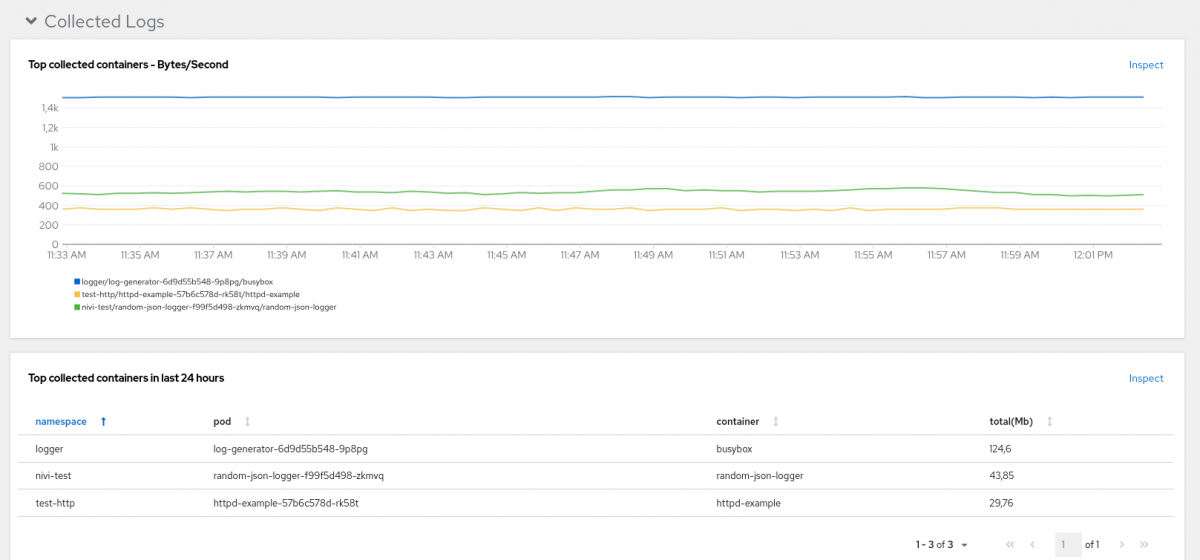

To identify the containers from which the most logs are collected, go to the OCP Console > Observe > Dashboards > Dashboard: Logging / Collection, and review the "Top collected containers" metrics in the Collected Logs section.

Note: if the Dashboard Logging / Collection is not available, read the article "Logging Collection Dashboard is not visible in RHOCP 4".
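With the LogFileMetricExporter installed, you can also query the top producers directly in Observe > Metrics. This is a hedged sketch: it assumes the exporter publishes a counter named `log_logged_bytes_total` with a `namespace` label, so verify the metric and label names in Observe > Metrics first. The helper only builds the PromQL string, and its name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: PromQL for the N biggest log producers over a time window.
# ASSUMPTION: metric log_logged_bytes_total with labels such as namespace/podname/containername.
# Usage: top_log_producers_query [N] [label] [window]
top_log_producers_query() {
  local n=${1:-10} by=${2:-namespace} window=${3:-1h}
  printf 'topk(%s, sum by (%s) (rate(log_logged_bytes_total[%s])))' "$n" "$by" "$window"
}
```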

Actions:

Once the workload generating most of the logs is identified, review the content of its logs to identify whether:

- the logs are valid and no further action is needed

- an error is present and the workload is not working normally, which is why it produces so many logs

- the workload is running with log level debug

- debug logs are printed as INFO. An example is in the article “pods in openshift-vsphere-infra namespace so much verbose in RHOCP 4”

Filter the logs:

Articles of interest:

- "How to reduce the logs in OpenShift Logging when there are too many logs"

- "Vector sends duplicated the logs when log forwarding to syslog in RHOL"

- "Vector duplicates fields log forwarding to syslog in RHOCP 4"

NOTE: If the log source is audit/infrastructure, Red Hat Support can help with it, although the first analysis should be done by the OpenShift administrator. If the log source is an application, the analysis is entirely on the OpenShift administrator side.

5. Not able to see logs

First, identify the scenario. Some of them are:

- No logs from any collector from the cluster visible in one of the outputs

- No logs from one or some collectors from the cluster

- Incomplete audit logs when log forwarding to syslog

Diagnosis:

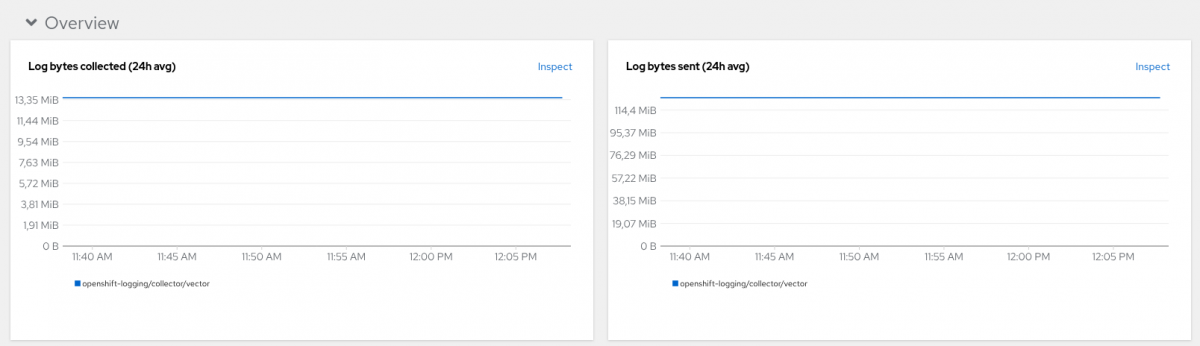

Go to the OCP Console > Observe > Dashboards > Dashboard: Logging / Collection, in particular the panels:

- Log bytes collected (24h avg) and Log bytes sent (24h)

- Total errors last 60m

Check the logs from the collectors looking for any error at the moment of the issue:

// In Logging 5

$ for pod in $(oc get pods -l component=collector -o name -n <namespace> ); do oc logs $pod -n <namespace> |grep -i error; done

// In Logging 6

$ for pod in $(oc get pods -l app.kubernetes.io/component=collector -o name -n <namespace> ); do oc logs $pod -n <namespace> |grep -i error; done

In real time, run vector top and vector tap. Read the article "How to use vector tap and vector top for troubleshooting in RHOCP 4".

5.1. Scenario 1. No logs from any collector from the cluster visible in one of the outputs

If there is any problem log forwarding to one of the destinations and the collector buffer gets full, the default policy is to block, and the collector stops reading more logs. More details in:

a. Article "Collector stops of log forwarding when one of the outputs reaches the queue size limit in RHOCP 4"

b. Article "Multi log forwarder feature use cases", section "For what's not the multi log forwarder feature? Scenario 3"

c. Article "Tuning log payloads and delivery in RHOCP 4"

5.2. Scenario 2. No logs from one or some collectors from the cluster

N/A

5.3. Scenario 3. Incomplete logs forwarded to syslog

Read the articles:

- "ClusterLogForwarder is not sending full audit logs to external rsyslog"

- "Vector splits the logs when log forwarding to syslog in RHOCP 4"

5.4. Additional resources

- "Why does the Red Hat Logging stack not guarantee log sink?"

- "Reducing log loss during node scaledown by ensuring Vector terminates last"

6. Metrics

6.1. Dashboards

A dashboard is available in OpenShift Console > Observe > Dashboards > Dashboard: Logging / Collection.

Note: if the Dashboard Logging / Collection is not available, read the article "Logging Collection Dashboard is not visible in RHOCP 4".

If the "Produced Logs" panel of the Logging / Collection dashboard shows "No datapoints found", check whether the LogFileMetricExporter resource is configured.

6.2. Vector's per-component memory usage

Review article: "How to monitor Vector's per-component memory usage in RHOL"

6.3. Other metrics

CPU Usage for the collector pods.

pod:container_cpu_usage:sum{pod=~'collector.*',namespace='openshift-logging'}

Memory Usage for the collector pods.

sum(container_memory_working_set_bytes{pod=~'collector.*',namespace='openshift-logging'}) BY (pod, namespace)

Note: In the two previous queries, change the collector.* pod name pattern and the namespace value if the collector pods have a different name or run in a different namespace.

Log bytes sent by output type (5m rate, Loki output)

sum by (component_name) (rate(vector_component_sent_bytes_total{component_type="loki", component_kind="sink"}[5m]))

Log events sent by output type (5m rate, Loki output)

sum by (component_id) (rate(vector_buffer_sent_events_total{component_kind="sink",component_type="loki"}[5m]))

Rate of discarding buffered events by output (irate, Loki output)

sum by(component_name) (irate(vector_buffer_discarded_events_total{component_kind="sink",component_type="loki"}[2m]))

Note: In the previous queries, change component_type if the output is not Loki
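You can generate the three queries above for any sink type. This sketch only substitutes `component_type`, for example `elasticsearch`, `http`, `splunk_hec_logs` or `socket`, depending on the `type` that appears for the sink in the Vector running configuration. The queries are otherwise the ones above. The helper name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the sent-bytes, sent-events and discarded-events queries above
# for a given Vector sink type (the "type" of the sink in the Vector running configuration).
# Usage: sink_queries <component_type>
sink_queries() {
  local t=$1
  printf 'sum by (component_name) (rate(vector_component_sent_bytes_total{component_type="%s", component_kind="sink"}[5m]))\n' "$t"
  printf 'sum by (component_id) (rate(vector_buffer_sent_events_total{component_kind="sink",component_type="%s"}[5m]))\n' "$t"
  printf 'sum by(component_name) (irate(vector_buffer_discarded_events_total{component_kind="sink",component_type="%s"}[2m]))\n' "$t"
}
```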

7. Transition from Logging v5 to Logging v6

Some useful resources:

- "How to transition the collectors and the default log store from Red Hat OpenShift Logging 5 to 6"

- "How to migrate Vector checkpoints in RHOCP 4"

- "429 response errors and duplicated logs when upgrading to Logging v6 in RHOCP 4"

- "Configure Tolerations, NodeSelector & Resources for collector pods in OpenShift Logging 6"

- "Migrating the default log store from Elasticsearch to Loki in OCP 4"

- "How to configure the clusterLogForwarder to log forward to the Red Hat Managed Elasticsearch in Red Hat OpenShift Logging 6"

8. detectMultilineException / detectMultilineErrors

Some useful resources:

- "How detectMultilineErrors or detectMultilineException works in Logging stack with RHOCP 4"

- "Logs with detectMultilineException option enabled not properly parsed in RHOCP 4"